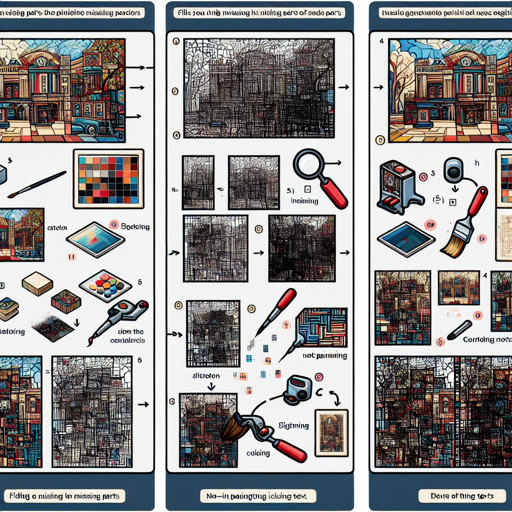

Have you ever wanted to fill in missing or unwanted parts of an image, or replace a region with new content described in a text prompt? With the SD3 ControlNet Inpainting model, you can do just that. Let's walk through the steps to get started.

What is SD3 ControlNet Inpainting?

SD3 ControlNet Inpainting is a ControlNet model trained for Stable Diffusion 3 (SD3) Medium that lets you edit images by inpainting: given an image and a mask, it regenerates the masked area while keeping it consistent with the style and context of the surrounding pixels. Think of it as a virtual artist who can seamlessly blend new elements into your artwork without disturbing the rest of it. The SD3 base model does the actual image generation; the ControlNet steers it toward the original image and mask.
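In practice, the region to fill is described by a mask image the same size as the input. The sketch below builds a rectangular mask as a plain nested list. It assumes the common inpainting convention that 255 (white) marks pixels to regenerate and 0 (black) marks pixels to keep; check the example `dog_mask.png` in the model repository to confirm which convention this model expects.

```python
def rectangular_mask(width, height, box):
    """Return a height x width grid with 255 inside `box` and 0 elsewhere.

    `box` is (left, top, right, bottom); right and bottom are exclusive.
    Assumption: 255 = region to inpaint, 0 = region to keep.
    """
    left, top, right, bottom = box
    if not (0 <= left < right <= width and 0 <= top < bottom <= height):
        raise ValueError("box must lie inside the image")
    return [
        [255 if left <= x < right and top <= y < bottom else 0 for x in range(width)]
        for y in range(height)
    ]


mask = rectangular_mask(8, 6, (2, 1, 5, 4))
for row in mask:
    # '#' = pixel to regenerate, '.' = pixel to keep
    print("".join("#" if value else "." for value in row))
```

For real images you would draw the same shape on a single-channel Pillow image instead of a nested list; the geometry is identical.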

Advantages of Using SD3 ControlNet Inpainting

- Preserves the non-inpainted regions, including any text they contain.

- Can generate text within the inpainted region.

- Performs especially well at generating aesthetically pleasing portraits.
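One way to check the first claim on your own outputs is to compare the input and the result pixel by pixel outside the mask. The hypothetical helper below works on grayscale images stored as nested lists and reports the mean absolute difference over the kept pixels; a value near 0 means the surrounding content was preserved. It assumes a mask value of 0 marks pixels to keep, and that both images have already been loaded at the same resolution.

```python
def kept_region_difference(original, result, mask):
    """Mean absolute difference between `original` and `result` over the
    pixels where `mask` is 0.

    All three arguments are equally sized 2-D lists of grayscale values.
    Assumption: mask value 0 marks pixels that should be preserved.
    """
    if not (len(original) == len(result) == len(mask)):
        raise ValueError("images must have the same height")
    total, count = 0, 0
    for row_o, row_r, row_m in zip(original, result, mask):
        if not (len(row_o) == len(row_r) == len(row_m)):
            raise ValueError("images must have the same width")
        for o, r, m in zip(row_o, row_r, row_m):
            if m == 0:  # only compare pixels outside the inpainted region
                total += abs(o - r)
                count += 1
    if count == 0:
        raise ValueError("mask leaves no pixels to compare")
    return total / count


original = [[10, 20, 30], [40, 50, 60]]
result = [[10, 20, 99], [41, 50, 99]]  # right column was inpainted
mask = [[0, 0, 255], [0, 0, 255]]
print(kept_region_difference(original, result, mask))  # 0.25
```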

Getting Started with SD3 ControlNet Inpainting

Step 1: Upgrade Diffusers

Before using the model, make sure you have the latest version of the Diffusers library (the script in Step 3 requires at least 0.29.2):

```
pip install -U diffusers
```

Step 2: Download Required Python Files

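Download the two Python files that the Step 3 script imports, `controlnet_sd3.py` and `pipeline_stable_diffusion_3_controlnet_inpainting.py`, from the project's GitHub repository, and place them in your working directory. The script cannot run without them.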
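Because the script imports these files as modules, a quick way to confirm they are in the right place is to ask Python whether it can find them before loading any model weights. A minimal sketch using only the standard library; the module names come from the import statements in the Step 3 script.

```python
import importlib.util

# Module names taken from the import statements in the Step 3 script.
REQUIRED_MODULES = [
    "controlnet_sd3",
    "pipeline_stable_diffusion_3_controlnet_inpainting",
]


def missing_modules(names):
    """Return the names that cannot be found on the current Python path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


missing = missing_modules(REQUIRED_MODULES)
if missing:
    print("Not found (place the .py files next to your script):", ", ".join(missing))
else:
    print("All required files are importable.")
```

`find_spec` only locates the module without executing it, so this check is fast and has no side effects.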

Step 3: Set Up Your Environment

After downloading the required files, run the following script. It loads the ControlNet and the SD3 pipeline, loads an example image and mask, and runs inpainting:

```python
import torch
from diffusers.utils import check_min_version, load_image

# Fail early if Diffusers is too old (checked before importing the custom files)
check_min_version("0.29.2")

# The two files downloaded in Step 2 must be importable from the working directory
from controlnet_sd3 import SD3ControlNetModel
from pipeline_stable_diffusion_3_controlnet_inpainting import (
    StableDiffusion3ControlNetInpaintingPipeline,
)

# Build model: the inpainting ControlNet plus the SD3 Medium base pipeline
controlnet = SD3ControlNetModel.from_pretrained(
    "alimama-creative/SD3-Controlnet-Inpainting",
    use_safetensors=True,
    extra_conditioning_channels=1,  # one extra conditioning channel (the mask)
)
pipe = StableDiffusion3ControlNetInpaintingPipeline.from_pretrained(
    "stabilityai/stable-diffusion-3-medium-diffusers",
    controlnet=controlnet,
    torch_dtype=torch.float16,
)
pipe.text_encoder.to(torch.float16)
pipe.controlnet.to(torch.float16)
pipe.to("cuda")

# Load the source image and its mask
image = load_image("https://huggingface.co/alimama-creative/SD3-Controlnet-Inpainting/resolve/main/images/dog.png")
mask = load_image("https://huggingface.co/alimama-creative/SD3-Controlnet-Inpainting/resolve/main/images/dog_mask.png")

# Set parameters (the model was trained at 1024x1024)
width = 1024
height = 1024
prompt = "A cat is sitting next to a puppy."
generator = torch.Generator(device="cuda").manual_seed(24)  # fixed seed for reproducible output

# Run inference
res_image = pipe(
    negative_prompt="deformed, distorted, disfigured, poorly drawn, bad anatomy, wrong anatomy, extra limb, missing limb, floating limbs, mutated hands and fingers, disconnected limbs, mutation, mutated, ugly, disgusting, blurry, amputation, NSFW",
    prompt=prompt,
    height=height,
    width=width,
    control_image=image,
    control_mask=mask,
    num_inference_steps=28,
    generator=generator,
    controlnet_conditioning_scale=0.95,
    guidance_scale=7,
).images[0]

res_image.save("sd3.png")
```

Understanding the Code

Imagine you are an artist preparing your canvas:

- First, you check that you have the right tools (the Diffusers library).

- You then gather the necessary paints (the Python files) to enhance your artwork.

- Next, you carefully sketch out your image (loading the model and images).

- Finally, you apply the colors (running the inference) to bring your vision to life, ensuring everything blends seamlessly.
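The analogy glosses over the numeric arguments. `guidance_scale=7` controls classifier-free guidance: at each denoising step, Diffusers pipelines predict once with the prompt and once with the negative prompt, then push the result away from the negative prediction. The sketch below shows that standard combination on plain lists of numbers; it illustrates the general formula, not this project's exact code. `controlnet_conditioning_scale=0.95` is a separate knob: in Diffusers' ControlNet implementations it multiplies the ControlNet's outputs before they are added to the base model, so 0.95 presumably softens the image-and-mask conditioning slightly.

```python
def classifier_free_guidance(noise_uncond, noise_cond, guidance_scale):
    """Combine two noise predictions element-wise:
    uncond + guidance_scale * (cond - uncond)."""
    if len(noise_uncond) != len(noise_cond):
        raise ValueError("predictions must have the same length")
    return [u + guidance_scale * (c - u) for u, c in zip(noise_uncond, noise_cond)]


uncond = [0.0, 1.0, -0.5]  # prediction with the negative prompt
cond = [0.5, 1.0, 0.0]     # prediction with the prompt
print(classifier_free_guidance(uncond, cond, 7))  # [3.5, 1.0, 3.0]
```

With `guidance_scale=1` the result equals the prompt-conditioned prediction; larger values follow the prompt more strongly, usually at the cost of variety.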

Troubleshooting

If you encounter issues while running the SD3 ControlNet Inpainting, consider these troubleshooting tips:

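- Make sure the two downloaded Python files are in your working directory and that the image URLs are reachable from your machine.

- Check that your CUDA setup works and that PyTorch can see your GPU; the script moves the pipeline to `cuda`.

- Make sure you are using Diffusers 0.29.2 or newer, the minimum the script checks for.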

- If you still face problems, you can always reach out for more support or ideas.
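The version and CUDA checks can be scripted. The sketch below reads the installed Diffusers version with `importlib.metadata`, compares it with 0.29.2 (the minimum the script's `check_min_version` call enforces), and then asks PyTorch whether CUDA is usable. It only reports problems. The simple version parser is an assumption: it keeps the first three numeric components and ignores suffixes such as `.dev0`.

```python
import re
from importlib import metadata

MIN_DIFFUSERS = (0, 29, 2)


def parse_version(text):
    """Turn '0.29.2' or '0.30.0.dev0' into a 3-tuple of ints.
    Simplification: only the leading digits of each of the first three parts count."""
    parts = []
    for piece in text.split(".")[:3]:
        match = re.match(r"\d+", piece)
        parts.append(int(match.group()) if match else 0)
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def diagnose():
    """Return a list of human-readable problems (empty if none were found)."""
    problems = []
    try:
        installed = metadata.version("diffusers")
    except metadata.PackageNotFoundError:
        problems.append("diffusers is not installed (pip install -U diffusers)")
    else:
        if parse_version(installed) < MIN_DIFFUSERS:
            problems.append(f"diffusers {installed} is older than 0.29.2")
    try:
        import torch
    except ImportError:
        problems.append("PyTorch is not installed")
    else:
        if not torch.cuda.is_available():
            problems.append("CUDA is not available, but the script calls pipe.to('cuda')")
    return problems


if __name__ == "__main__":
    for issue in diagnose() or ["No problems found."]:
        print(issue)
```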

For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.

Training Details

The model was trained on a large image dataset at a single high resolution. Keep the following in mind:

- Trained on 12M images drawn from LAION-2B and internal sources.

- Learning rate of 1e-4 and a batch size of 192.

- It performs best at a resolution of 1024×1024.

Limitations

Because the model was trained only at 1024×1024, results at other resolutions can differ significantly in quality. Future updates are planned to address this.
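In the meantime, one hypothetical workaround for non-square inputs is to scale the image so its longer side is 1024 pixels, centre it on a 1024×1024 canvas, inpaint, and then crop the padding away. The helper below only computes that geometry with integer arithmetic; it is an assumption, not part of the released code, and it presumes the padded border of the mask is filled with the "keep" value so the model leaves it alone.

```python
def letterbox_geometry(width, height, target=1024):
    """Scale (width, height) so the longer side equals `target`, then centre it
    on a target x target canvas.

    Returns (new_w, new_h, pad_left, pad_top). The inpainted result can be
    cropped back with the box (pad_left, pad_top, pad_left + new_w, pad_top + new_h).
    """
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    longest = max(width, height)
    # Integer rounding (half up) avoids floating-point surprises
    new_w = (width * target + longest // 2) // longest
    new_h = (height * target + longest // 2) // longest
    pad_left = (target - new_w) // 2
    pad_top = (target - new_h) // 2
    return new_w, new_h, pad_left, pad_top


print(letterbox_geometry(1536, 768))  # (1024, 512, 0, 256)
```

With Pillow, the canvas could be built with `Image.new`, the resized image placed with `paste` at `(pad_left, pad_top)`, and the output trimmed with `crop`; whether letterboxing gives better results than direct resizing for this model is untested here.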

Final Thoughts

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.