In this post, we walk through fine-tuning an image classification model on the Food101 dataset with the PyTorch framework. We cover the model, the training hyperparameters, the evaluation metrics, and common issues that may arise during the process.

Understanding the Model and Its Performance

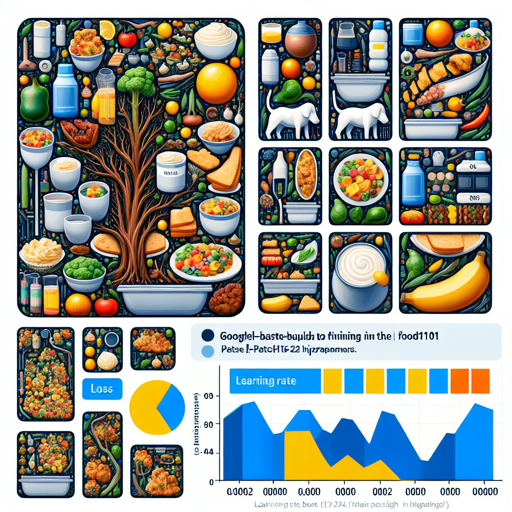

The fine-tuned model is based on google/vit-base-patch16-224-in21k, a Vision Transformer (ViT) checkpoint. Upon evaluation, this model achieved an accuracy of 89.13% on the Food101 dataset.

The performance can be summarized as follows:

- Loss: 0.4501

- Accuracy: 0.8913
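To make the two metrics concrete, here is a minimal sketch of how a loss and a top-1 accuracy could be computed from a batch of model outputs in PyTorch. It assumes the reported loss is a mean cross-entropy, which the article does not state explicitly; the helper name `evaluate_batch` and the toy logits are hypothetical.

```python
import torch
import torch.nn.functional as F

def evaluate_batch(logits, labels):
    """Return (mean cross-entropy loss, top-1 accuracy) for one batch."""
    loss = F.cross_entropy(logits, labels).item()
    # A prediction is correct when the highest-scoring class matches the label.
    accuracy = (logits.argmax(dim=-1) == labels).float().mean().item()
    return loss, accuracy

# Toy batch: 4 examples, 3 classes (Food101 itself has 101 classes).
logits = torch.tensor([[2.0, 0.5, 0.1],
                       [0.2, 1.5, 0.3],
                       [0.1, 0.2, 3.0],
                       [1.0, 0.9, 0.8]])
labels = torch.tensor([0, 1, 2, 1])

loss, accuracy = evaluate_batch(logits, labels)
print(f"loss={loss:.4f} accuracy={accuracy:.2f}")
```

In a real evaluation loop, the loss and the number of correct predictions would be accumulated over all batches before averaging.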

Training Procedure and Hyperparameters

The choice of hyperparameters has a large effect on the final accuracy. The following values were used during training:

- Learning Rate: 0.0002

- Train Batch Size: 128

- Eval Batch Size: 128

- Seed: 1337

- Optimizer: Adam (betas=(0.9, 0.999), epsilon=1e-08)

- Learning Rate Scheduler Type: Linear

- Number of Epochs: 5

- Mixed Precision Training: Native AMP
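The settings above can be wired together in plain PyTorch roughly as follows. This is a sketch under stated assumptions, not the original training script: the small `nn.Linear` model and the random batch are hypothetical stand-ins for the ViT classifier and real data, the linear schedule is assumed to decay to zero with no warmup, and the total of 2960 steps is taken from the reported results (5 epochs of 592 steps).

```python
import torch
from torch import nn

torch.manual_seed(1337)  # seed from the list above

# Hypothetical stand-in for the ViT classifier so the sketch runs anywhere.
model = nn.Linear(16, 101)  # 101 output classes, as in Food101
total_steps = 2960          # 5 epochs x 592 steps, from the reported results

optimizer = torch.optim.Adam(
    model.parameters(), lr=2e-4, betas=(0.9, 0.999), eps=1e-8
)

# Linear schedule: multiply the base rate by a factor that falls from 1 to 0.
# Assumption: no warmup phase.
scheduler = torch.optim.lr_scheduler.LambdaLR(
    optimizer, lr_lambda=lambda step: max(0.0, 1.0 - step / total_steps)
)

device = "cuda" if torch.cuda.is_available() else "cpu"
use_amp = device == "cuda"  # mixed precision is only enabled on a GPU here
model.to(device)
scaler = torch.cuda.amp.GradScaler(enabled=use_amp)
loss_fn = nn.CrossEntropyLoss()

def train_step(inputs, labels):
    """Run one optimizer step, using native AMP when a GPU is available."""
    optimizer.zero_grad(set_to_none=True)
    with torch.autocast(device_type=device, enabled=use_amp):
        loss = loss_fn(model(inputs.to(device)), labels.to(device))
    scaler.scale(loss).backward()  # scaling is a no-op when AMP is disabled
    scaler.step(optimizer)
    scaler.update()
    scheduler.step()
    return loss.item()

# Random tensors stand in for one batch of 128 examples.
loss = train_step(torch.randn(128, 16), torch.randint(0, 101, (128,)))
print(loss, optimizer.param_groups[0]["lr"])
```

After one step, the learning rate has already dropped slightly below 0.0002, which is what a linear decay without warmup looks like.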

Model Result Summary

Below is a table summarizing the validation loss and accuracy at the end of each epoch:

Epoch | Step | Validation Loss | Accuracy
------|------|-----------------|---------
1.0 | 592 | 0.6070 | 0.8562
2.0 | 1184 | 0.4947 | 0.8691
3.0 | 1776 | 0.4876 | 0.8747
4.0 | 2368 | 0.4639 | 0.8857
5.0 | 2960 | 0.4501 | 0.8913
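The step counts in the table can be sanity-checked with a little arithmetic. The sketch below assumes the standard Food-101 training split of 75,750 images (101 classes with 750 images each), which the article does not state; with a batch size of 128, that gives the 592 steps per epoch shown above. It also prints how much each epoch changed the validation loss and accuracy.

```python
import math

# Assumption: the standard Food-101 training split (101 classes x 750 images).
train_images = 101 * 750
batch_size = 128  # train batch size from the hyperparameter list
epochs = 5

steps_per_epoch = math.ceil(train_images / batch_size)
total_steps = steps_per_epoch * epochs
print(steps_per_epoch, total_steps)  # 592 2960

# (epoch, validation loss, accuracy) copied from the table above
rows = [(1, 0.6070, 0.8562), (2, 0.4947, 0.8691), (3, 0.4876, 0.8747),
        (4, 0.4639, 0.8857), (5, 0.4501, 0.8913)]

# Compare each epoch with the previous one.
for (_, prev_loss, prev_acc), (epoch, loss, acc) in zip(rows, rows[1:]):
    print(f"epoch {epoch}: loss {loss - prev_loss:+.4f}, accuracy {acc - prev_acc:+.4f}")
```

The validation loss is still falling at epoch 5, which suggests the run had not yet started to overfit.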

Analogy: Understanding Model Fine-tuning

Think of fine-tuning a machine learning model like training a chef to perfect a recipe. Initially, the chef has a general understanding of cooking (the pre-trained model), and each practice session (a training epoch) sharpens their skills. With each attempt, the chef refines the flavors (the model's weights are updated) and the dish improves (accuracy rises). Just as a chef benefits from tasters' feedback, we use validation loss and accuracy to judge progress and decide whether the recipe itself (the hyperparameters) needs adjusting.

Troubleshooting Common Issues

Throughout the process of fine-tuning, you may encounter some challenges. Here are some common troubleshooting tips:

- Issue: The model is not converging.

- Solution: Check the learning rate; if the loss oscillates or diverges, lowering it often helps.

- Issue: Overfitting observed with high training accuracy but low validation accuracy.

- Solution: Consider using techniques like dropout or early stopping.

- Issue: Slow training speed.

- Solution: Increase the batch size if memory allows or optimize your data pipeline.
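Early stopping, mentioned above as a remedy for overfitting, can be sketched in a few lines of plain Python. The `EarlyStopping` class, the `patience` value, and the "overfit" loss sequence below are hypothetical illustrations; only the first list of losses comes from the results table.

```python
class EarlyStopping:
    """Signal a stop once validation loss fails to improve for `patience` epochs."""

    def __init__(self, patience=2, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def should_stop(self, val_loss):
        if val_loss < self.best - self.min_delta:
            self.best = val_loss   # new best: reset the counter
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1   # no improvement this epoch
        return self.bad_epochs >= self.patience


def stopped_at(losses, patience=2):
    """Return the epoch at which training would stop, or None if it never does."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(losses, start=1):
        if stopper.should_stop(loss):
            return epoch
    return None


reported = [0.6070, 0.4947, 0.4876, 0.4639, 0.4501]  # from the results table
overfit = [0.60, 0.50, 0.52, 0.55, 0.58]             # hypothetical run

print(stopped_at(reported))  # None
print(stopped_at(overfit))   # 4
```

With the reported losses the check never fires, because the validation loss improves every epoch. In the hypothetical run it fires at epoch 4, after two epochs without improvement.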


Conclusion

With the guidance in this article, you should have a clearer understanding of how to fine-tune an image classification model on Food101 using PyTorch. Remember that training a model is as much an art as it is a science: patience and careful tuning will yield the best results.
