In this article, we'll look at how to use the RoBERTa model pre-trained on the Coptic Scriptorium Corpora for POS-tagging and dependency parsing. Specifically, we will cover its implementation and offer troubleshooting ideas to help users overcome...

How to Leverage RoBERTa for Japanese Token Classification

When it comes to understanding languages and their complexities, Natural Language Processing (NLP) has been a game-changer. In this article, we will guide you through using the RoBERTa model pre-trained on Aozora Bunko (青空文庫) texts for tasks such as Part-of-Speech (POS) tagging and...

How to Use the RoBERTa Model for Japanese Token Classification

If you want to strengthen your Japanese language processing, the RoBERTa model pre-trained on texts for POS-tagging and dependency parsing is a strong option. In this article, we will walk you through how to make the most of this model,...

How to Use RoBERTa for Thai Token Classification in NLP

In Natural Language Processing (NLP), the ability to classify the tokens in a text accurately is crucial. Today, we will explore how to use the pre-trained RoBERTa model tailored specifically for the Thai language, known as...

How to Implement the RoBERTa Model for Serbian POS-Tagging and Dependency Parsing

Welcome to your guide to using the RoBERTa model for Serbian text analysis! This article walks you through the steps needed to set up and use the model, so that you can perform POS-tagging and dependency parsing in both Cyrillic and Latin...

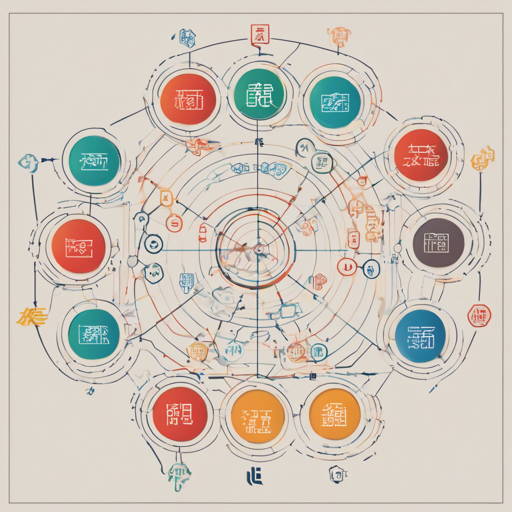

How to Implement and Utilize the Chinese RoBERTa Base UPOS Model for Token Classification

In the world of natural language processing (NLP), understanding syntactic structures is crucial for many applications. If you're looking to enhance your applications with the ability to categorize tokens effectively, you've come to the right place! In this article,...

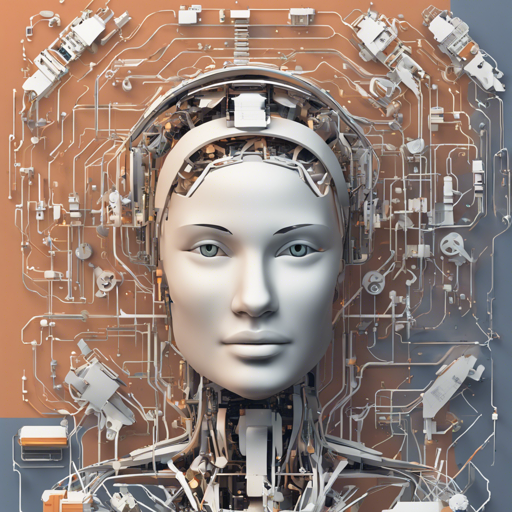

Utilizing the BERT-Large-German-UPOS Model for Token Classification

In the world of natural language processing (NLP), understanding the structure of language is key to creating effective AI systems. One such sophisticated tool for tackling this challenge is the BERT-Large-German-UPOS model, tailored for part-of-speech (POS) tagging...

How to Use Koichi Yasuoka’s BERT Model for Japanese Token Classification

In the world of Natural Language Processing (NLP), being able to dissect and analyze text is crucial, especially for languages as nuanced as Japanese. Koichi Yasuoka's BERT model, trained on texts from the Japanese Wikipedia, provides an excellent tool for...

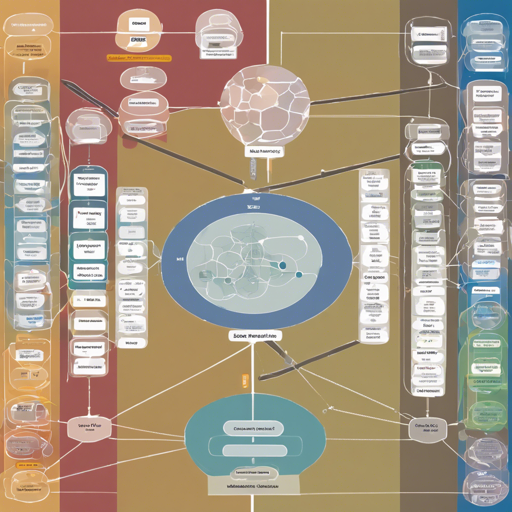

Unlocking the Power of Classical Chinese with RoBERTa

As we journey through the intricate world of Classical Chinese literature, we encounter nuances of language that evoke deep emotions and complex ideas. Recently, a remarkable tool has emerged to assist researchers and linguists: a RoBERTa model specifically trained...