Are you ready to dive into the fascinating world of image classification using deep learning? In this blog post, we’ll explore a project utilizing the DeepGH dataset to classify anime character hair lengths. Think of it as teaching a computer to recognize various hair lengths in the colorful universe of anime.

What is DeepGH?

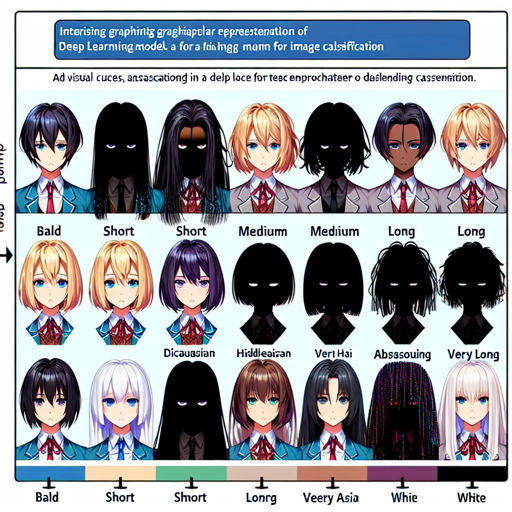

DeepGH stands for “Deep Graphics Hair” and is a dataset specifically designed for classifying hair length in anime characters. It provides a rich set of images along with annotations that allow models to learn from this artistic domain. Each image in the dataset depicts characters with varying hair lengths, categorized as: bald, very short, short, medium, long, very long, and absurdly long.

Understanding the Model Performance

To classify images, several models have been trained on the DeepGH dataset. Each one is described by its computational cost (FLOPS and parameter count) and by its predictive quality (accuracy and AUC). Let’s break down these numbers using an analogy.

An Analogy for Understanding Model Metrics

Imagine you’re hosting a cooking competition where different chefs (models) prepare dishes (classifications) to impress the judges (evaluation metrics).

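- FLOPS (floating-point operations, often written FLOPs): In model comparisons like this one, the figure counts the operations needed for a single forward pass, not operations per second. Think of this as the complexity of the dish. A dish with higher FLOPS requires more steps and more complicated techniques, just like a model that performs many calculations.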

- Params (Parameters): This is akin to the number of ingredients used by the chef. More ingredients (parameters) can lead to a more flavorful dish (performance) but also could complicate the recipe.

- Accuracy: This represents how often the chefs impress the judges with their dishes. Higher accuracy means the chefs are getting more dishes right.

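- AUC (Area Under the Curve, typically the ROC curve): This is a measure of the overall quality of the dishes. It reflects how reliably the model scores the correct class above the wrong ones, independent of any single decision threshold: 1.0 is perfect and 0.5 is no better than chance. A higher AUC means better quality across all judges’ opinions.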
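
To make the last two metrics concrete, here is a minimal sketch in plain Python. The source does not say how the AUC values were computed for this multi-class task, so the macro-averaged one-vs-rest scheme below is an assumption, and the three-class data is invented purely for illustration.

```python
# Toy illustration of accuracy and macro one-vs-rest ROC AUC.
# The averaging scheme and all data below are assumptions, not DeepGH results.

def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted class equals the true class."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def binary_auc(is_positive, scores):
    """ROC AUC as P(random positive outscores random negative); ties count half."""
    pos = [s for flag, s in zip(is_positive, scores) if flag]
    neg = [s for flag, s in zip(is_positive, scores) if not flag]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative sample")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def macro_ovr_auc(y_true, probs):
    """Average the one-vs-rest AUC over all classes."""
    num_classes = len(probs[0])
    aucs = []
    for c in range(num_classes):
        is_positive = [t == c for t in y_true]
        scores = [row[c] for row in probs]
        aucs.append(binary_auc(is_positive, scores))
    return sum(aucs) / num_classes


# Hypothetical 3-class example: true class indices and predicted probabilities.
y_true = [0, 0, 1, 1, 2, 2]
probs = [
    [0.7, 0.2, 0.1],
    [0.4, 0.5, 0.1],
    [0.2, 0.6, 0.2],
    [0.3, 0.4, 0.3],
    [0.1, 0.3, 0.6],
    [0.2, 0.5, 0.3],
]
# The predicted class is the index of the highest probability in each row.
y_pred = [max(range(len(row)), key=row.__getitem__) for row in probs]

toy_accuracy = accuracy(y_true, y_pred)
toy_auc = macro_ovr_auc(y_true, probs)
print(f"accuracy={toy_accuracy:.4f}  macro AUC={toy_auc:.4f}")
```

Accuracy only looks at the top prediction, while AUC looks at how the scores rank across all samples, so the two metrics do not always move together.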

Model Metrics Overview

Here are the metrics for various models used in classifying hair length:

| Model Name | FLOPS | Params | Accuracy | AUC |
|---|---|---|---|---|
| caformer_s36_raw | 22.10G | 37.22M | 66.85% | 0.9133 |
| caformer_s36_v0 | 22.10G | 37.22M | 68.60% | 0.9095 |
| mobilenetv3_v0_dist | 0.63G | 4.18M | 65.91% | 0.9026 |
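
One way to read these numbers is as a cost versus quality trade-off. The short sketch below only does arithmetic on the figures listed above; the dictionary layout and the `compare` helper are illustrative names, not part of any DeepGH tooling.

```python
# Figures copied from the metrics listed above; the structure is illustrative.
models = {
    "caformer_s36_raw": {"gflops": 22.10, "params_m": 37.22, "accuracy": 66.85, "auc": 0.9133},
    "caformer_s36_v0": {"gflops": 22.10, "params_m": 37.22, "accuracy": 68.60, "auc": 0.9095},
    "mobilenetv3_v0_dist": {"gflops": 0.63, "params_m": 4.18, "accuracy": 65.91, "auc": 0.9026},
}


def compare(small_name, large_name):
    """Relative cost of the smaller model and the accuracy it gives up."""
    small, large = models[small_name], models[large_name]
    return {
        "flops_ratio": small["gflops"] / large["gflops"],
        "params_ratio": small["params_m"] / large["params_m"],
        "accuracy_gap": large["accuracy"] - small["accuracy"],
    }


result = compare("mobilenetv3_v0_dist", "caformer_s36_v0")
print(f"FLOPS:    {result['flops_ratio']:.1%} of the larger model")
print(f"Params:   {result['params_ratio']:.1%} of the larger model")
print(f"Accuracy: {result['accuracy_gap']:.2f} percentage points lower")
```

On these figures, mobilenetv3_v0_dist needs roughly 3% of the FLOPS and 11% of the parameters of caformer_s36_v0 while trailing it by about 2.7 accuracy points, which is why a small model can still be the better choice on constrained hardware.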

Visualizing Confusion

Confusion matrices are useful for analyzing how well a model performs across different categories. They tell you where the model excelled and where it stumbled, for example whether “long” tends to be mistaken for “very long”. A confusion matrix is available for each model:

- Caformer S36 Raw Confusion Matrix

- Caformer S36 V0 Confusion Matrix

- MobileNetV3 V0 Dist Confusion Matrix
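
If you want to build a confusion matrix for your own predictions, a minimal sketch could look like the following. The class names come from the dataset description above; the rows-as-true, columns-as-predicted layout is a common convention assumed here, and the sample labels are made up for illustration.

```python
# Class names as listed in the dataset description above.
LABELS = ["bald", "very short", "short", "medium", "long", "very long", "absurdly long"]


def confusion_matrix(y_true, y_pred, labels):
    """Count samples per (true class, predicted class) pair.

    Rows are true classes and columns are predicted classes (assumed convention).
    """
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


def per_class_recall(matrix):
    """Diagonal count divided by row total; None for classes with no samples."""
    recalls = []
    for i, row in enumerate(matrix):
        total = sum(row)
        recalls.append(row[i] / total if total else None)
    return recalls


# Hypothetical labels, for illustration only.
y_true = ["short", "short", "medium", "long", "long", "very long"]
y_pred = ["short", "medium", "medium", "long", "very long", "very long"]

cm = confusion_matrix(y_true, y_pred, LABELS)
recalls = per_class_recall(cm)
for label, recall in zip(LABELS, recalls):
    shown = "n/a" if recall is None else f"{recall:.2f}"
    print(f"{label:>14}: recall {shown}")
```

Off-diagonal counts show which classes get mixed up. With ordered categories like hair length, it is worth checking whether the mistakes cluster in neighboring classes.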

Troubleshooting Tips

If you encounter any issues while working with the DeepGH dataset or models, here are some troubleshooting ideas to consider:

- Model Not Converging: Check the training hyperparameters first. A learning rate that is too high can make the loss oscillate or diverge, while one that is too low can make training stall, so try adjusting it.

- Poor Accuracy: This could be due to insufficient training data or a model whose capacity does not match the task. Try augmenting your dataset or using a different model architecture.

- Confusion During Evaluation: Make sure the confusion matrix you are reading belongs to the model you are evaluating, and cross-check it against that model’s accuracy and AUC.
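
The learning-rate tip can be illustrated with a toy problem. The sketch below runs plain gradient descent on f(w) = w² rather than on a real image model, so it only shows the general effect; the function name and the three learning rates are chosen purely for illustration.

```python
def gradient_descent(lr, steps=20, w0=1.0):
    """Minimize f(w) = w**2 with plain gradient descent; return the loss per step."""
    w = w0
    losses = [w * w]
    for _ in range(steps):
        grad = 2.0 * w      # derivative of w**2
        w -= lr * grad      # standard gradient descent update
        losses.append(w * w)
    return losses


for lr in (0.01, 0.1, 1.1):
    losses = gradient_descent(lr)
    trend = "decreasing" if losses[-1] < losses[0] else "diverging"
    print(f"lr={lr}: start loss {losses[0]:.3f}, final loss {losses[-1]:.3g} ({trend})")
```

The smallest rate makes slow progress, the middle one converges quickly, and the largest overshoots the minimum on every step so the loss grows. A real training run shows similar symptoms in its loss curve.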

Conclusion

By understanding the complexities of hair length classification and the models’ performance metrics, you can harness the power of deep learning to explore various creative domains. With the right practices, you too can build impressive models that recognize and classify intricacies in images, like those found in the anime world!