In the fast-moving world of artificial intelligence, keeping model performance stable can feel like walking a tightrope. New models often promise more stability, but it pays to understand how they behave before relying on them. In this article, we explore two highly experimental models, **mm-Stabilized_mid** and **mm-Stabilized_high**, and how they stack up against their base model.

Understanding the Models

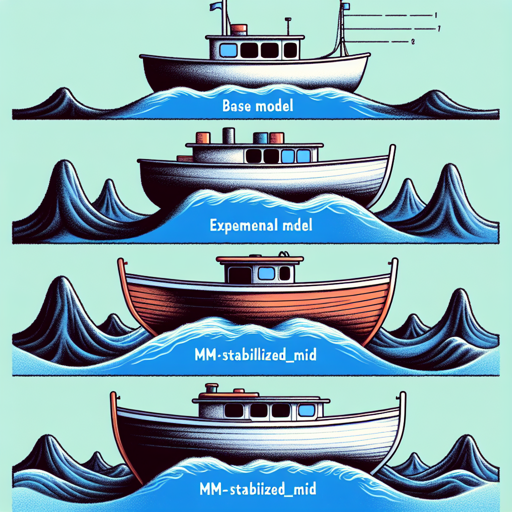

Before jumping into how to utilize these models, it’s essential to grasp what makes them tick. To put it simply, think of these models like boats on a vast ocean.

- Base Model: Imagine a standard boat, navigating the waves. It can move freely but gets tossed around by the waves, showcasing unstable performance.

- mm-Stabilized_mid: This boat has stabilizers installed, making it less susceptible to the turbulent sea. It still moves freely, but with more assurance.

- mm-Stabilized_high: Now, picture a sturdier boat that has even more stabilizers. However, the price to pay for this increased stability is that it loses some of its agility. The movements become more restricted, providing a smoother but less dynamic experience.

How to Utilize These Experimental Models

Using these models is fairly straightforward, and working through the following steps in order will help you get the most from them:

- **Select the Model**: Depending on your needs, you can experiment with either mm-Stabilized_mid for moderate stability or mm-Stabilized_high for maximum steadiness.

- **Prepare Your Data**: Ensure that your data is preprocessed adequately to avoid adding noise. Clean, concise data will help the model perform better.

- **Model Training**: Integrate the selected model using your preferred programming language or framework, then train it on your preprocessed data.

- **Evaluate Results**: After training, evaluate the model’s performance and compare it with the base model. In particular, check whether its results vary less from run to run than the base model’s do.

- **Iterate and Improve**: The experimental nature of these models means that tweaking and iteration might lead to better outcomes. Experiment with different parameters and data sets!
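
The evaluation step above can be sketched as a comparison of run-to-run spread. The snippet below is a minimal sketch, assuming you have already collected one score per run (for example, one per random seed) for each model. The function name `stability_report`, the use of standard deviation as a stand-in for "stability", and the score values are all illustrative assumptions, not measurements or an official metric for these models.

```python
from statistics import mean, stdev


def stability_report(scores_by_model):
    """Return the mean and sample standard deviation of scores per model.

    A lower standard deviation across runs is treated here as a simple
    proxy for stability. That choice is an assumption for illustration.
    """
    report = {}
    for name, scores in scores_by_model.items():
        if len(scores) < 2:
            raise ValueError(f"{name}: need at least two runs to measure spread")
        report[name] = {"mean": mean(scores), "std": stdev(scores)}
    return report


# Hypothetical placeholder scores, one per run. Replace with your own results.
example_scores = {
    "base": [0.70, 0.80, 0.60, 0.90],
    "mm-Stabilized_mid": [0.74, 0.76, 0.73, 0.77],
}

if __name__ == "__main__":
    for name, stats in stability_report(example_scores).items():
        print(f"{name}: mean={stats['mean']:.3f} std={stats['std']:.3f}")
```

Reporting the mean alongside the spread matters here: as the boat analogy suggests, a more stabilized model may trade some peak performance for steadier results, and looking at both numbers makes that trade-off visible.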

Troubleshooting Common Issues

While experimenting with new models, you may encounter some challenges along the way. Here are some troubleshooting tips:

- Model Overfitting: If you notice that your model performs exceptionally well on training data but poorly on new data, consider reducing the complexity of the model or increasing your dataset.

- Stability Issues: If the model exhibits unexpected behavior, it might be due to the dataset’s nature. Ensure your input is clean and representative of real-world scenarios.

- Performance Too Low: It could be beneficial to revisit your feature engineering process or modify hyperparameters. Sometimes, minor adjustments can lead to significant improvements.
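
The overfitting check described above can be sketched as a comparison between training and validation scores. This is a minimal sketch, assuming a metric where higher is better. The helper names `generalization_gap` and `looks_overfit` and the default threshold of 0.10 are hypothetical choices for illustration, so tune the threshold to your own metric and task.

```python
def generalization_gap(train_score, val_score):
    """Training score minus validation score (assumes higher is better)."""
    return train_score - val_score


def looks_overfit(train_score, val_score, max_gap=0.10):
    """Flag a run whose training score exceeds validation by more than max_gap.

    The 0.10 default is an arbitrary illustrative threshold, not a standard.
    """
    return generalization_gap(train_score, val_score) > max_gap
```

For example, `looks_overfit(0.99, 0.70)` returns `True`, while `looks_overfit(0.82, 0.80)` returns `False`.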

Conclusion

These experimental models offer a promising way to improve model stability, though you shouldn’t expect extraordinary results right away. By understanding the trade-off between stability and agility and applying the steps above, you can make the most of what mm-Stabilized_mid and mm-Stabilized_high offer.

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.