Welcome to the enthralling world of AI models! In this blog post, we will delve into the LayoutLMv2-finetuned-cord model, a tool for document understanding tasks that draws on both the text and the layout of a page. Let's walk through how to use it effectively.

What is LayoutLMv2-Finetuned-CORD?

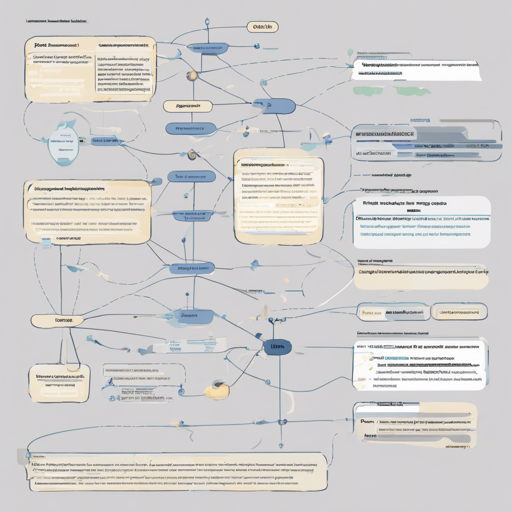

The LayoutLMv2-finetuned-cord model is a fine-tuned version of LayoutLMv2, a document understanding model developed by Microsoft. LayoutLMv2 processes a document's text, the position of each word on the page (its layout), and the page image together. Note, however, that the fine-tuning dataset is not specified. The name suggests the CORD receipt dataset, but that is not confirmed, so test the model on samples of your own documents before relying on it.

How to Use the LayoutLMv2-Finetuned-CORD Model

Using this model effectively can be likened to navigating a well-structured library. Imagine that each section of the library represents a specific document skill, such as extracting text or understanding context. The fine-tuned LayoutLMv2 acts as your librarian, equipped with the knowledge to help you locate the right information quickly and efficiently.

Step-by-Step Guide

- Step 1: Install the Required Libraries

Before venturing into the world of LayoutLMv2, ensure that you have the necessary libraries to support your journey:

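```bash
pip install transformers torch datasets tokenizers pytesseract pillow
pip install 'git+https://github.com/facebookresearch/detectron2.git'
```

LayoutLMv2 depends on detectron2 for its visual backbone, and the processor's default OCR calls Tesseract through pytesseract, so the Tesseract binary must also be installed on your system.

- Step 2: Load the Model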

Once your libraries are in place, load the model using the Transformers library:

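```python
from transformers import LayoutLMv2Processor, LayoutLMv2ForTokenClassification

# The processor bundles image preprocessing (with optional Tesseract OCR) and the tokenizer
processor = LayoutLMv2Processor.from_pretrained("microsoft/layoutlmv2-base-uncased")
# Replace with the local path or Hub ID of the fine-tuned checkpoint
model = LayoutLMv2ForTokenClassification.from_pretrained("your-model-directory")
```

- Step 3: Prepare Your Documents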

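Each document must be converted into the inputs the model expects: the page image (loaded as RGB), the words on the page, and one bounding box per word, with coordinates normalized to a 0-1000 scale. By default, LayoutLMv2Processor runs Tesseract OCR on the image to produce the words and boxes for you. Think of this as organizing the library books before showcasing them.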
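If you run your own OCR instead of the built-in one, you have to normalize the pixel boxes yourself before passing `words` and `boxes` to the processor (with OCR disabled via `apply_ocr=False` on its image processor). Below is a minimal sketch of the usual LayoutLM normalization, which scales each coordinate by 1000 over the page width or height. The function name `normalize_box` and the clamping to [0, 1000] are our own illustrative choices, not part of the Transformers API.

```python
def normalize_box(box, width, height):
    """Scale a pixel box (x0, y0, x1, y1) to the 0-1000 range LayoutLM models expect."""

    def scale(value, size):
        # Clamp so slightly out-of-bounds OCR boxes stay inside [0, 1000].
        return max(0, min(1000, int(1000 * value / size)))

    x0, y0, x1, y1 = box
    return [scale(x0, width), scale(y0, height), scale(x1, width), scale(y1, height)]


# Example: a word box on a 500 x 1000 pixel page.
print(normalize_box((50, 100, 250, 140), width=500, height=1000))  # [100, 100, 500, 140]
```

Each entry in your `words` list then pairs with the normalized box at the same index in `boxes`.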

- Step 4: Make Predictions

Pass the prepared inputs through the model to get per-token logits, then take the highest-scoring class for each token and map it to a label name with model.config.id2label. This step may feel like consulting the librarian about specific topics of interest.
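A small amount of post-processing turns token-level logits into one label per word. The sketch below assumes you have `logits` as a nested list (for example `model(**encoding).logits[0].tolist()`) and `word_ids` from `encoding.word_ids()`, which requires the fast tokenizer. The helper name `logits_to_word_labels`, the "first sub-token decides" rule, and the toy labels are illustrative assumptions, not part of the model's documentation.

```python
def logits_to_word_labels(logits, word_ids, id2label):
    """Turn token-level logits into one label per word, using each word's first sub-token."""
    word_labels = {}
    for token_logits, word_id in zip(logits, word_ids):
        # Special tokens such as [CLS], [SEP] and padding have no word id.
        if word_id is None or word_id in word_labels:
            continue
        # Index of the highest-scoring class for this token (argmax).
        best = max(range(len(token_logits)), key=lambda i: token_logits[i])
        word_labels[word_id] = id2label[best]
    return [word_labels[w] for w in sorted(word_labels)]


# Toy example with made-up logits and hypothetical labels.
toy_logits = [[0.1, 0.9], [2.0, 0.0], [0.0, 3.0], [0.1, 0.5], [0.7, 0.2]]
toy_word_ids = [None, 0, 0, 1, None]
toy_id2label = {0: "O", 1: "B-TOTAL"}
print(logits_to_word_labels(toy_logits, toy_word_ids, toy_id2label))  # ['O', 'B-TOTAL']
```

With the real model, pass `model.config.id2label` as the third argument so the label names come from the fine-tuned checkpoint.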

Training Hyperparameters

These are the hyperparameters used during fine-tuning. They do not measure performance directly, but they are useful if you want to reproduce or continue the training:

- Learning Rate: 5e-05

- Train Batch Size: 8

- Eval Batch Size: 8

- Seed: 42

- Optimizer: Adam with betas=(0.9, 0.999) and epsilon=1e-08

- Learning Rate Scheduler Type: Linear

- Warmup Ratio: 0.1

- Training Steps: 15
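To make the scheduler settings concrete, the sketch below computes the learning rate at each of the 15 steps. It reimplements in plain Python what we understand to be the linear schedule in Transformers (`get_linear_schedule_with_warmup`, with the warmup length rounded up from the warmup ratio); treat it as an illustration rather than the library's own code. If you retrain with the Trainer, the values above correspond to `TrainingArguments` fields such as `learning_rate`, `per_device_train_batch_size`, `seed`, `warmup_ratio`, `lr_scheduler_type` and `max_steps`.

```python
import math


def linear_warmup_lr(step, base_lr=5e-5, total_steps=15, warmup_ratio=0.1):
    """Learning rate at `step` under linear warmup followed by linear decay to zero."""
    # Warmup length is rounded up; with the defaults, ceil(15 * 0.1) = 2 steps.
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        factor = step / max(1, warmup_steps)
    else:
        factor = max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))
    return base_lr * factor


for step in range(16):
    print(step, f"{linear_warmup_lr(step):.2e}")
```

Under these assumptions, the rate climbs from 0 to 5e-05 over the first two steps and then decays linearly back to 0 by step 15.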

Troubleshooting Tips

Just like a library occasionally faces challenges, so might you while using this model. Here are some troubleshooting tips:

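- Model Loading Issues: If the model fails to load, make sure your libraries are up to date, check that the model directory (or Hub ID) is correct and contains the model files, and confirm that detectron2 is installed, since LayoutLMv2 needs it for its visual backbone.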

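- Slow Performance: Run inference on a GPU if one is available, disable gradient tracking during inference (torch.no_grad()), and tune the batch size to fit your hardware. The built-in Tesseract OCR can also add noticeable time per page.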

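- Unexpected Outputs: If the model's predictions don't match your expectations, check the OCR quality of your inputs and confirm that the bounding boxes are normalized to the 0-1000 range. Keep in mind that the model was fine-tuned for only 15 steps on an unspecified dataset, so it may not generalize well to your documents.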
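For loading problems, a quick environment check can rule out the most common causes. This is a minimal sketch using only the Python standard library; the helper names and the package list are our own assumptions about a typical LayoutLMv2 setup. Note that finding `pytesseract` does not guarantee the Tesseract binary itself is installed.

```python
import importlib.util
import os

# Packages commonly needed to run LayoutLMv2 with the built-in OCR.
# detectron2 powers the visual backbone; pytesseract wraps the Tesseract binary.
REQUIRED_PACKAGES = ["transformers", "torch", "detectron2", "pytesseract", "PIL"]


def missing_packages(names=REQUIRED_PACKAGES):
    """Return the package names in `names` that cannot be found for import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def looks_like_model_dir(path):
    """Return True if `path` is a local folder containing a Transformers config.json."""
    return os.path.isfile(os.path.join(path, "config.json"))


print("Missing packages:", missing_packages() or "none")
print("Model directory OK:", looks_like_model_dir("your-model-directory"))
```

If you load the model by Hub ID rather than a local folder, skip the directory check.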

For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.

Conclusion

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.

Final Thoughts

Remember, using the LayoutLMv2-finetuned-cord model effectively can enhance your ability to extract and understand document-related information. Approach it like a library, where organization and clarity lead to optimal outcomes! Happy modeling!