Are you curious about how to create a paper title generator using the revolutionary Transformer architecture? Look no further! In this guide, we will walk you through the process of building a simple yet powerful model designed for generating titles for computer science papers based on their abstracts. We’ll ensure the instructions are straightforward, user-friendly, and equipped with troubleshooting tips to help you along the way.

Understanding the Transformer Architecture

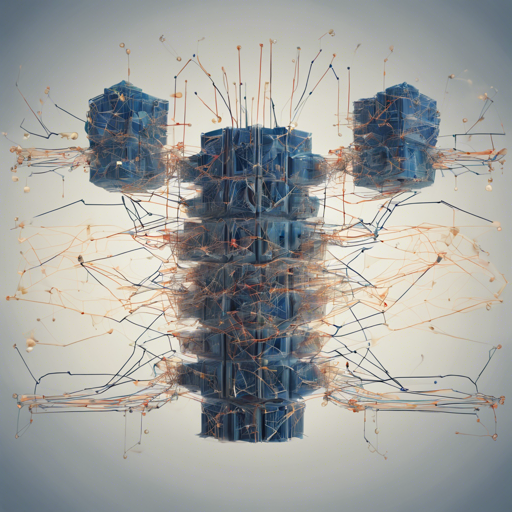

The Transformer model has taken the world of natural language processing (NLP) by storm. Think of it as a high-speed train for information. Earlier sequence models either processed text one step at a time, like a train stopping at every station (recurrence), or relied on stacks of local filters, like a complex network of tracks (convolutions). The Transformer dispenses with both and relies entirely on attention mechanisms. These let every position in a sequence look directly at every other position, so the whole sequence can be processed in parallel. In our task, an encoder reads the abstract and a decoder writes the title.

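- Encoder: Reads the input (the abstract of the paper) and turns each token into a context-aware representation.

- Attention Mechanisms: Let the model weigh which parts of the input matter most. Self-attention relates tokens within the abstract (or within the partial title), and cross-attention lets the decoder focus on the relevant sections of the abstract while predicting each title word.

- Decoder: Generates the title one token at a time, conditioned on the encoder's representations and on the words it has produced so far.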
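To make the attention idea concrete, here is a minimal pure-Python sketch of scaled dot-product attention, softmax(QKᵀ/√d_k)·V, the core operation of the Transformer. The function names and toy vectors are illustrative assumptions. Real models such as BERT apply multi-head attention to learned projections of much larger tensors on a GPU, so treat this only as a picture of the arithmetic.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Return softmax(Q K^T / sqrt(d_k)) V for small list-of-lists matrices.

    q: one row per query position (e.g. a decoder position)
    k, v: one row per key/value position (e.g. encoder positions)
    """
    d_k = len(k[0])
    scale = math.sqrt(d_k)
    output = []
    for q_row in q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / scale for k_row in k]
        weights = softmax(scores)  # attention weights, summing to 1
        # Output is the attention-weighted sum of the value vectors.
        output.append(
            [sum(w * v_row[j] for w, v_row in zip(weights, v)) for j in range(len(v[0]))]
        )
    return output


if __name__ == "__main__":
    keys = [[1.0, 0.0], [0.0, 1.0]]      # two toy "abstract" positions
    values = [[10.0, 0.0], [0.0, 10.0]]
    query = [[5.0, 0.0]]                 # one toy "title" position, similar to the first key
    print(scaled_dot_product_attention(query, keys, values))
```

Because the query is most similar to the first key, the output (roughly [9.72, 0.28]) is dominated by the first value vector; this is the "focus on relevant sections" behavior described above.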

Implementation Steps

Now let’s break down the steps to create your own paper title generation model.

- Data Collection: Gather pairs of abstracts and titles from computer science papers. Repositories such as arXiv.org offer a wealth of relevant papers.

- Model Selection: Use a BERT2BERT encoder-decoder model, in which both the encoder and the decoder are initialized from the official bert-base-uncased checkpoint.

- Fine-Tuning: Fine-tune the model on a large set of abstract-title pairs. In our case, we fine-tuned it on 318,500 computer science papers, reaching a ROUGE-2 F1 score of 26.3% on the validation data.

- Testing: Validate your model on held-out data to ensure its title generation capabilities are up to par.

- Deployment: Once your model is fine-tuned, you can deploy it, for example as a live demo. Check out ours here: Live Demo.
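The data collection and testing steps can be sketched as follows, assuming the abstracts and titles have already been collected (for example from arXiv.org) into a list of dicts with hypothetical `abstract` and `title` keys. The whitespace normalization, exact-duplicate removal, 10% validation fraction, and seed are illustrative choices, not the settings behind the results reported above.

```python
import random
from typing import Dict, List, Tuple

Pair = Dict[str, str]


def clean_pairs(records: List[Pair]) -> List[Pair]:
    """Normalize whitespace, drop incomplete records, and skip exact duplicates."""
    cleaned: List[Pair] = []
    seen = set()
    for rec in records:
        abstract = " ".join(rec.get("abstract", "").split())
        title = " ".join(rec.get("title", "").split())
        if not abstract or not title:
            continue  # a training pair needs both sides
        if (abstract, title) in seen:
            continue  # duplicates could leak between train and validation
        seen.add((abstract, title))
        cleaned.append({"abstract": abstract, "title": title})
    return cleaned


def train_validation_split(
    pairs: List[Pair], val_fraction: float = 0.1, seed: int = 0
) -> Tuple[List[Pair], List[Pair]]:
    """Shuffle deterministically and hold out a fraction of pairs for validation."""
    shuffled = pairs[:]
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, int(len(shuffled) * val_fraction)) if shuffled else 0
    return shuffled[n_val:], shuffled[:n_val]


if __name__ == "__main__":
    records = [
        {"abstract": "We study  attention.\n", "title": "Attention Study"},
        {"abstract": "", "title": "No Abstract"},
        {"abstract": "We study  attention.", "title": "Attention Study"},
    ] + [{"abstract": f"Abstract {i}", "title": f"Title {i}"} for i in range(20)]
    pairs = clean_pairs(records)
    train, val = train_validation_split(pairs)
    print(len(pairs), len(train), len(val))  # 21 19 2
```

Fixing the seed keeps the held-out set stable across runs, so validation scores from different experiments stay comparable.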
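The ROUGE-2 F1 score used in the fine-tuning step measures bigram overlap between a generated title and the reference title. Below is a simplified sketch that lowercases and splits on whitespace, with no stemming or punctuation handling, so its numbers will not necessarily match standard ROUGE implementations; the function names are illustrative.

```python
from collections import Counter
from typing import List


def bigrams(tokens: List[str]) -> Counter:
    """Count adjacent token pairs."""
    return Counter(zip(tokens, tokens[1:]))


def rouge2_f1(candidate: str, reference: str) -> float:
    """Simplified ROUGE-2 F1 with clipped bigram counts."""
    cand = bigrams(candidate.lower().split())
    ref = bigrams(reference.lower().split())
    overlap = sum((cand & ref).values())  # Counter '&' keeps the minimum count per bigram
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


def corpus_rouge2_f1(candidates: List[str], references: List[str]) -> float:
    """Average the per-title F1 over a validation set."""
    scores = [rouge2_f1(c, r) for c, r in zip(candidates, references)]
    return sum(scores) / len(scores) if scores else 0.0


if __name__ == "__main__":
    print(rouge2_f1("attention is all you need", "attention is what you need"))  # 0.5
```

Here two of the four bigrams match on each side, so precision and recall are both 0.5. Averaging per-title scores is one common way to report a corpus-level figure.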

Troubleshooting Tips

Even the best models can encounter bumps along the journey. Here are some troubleshooting ideas to help you smooth out any issues that might arise:

- Model Performance: If the generated titles aren’t relevant, consider fine-tuning the model on a more curated dataset or experimenting with different hyperparameters.

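- Training Time: If training seems slow, make sure you are training on GPUs and, for a dataset of this size, consider a properly configured multi-GPU setup. Because the Transformer has no recurrence, it processes all positions of a sequence in parallel, which makes it well suited to this kind of hardware.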

- Data Requirements: If you have limited training data, supplement your dataset with other relevant sources, or lean further on transfer learning by starting from a pretrained checkpoint such as bert-base-uncased rather than training from scratch.

For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.

Conclusion

In summary, the Transformer architecture offers an effective and efficient approach to generating titles for academic papers. By following the steps outlined above, you can build a model that produces relevant titles from paper abstracts.

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.