In this article, we walk through how to use the Swin Transformer model for image classification, step by step. The Swin Transformer is an efficient, general-purpose vision model that builds hierarchical feature maps, which lets it handle high-resolution images at a manageable computational cost. Let’s dive in!

What is the Swin Transformer?

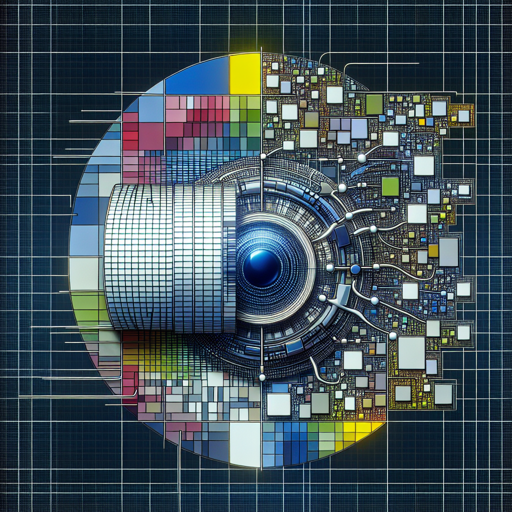

The Swin Transformer, short for Shifted Window Transformer, is a vision model that changed how Transformers are applied to image classification. Imagine examining a very large image: instead of comparing every region with every other region at once (which quickly becomes expensive), the Swin Transformer splits the image into small patches, groups them into local windows, and computes self-attention only within each window. Shifting the window grid between successive layers lets information flow across window boundaries, and merging neighboring patches at deeper stages produces the hierarchical feature maps. This keeps the computation manageable while still capturing the image as a whole.

In simpler terms, think of the Swin Transformer as a master detective who initially examines small clues (image patches) and gradually pieces together the bigger picture without getting bogged down by unnecessary details.
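
To make the "local windows" idea concrete, here is a minimal pure-Python sketch of window partitioning and the shifted-window step on a toy 4x4 grid. The helper names `partition_windows` and `cyclic_shift` are made up for this illustration and are not part of any library; the real model works on tensors, uses 4x4 patches and 7x7 windows (as the checkpoint name used later suggests), and also applies attention masks that this sketch leaves out.

```python
def partition_windows(grid, window):
    """Split a square 2D grid into non-overlapping window x window blocks."""
    size = len(grid)
    assert size % window == 0, "grid side must be divisible by the window size"
    windows = []
    for top in range(0, size, window):
        for left in range(0, size, window):
            block = [row[left:left + window] for row in grid[top:top + window]]
            windows.append(block)
    return windows


def cyclic_shift(grid, shift):
    """Roll the grid up and to the left by `shift` cells, wrapping around."""
    rolled_rows = grid[shift:] + grid[:shift]
    return [row[shift:] + row[:shift] for row in rolled_rows]


# A toy 4x4 "feature map" whose cells are numbered 0..15 (hypothetical data).
grid = [[r * 4 + c for c in range(4)] for r in range(4)]

# Regular layer: attention would be computed inside each 2x2 window.
regular = partition_windows(grid, window=2)

# Shifted layer: roll the grid by half a window, then partition again.
shifted = partition_windows(cyclic_shift(grid, shift=1), window=2)

print(regular[0])  # [[0, 1], [4, 5]]
print(shifted[0])  # [[5, 6], [9, 10]]
```

The first shifted window contains cells 5, 6, 9 and 10, one from each of the four regular windows, which is how neighboring windows get to exchange information from one layer to the next.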

Before You Start

Before using the Swin Transformer model, make sure the following libraries are installed:

- transformers

- torch (PyTorch, required because the example requests PyTorch tensors)

- PIL (Pillow)

- requests
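
As a quick sanity check before running the example, the sketch below reports which of these packages are present in the current environment. It uses only the standard library; the `installed_versions` helper is a made-up name, the list uses PyPI distribution names (so `Pillow` rather than `PIL`), and `torch` is included on the assumption that you use the PyTorch backend.

```python
from importlib import metadata

# Distribution names as published on PyPI. "torch" is an assumption:
# it is needed when the example asks for PyTorch tensors.
REQUIRED = ["transformers", "Pillow", "requests", "torch"]


def installed_versions(packages):
    """Map each distribution name to its installed version, or None if missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for name, version in installed_versions(REQUIRED).items():
    status = version if version is not None else f"missing, try: pip install {name}"
    print(f"{name}: {status}")
```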

Step-by-Step Guide to Using the Swin Transformer

Here’s how you can classify an image from the COCO 2017 dataset into one of the 1,000 ImageNet classes using Python:

```python
import requests
import torch
from PIL import Image
from transformers import AutoImageProcessor, SwinForImageClassification

# Load an image from the COCO dataset
url = "http://images.cocodataset.org/val2017/000000000039.jpg"
image = Image.open(requests.get(url, stream=True).raw).convert("RGB")

# Load the image processor and the model
checkpoint = "microsoft/swin-base-patch4-window7-224"
processor = AutoImageProcessor.from_pretrained(checkpoint)
model = SwinForImageClassification.from_pretrained(checkpoint)

# Prepare the image for the model
inputs = processor(images=image, return_tensors="pt")

# Get model predictions without tracking gradients (inference only)
with torch.no_grad():
    outputs = model(**inputs)
logits = outputs.logits

# The model predicts one of the 1,000 ImageNet classes
predicted_class_idx = logits.argmax(-1).item()
print("Predicted class:", model.config.id2label[predicted_class_idx])
```

This code retrieves an image, prepares it with the Swin Transformer’s image processor, and then classifies it into one of the 1,000 ImageNet classes.
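
A single predicted label hides how confident the model is. As a possible extension, the sketch below turns raw logits into probabilities with a softmax and lists the top few classes. It is written in plain Python so it runs on its own; `top_k_predictions` is a hypothetical helper, and the three logits and labels are made-up stand-ins for the real 1,000-class output.

```python
import math


def top_k_predictions(logits, id2label, k=5):
    """Return the k most likely (label, probability) pairs from raw logits."""
    # Softmax, subtracting the maximum first for numerical stability.
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    probs = [value / total for value in exps]
    # Rank class indices by probability, highest first.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(id2label[i], probs[i]) for i in ranked[:k]]


# Toy stand-ins (made up): three logits and labels instead of the real 1,000.
toy_logits = [2.0, 0.5, 1.0]
toy_labels = {0: "tabby cat", 1: "remote control", 2: "sofa"}

for label, prob in top_k_predictions(toy_logits, toy_labels, k=2):
    print(f"{label}: {prob:.3f}")
```

With the objects from the example above, the equivalent call would be along the lines of `top_k_predictions(logits[0].detach().tolist(), model.config.id2label)`.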

Troubleshooting Tips

If you encounter issues while implementing the Swin Transformer, here are some troubleshooting suggestions:

- Ensure your internet connection is stable when loading models and images from URLs.

- Check that all necessary libraries are correctly installed and up-to-date.

- If you receive a “model not found” error, confirm that you typed the model name correctly when loading it.

- If you get errors or unexpected outputs, verify that the image is in a format PIL supports (such as JPG or PNG) and convert it to RGB, since the model expects three-channel input.
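
One common cause of image-loading failures is a URL that returns an error page instead of an image. A minimal way to catch this, sketched below under the assumption that you have the downloaded bytes at hand, is to check the file signature before passing the data to PIL. The `sniff_image_format` helper is a made-up name and covers only the two formats mentioned above.

```python
PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"  # first 8 bytes of every PNG file
JPEG_SIGNATURE = b"\xff\xd8\xff"      # start-of-image marker, then the next marker's first byte


def sniff_image_format(data):
    """Guess the image format from the leading bytes; None if unrecognized."""
    if data.startswith(PNG_SIGNATURE):
        return "PNG"
    if data.startswith(JPEG_SIGNATURE):
        return "JPEG"
    return None


# An HTML error page returned in place of an image is not recognized.
print(sniff_image_format(b"<html><body>404 Not Found</body></html>"))  # None
print(sniff_image_format(PNG_SIGNATURE + b"rest of file"))  # PNG
```

In the example above, the bytes could come from `requests.get(url, timeout=10).content`; a result of `None` suggests the download did not return a JPG or PNG image.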

Conclusion

The Swin Transformer is an impressive tool for image classification. By restricting self-attention to local windows and building up hierarchical feature maps, it processes visual data efficiently and offers a robust backbone for a variety of image-based tasks.

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.