In Natural Language Processing (NLP), compact models like DistilBERT have changed how we approach tasks such as zero-shot classification. This blog post walks you through converting the base DistilBERT model into one fine-tuned on the Multi-Genre Natural Language Inference (MNLI) dataset. A model fine-tuned this way can classify text against labels it has not seen during training, without any retraining.

Understanding the Model

The model is uncased: it lowercases its input, so it treats ‘english’ and ‘English’ the same. Think of it as a librarian who finds the right book regardless of how the title is capitalized on the cover. This case-insensitivity keeps the model robust to inconsistent capitalization, which is useful in diverse applications, including zero-shot classification.
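To make the uncased behaviour concrete, here is a minimal sketch of the kind of normalization an uncased BERT-style tokenizer applies before splitting text into word pieces: lowercasing and accent stripping. The function name `uncased_normalize` is hypothetical and the sketch uses only the Python standard library; it approximates the idea and is not the tokenizer's actual implementation.

```python
import unicodedata


def uncased_normalize(text: str) -> str:
    """Lowercase the text and drop combining accent marks (illustrative only)."""
    lowered = text.lower()
    # NFD splits an accented character into a base letter plus combining marks.
    decomposed = unicodedata.normalize("NFD", lowered)
    # Category "Mn" (nonspacing mark) covers the combining accents we drop.
    return "".join(ch for ch in decomposed if unicodedata.category(ch) != "Mn")


# Both spellings collapse to the same string before tokenization.
print(uncased_normalize("English") == uncased_normalize("english"))
print(uncased_normalize("Café"))
```

Because both spellings reduce to the same string, the model receives identical input for ‘english’ and ‘English’.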

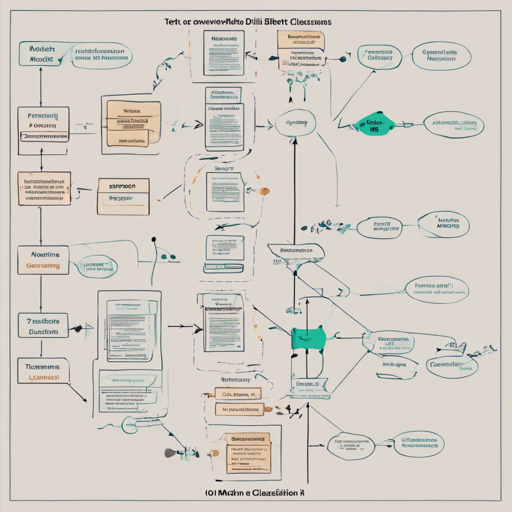
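Zero-shot classification with an MNLI model is usually framed as entailment: the input text is the premise, each candidate label is turned into a hypothesis, and the label whose hypothesis is most strongly entailed wins. The sketch below shows only that framing. The template string, the function names, and especially `toy_entailment_score` (a word-overlap stand-in for a real model call) are assumptions made for illustration.

```python
import math
from typing import Callable, Dict, List, Tuple

# Assumed template; any sentence with a slot for the label would work.
HYPOTHESIS_TEMPLATE = "This example is {}."


def build_pairs(text: str, labels: List[str]) -> List[Tuple[str, str]]:
    """Pair the premise with one hypothesis per candidate label."""
    return [(text, HYPOTHESIS_TEMPLATE.format(label)) for label in labels]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def zero_shot_classify(
    text: str,
    labels: List[str],
    entailment_score: Callable[[str, str], float],
) -> Dict[str, float]:
    """Score each label's hypothesis and normalize the scores across labels."""
    scores = [entailment_score(p, h) for p, h in build_pairs(text, labels)]
    return dict(zip(labels, softmax(scores)))


def toy_entailment_score(premise: str, hypothesis: str) -> float:
    """Hypothetical stand-in: counts shared words instead of calling a model."""
    premise_words = set(premise.lower().split())
    hypothesis_words = set(hypothesis.lower().rstrip(".").split())
    return float(len(premise_words & hypothesis_words))


result = zero_shot_classify(
    "The team won the football match", ["football", "cooking"], toy_entailment_score
)
print(result)
```

In a real setup, the stand-in scorer would be replaced by the entailment logit produced by the fine-tuned model for each premise-hypothesis pair.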

Steps to Convert the DistilBERT Model

Here’s a straightforward guide to converting the DistilBERT model:

- Step 1: Ensure you have access to the appropriate libraries and dependencies.

- Step 2: Use an AWS EC2 instance, specifically a p3.2xlarge, whose single NVIDIA V100 GPU provides ample computational power for model training.

- Step 3: Set the hyperparameters for fine-tuning the DistilBERT model. Here’s a sample command:

run_glue.py --model_name_or_path distilbert-base-uncased --task_name mnli --do_train --do_eval --max_seq_length 128 --per_device_train_batch_size 16 --learning_rate 2e-5 --num_train_epochs 5 --output_dir tmp/distilbert-base-uncased_mnli

Evaluation of the Model

After training the model, it’s time to evaluate its performance. On the MNLI task, evaluation typically yields an accuracy of about 82.0%, meaning the model assigns the correct label (entailment, neutral, or contradiction) to roughly four out of five premise-hypothesis pairs.
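Accuracy here is simply the fraction of examples whose predicted label matches the reference label. The following sketch shows the computation on a handful of made-up predictions; the data and the helper name `accuracy` are illustrative assumptions, not output from the actual evaluation run.

```python
from typing import Sequence


def accuracy(predictions: Sequence[str], references: Sequence[str]) -> float:
    """Return the fraction of predictions that equal their reference label."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("cannot compute accuracy on an empty set")
    correct = sum(p == r for p, r in zip(predictions, references))
    return correct / len(references)


# Made-up labels for illustration only.
preds = ["entailment", "neutral", "contradiction", "neutral", "entailment"]
golds = ["entailment", "neutral", "contradiction", "entailment", "entailment"]
print(f"{accuracy(preds, golds):.1%}")  # 4 of 5 correct
```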

Troubleshooting Tips

If you encounter issues during the conversion or training process, consider the following troubleshooting steps:

- Check Dependencies: Ensure all required libraries and frameworks are correctly installed.

- Adjust Hyperparameters: If you receive errors or poor evaluation results, tweaking hyperparameters like batch size or learning rate may help.

- Monitor System Resources: Make sure your AWS instance has enough resources available during training; resource strain can lead to failed operations.

- Logging: Review the logs generated during training to pinpoint where issues may arise.
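The dependency check in the first tip can be automated with a few lines of Python. This is a minimal sketch: the package list (`torch`, `transformers`, `datasets`) is an assumption about what a typical GLUE fine-tuning setup needs, so adjust it to match your environment. The helper name `missing_packages` is hypothetical.

```python
import importlib.util
from typing import Iterable, List

# Assumed package list; edit to match your own training environment.
REQUIRED_PACKAGES = ("torch", "transformers", "datasets")


def missing_packages(names: Iterable[str]) -> List[str]:
    """Return the top-level package names that cannot be found for import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


missing = missing_packages(REQUIRED_PACKAGES)
if missing:
    print("Missing packages:", ", ".join(missing))
else:
    print("All required packages are installed.")
```

Running this before launching training surfaces a missing library immediately, rather than partway through a long job.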


Conclusion

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.