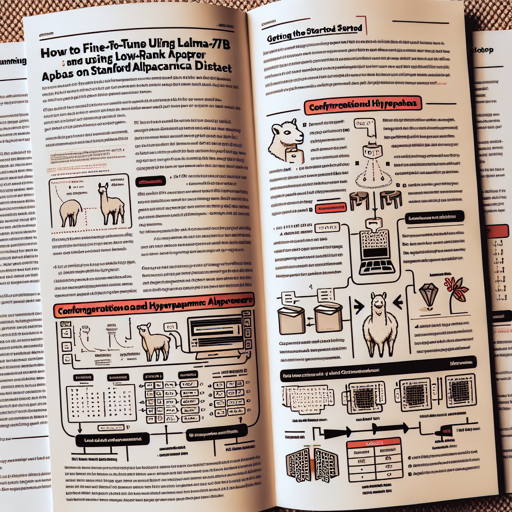

In this post, we’ll walk through fine-tuning the LLaMA-7B model with low-rank adapters (LoRA), trained on the Stanford Alpaca dataset. Because LoRA trains only small adapter matrices while the pre-trained weights stay frozen, it adapts a model to new tasks with far less compute and memory than full fine-tuning. Let’s dive in!

Getting Started

Before you get started, ensure you have the necessary dependencies and a suitable environment to run the training process. The required libraries and software can typically be found in the official documentation.

Configuration and Hyperparameters

Here are the key hyperparameters you’ll be using to fine-tune the model:

- Epochs: 10 (the final weights are loaded from the best epoch)

- Batch size: 128

- Cutoff length: 512

- Learning rate: 3e-4

- LoRA r: 16

- LoRA target modules: q_proj, k_proj, v_proj, o_proj
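
To make these numbers concrete, here is a rough sketch of what they imply. It assumes the batch size of 128 is reached by accumulating gradients over micro-batches of 8 (the `micro_batch_size` used in the training command), and it assumes LLaMA-7B's commonly cited shape of 32 decoder layers with a hidden size of 4096, where each targeted projection is a square 4096 x 4096 layer. The helper `lora_params_per_module` is a name made up for this illustration.

```python
# Hyperparameters taken from the list above.
batch_size = 128        # effective batch size per optimizer step
micro_batch_size = 8    # per-step batch size that actually sits on the GPU
lora_r = 16
target_modules = ["q_proj", "k_proj", "v_proj", "o_proj"]

# Assumption: the effective batch is built up through gradient accumulation.
grad_accum_steps = batch_size // micro_batch_size

# Assumption: LLaMA-7B has 32 decoder layers and a hidden size of 4096.
hidden_size = 4096
num_layers = 32


def lora_params_per_module(d_in: int, d_out: int, r: int) -> int:
    """A LoRA adapter adds two matrices: A (r x d_in) and B (d_out x r)."""
    return r * d_in + d_out * r


per_module = lora_params_per_module(hidden_size, hidden_size, lora_r)
trainable = per_module * len(target_modules) * num_layers

print(f"gradient accumulation steps: {grad_accum_steps}")
print(f"trainable LoRA parameters:   {trainable:,}")
```

Under these assumptions the adapters add roughly 16.8 million trainable parameters, a small fraction of the base model's roughly 7 billion weights.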

Running the Fine-Tuning Process

Now, it’s time to fine-tune the model. You will use the following command:

    python finetune.py \
        --base_model=decapoda-research/llama-7b-hf \
        --num_epochs=10 \
        --cutoff_len=512 \
        --group_by_length \
        --output_dir=./lora-alpaca-512-qkvo \
        --lora_target_modules='[q_proj,k_proj,v_proj,o_proj]' \
        --lora_r=16 \
        --micro_batch_size=8

This command is like preparing a soufflé: just as measuring the ingredients precisely is crucial for a fluffy outcome, setting the right parameters is essential for good model performance. Each parameter plays a specific role in determining how well the model learns from the data.
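
Two of the flags, `--cutoff_len` and `--group_by_length`, are easier to reason about with a toy example. The sketch below is a simplification, not the script's actual implementation: it truncates hypothetical token sequences to the cutoff length, then compares how many padding tokens are needed when batches are formed in arrival order versus when examples of similar length are batched together. The example lengths and the helper names `truncate` and `padding_needed` are invented for illustration.

```python
from typing import List


def truncate(tokens: List[int], cutoff_len: int) -> List[int]:
    """Keep at most cutoff_len tokens, as a cutoff length would."""
    return tokens[:cutoff_len]


def padding_needed(lengths: List[int], batch_size: int) -> int:
    """Total pad tokens when each batch is padded to its longest member."""
    total = 0
    for i in range(0, len(lengths), batch_size):
        batch = lengths[i:i + batch_size]
        total += sum(max(batch) - n for n in batch)
    return total


# Hypothetical tokenized example lengths (not taken from the Alpaca dataset).
raw_lengths = [700, 30, 512, 45, 300, 60, 480, 20]
cutoff_len = 512

# Apply the cutoff: the 700-token example is cut down to 512 tokens.
lengths = [len(truncate(list(range(n)), cutoff_len)) for n in raw_lengths]

# Batches in arrival order versus batches of similar-length examples.
unsorted_pad = padding_needed(lengths, batch_size=4)
grouped_pad = padding_needed(sorted(lengths), batch_size=4)

print(f"padding tokens, arrival order:     {unsorted_pad}")
print(f"padding tokens, grouped by length: {grouped_pad}")
```

Grouping by length wastes far fewer tokens on padding in this toy case, which is the motivation for the flag. Real length-grouped samplers typically keep some randomness rather than fully sorting the dataset.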

Troubleshooting Tips

If you encounter issues during the fine-tuning process, consider the following troubleshooting strategies:

- Python Errors: Ensure that your Python environment has all the necessary packages installed.

- Memory Issues: If your GPU runs out of memory, try reducing the micro_batch_size parameter.

- Model Not Learning: Double-check the learning rate; sometimes, adjusting this can vastly improve performance.

- Output Directory Problems: Ensure you have write permissions for the directory specified in --output_dir.
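
Several of these checks can be run before launching training. The following is a minimal pre-flight sketch using only the standard library. The package list is an assumption about what a typical LoRA fine-tuning script imports, so adjust it to your setup, and the helper names are hypothetical. The last helper shows one way to halve the micro-batch size after an out-of-memory error while keeping the effective batch size unchanged, assuming gradient accumulation makes up the difference.

```python
import importlib.util
import os
import tempfile
from typing import List, Tuple


def missing_packages(names: List[str]) -> List[str]:
    """Return the top-level packages that cannot be found in this environment."""
    return [n for n in names if importlib.util.find_spec(n) is None]


def can_write(directory: str) -> bool:
    """Create the directory if needed and try writing a temporary file into it."""
    try:
        os.makedirs(directory, exist_ok=True)
        with tempfile.TemporaryFile(dir=directory):
            pass
        return True
    except OSError:
        return False


def halve_micro_batch(batch_size: int, micro_batch_size: int) -> Tuple[int, int]:
    """Halve the micro-batch size and return it with the accumulation steps
    needed to keep the same effective batch size."""
    new_micro = max(1, micro_batch_size // 2)
    return new_micro, batch_size // new_micro


if __name__ == "__main__":
    # Assumption: packages a LoRA fine-tuning script commonly depends on.
    print("missing packages:", missing_packages(["torch", "transformers", "peft", "datasets"]))
    # Replace the temporary directory with the value you pass to --output_dir.
    print("output dir writable:", can_write(tempfile.mkdtemp()))
    print("after an OOM, try (micro_batch_size, accumulation steps):", halve_micro_batch(128, 8))
```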


Conclusion

We have walked you through the essential steps to fine-tune LLaMA-7B using low-rank adapters on the Stanford Alpaca dataset. With practice, you’ll master this process in no time!
