Interested in building your own speech synthesis projects? Meet CRUST, a multispeaker model that stands out by synthesizing speech from as little as one minute of training data. In this article, we explore how CRUST works, how it was trained, where it falls short, and how to troubleshoot common problems.

What is CRUST?

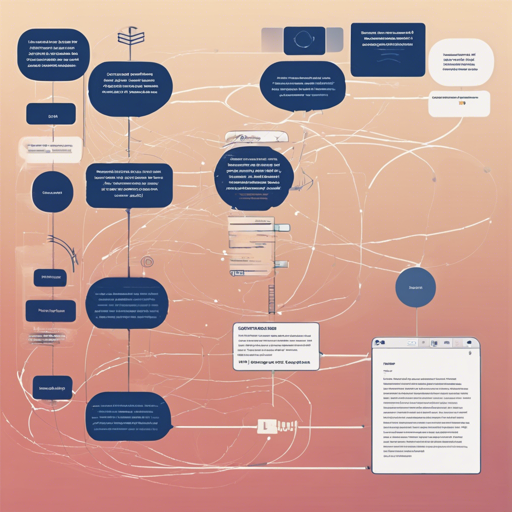

CRUST is a 168-speaker model that uses the Uberduck pipeline to deliver high-quality speech synthesis. If you’ve struggled to train models that need large datasets, CRUST can be a game changer: because it draws on a wide variety of voices, it generates good results even with minimal data.

The Mechanics Behind a Multispeaker Model

You might wonder what makes a multispeaker model unique. Think of it as a team of chefs cooking a dish together. Instead of relying on one chef’s recipe (or voice), CRUST draws on the collective experience of many chefs (or speakers). By generating an average voice from all speakers and then fine-tuning each speaker on that average, CRUST learns from diverse speech inputs, which makes it well suited to capturing different accents, genders, and pitches.

Training with Minimal Data

- Training Data: CRUST has been trained on roughly 20 hours of data from 168 different speakers—more than 19,800 audio files!

- Efficiency: This diverse training allows the model to home in on specific voice characteristics even with just one minute of training data.

This capability arises because CRUST already has a broad understanding of speech characteristics, akin to knowing various aspects of a language even if you haven’t practiced with all possible dialogues.
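To put the figures above in perspective, here is a small back-of-the-envelope calculation. It is only a sketch: it assumes the data is spread evenly across speakers and files, which the figures above do not state, and the function names are made up for illustration.

```python
# Approximate figures quoted in this article ("roughly" 20 hours, "more than" 19,800 files).
TOTAL_HOURS = 20
NUM_SPEAKERS = 168
NUM_FILES = 19_800


def minutes_per_speaker(total_hours, speakers):
    """Average minutes of audio per speaker, assuming an even split (an assumption)."""
    return total_hours * 60 / speakers


def seconds_per_file(total_hours, files):
    """Average clip length in seconds, assuming an even split (an assumption)."""
    return total_hours * 3600 / files


print(f"{minutes_per_speaker(TOTAL_HOURS, NUM_SPEAKERS):.1f} minutes per speaker on average")
print(f"{seconds_per_file(TOTAL_HOURS, NUM_FILES):.1f} seconds per file on average")
```

Under that even-split assumption, each speaker contributes only a few minutes of audio and each clip lasts a few seconds, so the pretraining set is broad rather than deep for any single voice.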

Consider the Downsides

While CRUST presents numerous advantages, here are some considerations:

- Training Time: Although training can be shorter than with models requiring larger datasets, it still takes considerable time.

- Dataset Quality: Ensure your datasets are clean and free from background noise and music, as both adversely affect training.

- Sufficient Data: More training data still yields better results, even though the model can work with as little as one minute.

- Audio Quality: The model is limited to mono audio files at 22050 Hz, which may not be suitable for all applications.
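Because of the 22050 Hz mono constraint, it can help to check clips before training. The following is a minimal sketch using Python's standard `wave` module. It assumes your clips are uncompressed WAV files, and the `check_wav` helper is a hypothetical name, not part of CRUST or the Uberduck pipeline.

```python
import wave

# Format limits stated in this article.
REQUIRED_RATE = 22050
REQUIRED_CHANNELS = 1


def check_wav(path):
    """Return a list of format problems for one WAV file (empty list means it passes)."""
    with wave.open(path, "rb") as wav:
        rate = wav.getframerate()
        channels = wav.getnchannels()

    problems = []
    if rate != REQUIRED_RATE:
        problems.append(f"sample rate is {rate} Hz, expected {REQUIRED_RATE} Hz")
    if channels != REQUIRED_CHANNELS:
        problems.append(f"found {channels} channels, expected mono")
    return problems
```

Calling `check_wav("clip.wav")` on each file and resampling or downmixing any clip that reports a problem keeps the dataset consistent. This check only covers format; it cannot detect background noise or music.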

Troubleshooting CRUST Issues

Sometimes, you may encounter issues while working with CRUST. Here are a few troubleshooting ideas:

- If your synthesized audio doesn’t sound realistic, try increasing the training time and ensuring a clean dataset.

- For unexpected artifacts in your audio files, check the quality of your input data and consider using tools that can enhance audio clarity.

- If the model’s outputs still seem off, you may need a larger dataset that covers a broader range of phonetic and syllabic variation.
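When deciding whether a dataset is too small, it helps to know how much audio you actually have. Here is a rough sketch that totals the duration of the WAV clips in a folder, again using only the standard library. The folder layout and the function names are assumptions for illustration, and the 60-second default simply mirrors the one-minute figure mentioned in this article.

```python
import wave
from pathlib import Path


def clip_seconds(path):
    """Duration of one WAV file in seconds (frames divided by frame rate)."""
    with wave.open(str(path), "rb") as wav:
        return wav.getnframes() / wav.getframerate()


def dataset_seconds(folder):
    """Total duration of every .wav file directly inside the folder."""
    return sum(clip_seconds(p) for p in sorted(Path(folder).glob("*.wav")))


def has_enough_audio(folder, minimum_seconds=60.0):
    """True if the folder holds at least minimum_seconds of audio (illustrative threshold)."""
    return dataset_seconds(folder) >= minimum_seconds
```

A total only slightly above one minute is a hint that adding more clean recordings is worth trying before changing anything else.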


Summary

CRUST offers an accessible entry into speech synthesis because it works efficiently with minimal data. Even so, dataset quality and good training practices remain essential for the best outcomes.
