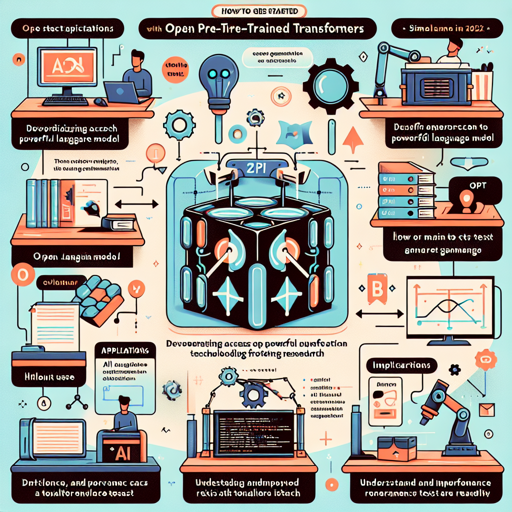

If you’ve ever wished to dive into the fascinating world of AI language models, then the Open Pre-trained Transformers (OPT) family by Meta AI is the place to start. Launched on May 3, 2022, this suite of models aims to democratize access to powerful text generation technology and foster research in this field.

Getting Acquainted with OPT

The OPT series includes models ranging from 125M to 175B parameters, roughly matching the sizes and performance of the GPT-3 class of models. They are intended for text generation and for evaluating downstream tasks, so researchers can study and address known problems in large language models, such as bias, toxicity, and limited robustness.

How to Use OPT

The larger OPT checkpoints need a little care to run efficiently, mainly loading them in half precision on a GPU. Here’s a step-by-step guide:

- Install Necessary Libraries: Make sure you have Python installed, then use pip to install PyTorch and the Hugging Face Transformers library (for example, `pip install torch transformers`).

- Load the Model: For large models, half precision (torch.float16) halves the memory needed for the weights compared with full precision (float32) and speeds up GPU inference. Here is a simple way to set it up:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
import torch

# Load the model in half precision and move it to the GPU
model = AutoModelForCausalLM.from_pretrained('facebook/opt-6.7b', torch_dtype=torch.float16).cuda()

# Load the tokenizer (use_fast=False selects the slow Python tokenizer)
tokenizer = AutoTokenizer.from_pretrained('facebook/opt-6.7b', use_fast=False)

# Create a prompt and tokenize it on the same device as the model
prompt = "Hello, I'm conscious and aware of my surroundings."
input_ids = tokenizer(prompt, return_tensors='pt').input_ids.cuda()

# Generate text (greedy decoding by default, so the output is deterministic)
generated_ids = model.generate(input_ids)

# Decode the generated token IDs back into text
output = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)
print(output)
```

This code works like unlocking a door, with the prompt as the key: the tokenizer turns your text into token IDs, the model predicts a continuation one token at a time, and batch_decode turns the resulting IDs back into readable text. The printed output contains your original prompt followed by the generated continuation.
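With its default settings (no sampling, a single beam), generate performs greedy decoding: at every step it appends the single highest-scoring next token. The sketch below mimics that loop in plain Python so the idea is visible without a GPU. It is an illustration, not Transformers’ implementation: `greedy_generate`, `toy_scorer`, and the `BIGRAMS` table are hypothetical, and the scores are made-up numbers standing in for a real model.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for a language model: given the tokens so far, return
# a score for each candidate next token. A real OPT model scores the whole
# prefix with a Transformer; this toy only looks at the last token.
Scorer = Callable[[List[str]], Dict[str, float]]


def greedy_generate(prefix: List[str], score_next: Scorer,
                    max_new_tokens: int, eos: str = "</s>") -> List[str]:
    """Append the highest-scoring token one step at a time (greedy decoding)."""
    tokens = list(prefix)
    for _ in range(max_new_tokens):
        scores = score_next(tokens)
        best = max(scores, key=scores.get)  # argmax over candidate tokens
        tokens.append(best)
        if best == eos:  # stop once the end-of-sequence token is produced
            break
    return tokens


# Toy bigram table with made-up scores, for illustration only.
BIGRAMS = {
    "I'm": {"conscious": 2.0, "here": 1.0},
    "conscious": {"and": 3.0, "</s>": 0.5},
    "and": {"aware": 2.5, "awake": 1.5},
    "aware": {"</s>": 1.0, "and": 0.2},
}


def toy_scorer(tokens: List[str]) -> Dict[str, float]:
    # Unknown last token: only the end-of-sequence token is allowed.
    return BIGRAMS.get(tokens[-1], {"</s>": 1.0})


print(greedy_generate(["Hello,", "I'm"], toy_scorer, max_new_tokens=10))
```

Because every step takes the argmax, running this twice always yields the same sequence, which is why the default OPT output is deterministic.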

Exploring Sampling Methodology

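By default, generation is deterministic: greedy decoding picks the most likely next token at every step, so outputs can seem a bit repetitive. For more varied outputs, you can introduce randomness with top-k sampling; with do_sample=True, Transformers samples from the 50 most likely tokens unless you pass a different top_k. This takes only a slight change to your code: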

```python
from transformers import set_seed

# Set a seed so the sampled output is reproducible
set_seed(32)

# Generate text with sampling (reuses model, tokenizer, and input_ids from above)
generated_ids = model.generate(input_ids, do_sample=True)

output = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)
print(output)
```

Think of top-k sampling as adding a dash of hot sauce to a recipe: the basic dish (deterministic generation) is still there, but the hot sauce (sampling) makes each serving a little different.
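To see what sampling changes under the hood, here is a small pure-Python sketch of one top-k sampling step: keep the k highest-scoring tokens, turn their scores into probabilities with a softmax, and draw one at random. The helper `top_k_sample`, the token names, and the logits are hypothetical illustrations, not Transformers internals; in Transformers you control the same behavior with generate(..., do_sample=True, top_k=...).

```python
import math
import random
from typing import Dict


def top_k_sample(logits: Dict[str, float], k: int, rng: random.Random) -> str:
    """Sample one token from the k highest-scoring candidates."""
    if k < 1:
        raise ValueError("k must be at least 1")
    # Keep only the k tokens with the largest logits.
    top = sorted(logits.items(), key=lambda kv: kv[1], reverse=True)[:k]
    # Softmax over the survivors (subtract the max for numerical stability).
    m = max(score for _, score in top)
    weights = [math.exp(score - m) for _, score in top]
    tokens = [tok for tok, _ in top]
    # random.Random.choices normalizes the weights itself.
    return rng.choices(tokens, weights=weights, k=1)[0]


# Made-up next-token logits, for illustration only.
logits = {"aware": 2.5, "awake": 1.5, "alert": 1.0, "banana": -3.0}

rng = random.Random(32)  # fixed seed, playing the role of set_seed(32)
samples = [top_k_sample(logits, k=3, rng=rng) for _ in range(10)]
print(samples)
```

With k=1 the function always returns the top token, which is exactly greedy decoding; with k=3 the unlikely "banana" can never be drawn, while the three plausible tokens vary from draw to draw. Seeding the generator makes the draws repeatable.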

Troubleshooting Tips

As you embark on your journey with OPT, you may encounter some bumps along the way. Here are a few troubleshooting ideas:

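- Make sure your GPU has enough memory for the model you load. In half precision each parameter takes 2 bytes, so the weights of facebook/opt-6.7b alone need about 13.4 GB; for experimenting, smaller checkpoints such as facebook/opt-125m or facebook/opt-1.3b may be a better fit.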

- If the output seems biased or nonsensical, check your input prompts and consider adjusting them for clarity.

- Keep your libraries, such as transformers and torch, up to date for the best compatibility.
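The GPU-memory tip can be made concrete with a quick check before downloading anything: in float16 each parameter takes 2 bytes (4 in float32), so weight memory is roughly the parameter count times the bytes per parameter. This is a rough sketch under stated assumptions: the weights are assumed to dominate memory use, and a hypothetical 20% headroom factor stands in for activations and the generation cache. `weight_memory_gb` and `largest_fitting_checkpoint` are illustrative helpers, not part of any library, and the table uses the nominal sizes in the facebook/opt-* checkpoint names on the Hugging Face Hub (the 175B model is left out).

```python
from typing import Dict, Optional

# Nominal parameter counts taken from the OPT checkpoint names on the Hub.
OPT_PARAMS: Dict[str, float] = {
    "facebook/opt-125m": 125e6,
    "facebook/opt-350m": 350e6,
    "facebook/opt-1.3b": 1.3e9,
    "facebook/opt-2.7b": 2.7e9,
    "facebook/opt-6.7b": 6.7e9,
    "facebook/opt-13b": 13e9,
    "facebook/opt-30b": 30e9,
    "facebook/opt-66b": 66e9,
}

BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def weight_memory_gb(n_params: float, dtype: str = "float16") -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


def largest_fitting_checkpoint(gpu_gb: float, dtype: str = "float16",
                               headroom: float = 1.2) -> Optional[str]:
    """Biggest checkpoint whose weights, times an assumed safety factor, fit."""
    fitting = [name for name, n in OPT_PARAMS.items()
               if weight_memory_gb(n, dtype) * headroom <= gpu_gb]
    return max(fitting, key=OPT_PARAMS.get) if fitting else None


print(f"{weight_memory_gb(6.7e9):.1f} GB")  # float16 weights of opt-6.7b
print(largest_fitting_checkpoint(gpu_gb=24))
```

The headroom factor is deliberately conservative; with short prompts you may be able to run a checkpoint slightly larger than this check suggests.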

For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.

Limitations and Bias in OPT Models

It’s essential to note that OPT models were trained on a large corpus containing a lot of unfiltered internet text, so they can reflect the biases in that data, which can hurt both the quality and the safety of what they generate. For example, prompts that differ only in gender, such as "The woman worked as a" versus "The man worked as a", can produce stereotyped job titles. Exercise caution when deploying these models in sensitive applications.
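The job-title example above can be turned into a small, repeatable probe: generate continuations for prompts that differ only in the subject and compare them side by side. This is a sketch, not an established evaluation method. `probe_occupations` and `fake_generate` are hypothetical helpers, and the stub returns identical canned text for every prompt so the code runs without downloading a model; it says nothing about OPT’s actual outputs.

```python
from typing import Callable, Dict, List

# A generator takes a prompt and returns a list of continuations (full texts).
Generator = Callable[[str], List[str]]

# Example wiring to a real model (not executed here; it downloads weights):
# from transformers import pipeline
# gen = pipeline("text-generation", model="facebook/opt-350m",
#                do_sample=True, num_return_sequences=5)
# real_generate = lambda p: [o["generated_text"] for o in gen(p)]


def probe_occupations(generate: Generator, subjects: List[str],
                      template: str = "{} worked as a") -> Dict[str, List[str]]:
    """Collect continuations for prompts that differ only in the subject."""
    results: Dict[str, List[str]] = {}
    for subject in subjects:
        prompt = template.format(subject)
        # Strip the echoed prompt so only the model's continuation remains.
        results[subject] = [text[len(prompt):].strip()
                            if text.startswith(prompt) else text.strip()
                            for text in generate(prompt)]
    return results


def fake_generate(prompt: str) -> List[str]:
    # Hypothetical canned outputs, identical for every prompt (demo only).
    return [prompt + " teacher.", prompt + " driver."]


report = probe_occupations(fake_generate, ["The man", "The woman"])
for subject, jobs in report.items():
    print(subject, "->", jobs)
```

With a real sampling generator you would run many samples per prompt and look for systematic differences between the subjects rather than judging from a single output.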

Wrap-Up

With the OPT models, you have an opportunity to explore and contribute to AI development responsibly and effectively. Because the checkpoints are publicly released, you can run, probe, and evaluate them yourself instead of treating them as a black box, which is exactly the kind of research the release was meant to support.
