If you’re getting started with Natural Language Processing (NLP) and want to use a language model for Named Entity Recognition (NER), you’ve come to the right place. In this guide, we will walk through using the RoBERTa-base model for NER with the MIT Movie dataset.

What is the RoBERTa-Base Model?

RoBERTa-base is a transformer-based language model that can be fine-tuned for a variety of NLP tasks. It captures the context in which words appear, which makes it a good fit for named entity recognition: identifying key pieces of information in text and classifying them into predefined categories.
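
To make "classifying into predefined categories" concrete, here is a minimal sketch of how per-token labels turn into entities. It assumes the common BIO tagging scheme (B- starts an entity, I- continues it, O is outside any entity). The sentence, the tags, and the helper name `group_entities` are made up for illustration and are not taken from the model or dataset.

```python
# Illustrative only: the sentence and its tags are invented for this sketch.
tokens = ["show", "me", "films", "directed", "by", "christopher", "nolan", "from", "2010"]
tags = ["O", "O", "O", "O", "O", "B-DIRECTOR", "I-DIRECTOR", "O", "B-YEAR"]


def group_entities(tokens, tags):
    """Merge BIO-tagged tokens into (label, text) pairs."""
    entities = []
    current_label, current_words = None, []
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            # A B- tag closes any open entity and starts a new one.
            if current_label is not None:
                entities.append((current_label, " ".join(current_words)))
            current_label, current_words = tag[2:], [token]
        elif tag.startswith("I-") and current_label == tag[2:]:
            # An I- tag with the same label extends the open entity.
            current_words.append(token)
        else:
            # "O" (or an I- tag that does not match) closes the open entity.
            if current_label is not None:
                entities.append((current_label, " ".join(current_words)))
            current_label, current_words = None, []
    if current_label is not None:
        entities.append((current_label, " ".join(current_words)))
    return entities


entities = group_entities(tokens, tags)
print(entities)  # [('DIRECTOR', 'christopher nolan'), ('YEAR', '2010')]
```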

Setting Up Your Environment

- Make sure you have suitable hardware, preferably a high-performance GPU such as the Tesla V100.

- Install the necessary libraries, including Hugging Face Transformers.
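
Before going further, it can help to confirm that the libraries are importable. The sketch below uses only the standard library. The helper name `missing_packages` is hypothetical, and listing `torch` as a requirement is an assumption (the guide itself only names Hugging Face Transformers).

```python
import importlib.util


def missing_packages(names):
    """Return the top-level package names that cannot be imported here."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# "torch" is an assumed backend; adjust the list to match your setup.
required = ["transformers", "torch"]
missing = missing_packages(required)

if missing:
    print("Missing packages:", ", ".join(missing))
else:
    print("All required packages are importable.")
```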

Implementing the RoBERTa-Base NER Pipeline

The goal is to load the RoBERTa-base model into a pipeline for NER tasks. Here’s how you can do that:

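```python
from transformers import pipeline

# Model ID on the Hugging Face Hub, in the form namespace/model-name
model_name = "thatdramebaazguy/roberta-base-MITmovie"

ner = pipeline(task="ner", model=model_name, tokenizer=model_name, revision="v1.0")
```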

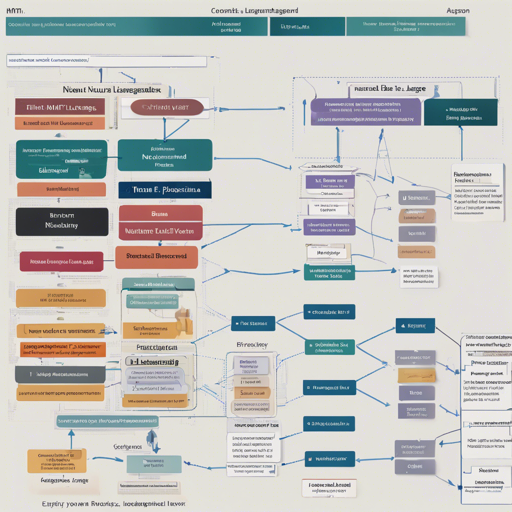
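
Calling a token-classification pipeline on a string returns a list of dictionaries, one per labeled token, with fields such as `entity`, `score`, `start`, and `end`. The sketch below shows one way to keep only confident predictions. The sample output is invented for illustration (real results carry additional fields and different scores), and `confident_predictions` is a hypothetical helper, not part of the library.

```python
text = "movies with tom hanks from 1994"

# Hypothetical pipeline output: the keys mirror the pipeline's result format,
# but the labels and scores are made up for this sketch.
sample_output = [
    {"entity": "B-ACTOR", "score": 0.98, "start": 12, "end": 15},
    {"entity": "I-ACTOR", "score": 0.97, "start": 16, "end": 21},
    {"entity": "B-YEAR", "score": 0.62, "start": 27, "end": 31},
]


def confident_predictions(text, results, threshold=0.9):
    """Return (label, matched_text) pairs whose score meets the threshold."""
    return [
        (item["entity"], text[item["start"]:item["end"]])
        for item in results
        if item["score"] >= threshold
    ]


kept = confident_predictions(text, sample_output)
print(kept)  # [('B-ACTOR', 'tom'), ('I-ACTOR', 'hanks')]
```

The threshold of 0.9 is arbitrary. In practice you would tune it against a held-out set rather than pick it by eye.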

Understanding the Training Configuration

Here’s a quick analogy to understand the components involved:

Imagine you’re training a chef (the model) to cook a special dish (the NER task) with a unique recipe (the MIT Movie Dataset). The following ingredients are crucial for this recipe:

- Num examples: 6253 – This represents the variety of ingredients (data points) you’re using.

- Num Epochs: 5 – This is how many times you’ll have the chef practice to perfect the dish.

- Batch size: 64 – This indicates how many dishes the chef will prepare at once before refreshing their preparation skills (updating weights).
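
These three numbers determine how many weight updates the training run performs. The arithmetic below is a sketch under stated assumptions: a single device, no gradient accumulation, and the final partial batch kept rather than dropped. None of these details are stated in the configuration above.

```python
import math

num_examples = 6253
num_epochs = 5
batch_size = 64

# One weight update per batch; the last batch of each epoch may be smaller.
steps_per_epoch = math.ceil(num_examples / batch_size)
last_batch_size = num_examples - (steps_per_epoch - 1) * batch_size
total_steps = steps_per_epoch * num_epochs

print(steps_per_epoch)   # 98
print(last_batch_size)   # 45
print(total_steps)       # 490
```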

Performance Metrics

Evaluating our chef’s (model’s) performance after training involves several key metrics:

- Eval Accuracy: 0.9476 – How accurately the chef identifies ingredients.

- Eval F1 Score: 0.8853 – The harmonic mean of precision and recall, indicating balance in our chef’s skill.

- Eval Loss: 0.2208 – The level of errors made in preparation, with lower values being better.
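
To see how the F1 score combines precision and recall, here is a small sketch that scores predicted entity spans against gold spans. It assumes entity-level exact matching, which may or may not be how the score above was computed, and the spans are invented for illustration.

```python
def precision_recall_f1(gold, predicted):
    """Compute precision, recall, and F1 from two sets of (start, end, label) spans."""
    true_positives = len(gold & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Made-up spans: two of the four predictions match the three gold entities.
gold = {(5, 7, "DIRECTOR"), (8, 9, "YEAR"), (2, 3, "GENRE")}
predicted = {(5, 7, "DIRECTOR"), (8, 9, "YEAR"), (0, 1, "TITLE"), (3, 4, "ACTOR")}

precision, recall, f1 = precision_recall_f1(gold, predicted)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

Because the harmonic mean is pulled toward the smaller of the two values, a model cannot reach a high F1 by maximizing precision or recall alone.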

Troubleshooting

In case you encounter issues during your implementation, here are a few troubleshooting tips:

- Check that your library versions are compatible with one another.

- If you run out of GPU memory, try reducing the batch size. If the model is running slowly, confirm that it is actually using the GPU.

- Review the dataset for any inconsistencies or anomalies that might cause errors during training.
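
The dataset review in the last tip can be partly automated. The sketch below flags two common problems in token-classification data, assuming BIO-style tags: a sentence whose token and tag counts differ, and an I- tag that does not continue an entity of the same type. The helper name `find_tag_problems` and the sample sentences are hypothetical.

```python
def find_tag_problems(sentences):
    """Return (sentence_index, message) pairs for common BIO inconsistencies."""
    problems = []
    for i, (tokens, tags) in enumerate(sentences):
        if len(tokens) != len(tags):
            problems.append((i, "token/tag length mismatch"))
            continue
        previous = "O"
        for position, tag in enumerate(tags):
            # An I- tag must follow a B- or I- tag with the same label.
            if tag.startswith("I-") and (previous == "O" or previous[2:] != tag[2:]):
                problems.append(
                    (i, f"{tag} at position {position} does not continue an entity")
                )
            previous = tag
    return problems


# Invented examples: the first is valid, the other two are not.
sentences = [
    (["funny", "movies"], ["B-GENRE", "O"]),
    (["with", "hanks"], ["O", "I-ACTOR"]),
    (["thrillers"], ["B-GENRE", "O"]),
]

problems = find_tag_problems(sentences)
for index, message in problems:
    print(f"sentence {index}: {message}")
```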

Conclusion

By following the steps outlined above, you now have a solid framework for using the RoBERTa-base model for NER tasks on the MIT Movie dataset.

Happy coding!