Stacked Generative Adversarial Networks (SGAN) were introduced in a CVPR 2017 paper of the same name. The architecture stacks several GANs so that an image is generated in stages, with each stage refining the output of the one before it. This guide covers what SGAN is, how to set it up, and how to troubleshoot common training problems.

What is SGAN?

SGAN extends the traditional Generative Adversarial Network (GAN) with the goal of improving the quality of generated images. Instead of mapping noise to an image with a single generator, it stacks several GANs and generates the image progressively: each level takes the output of the level above it and refines it, and the last level produces the final image.

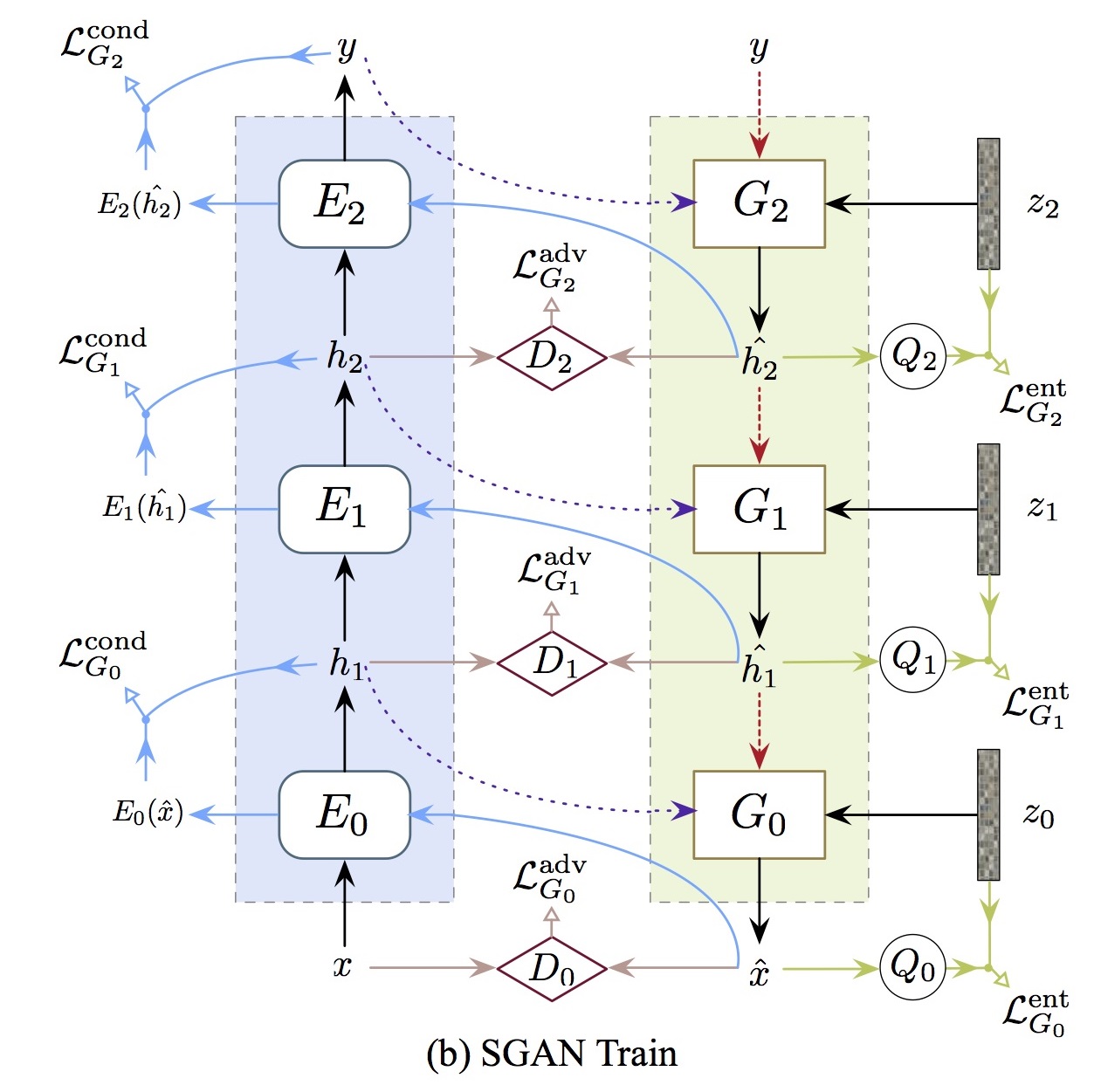

Architecture Overview

To visualize the architecture of SGAN, imagine a multi-layer cake. Each layer adds more detail and flavor to the cake, resembling how each stacked GAN layer contributes to the final image quality.

Here’s a quick representation of the SGAN architecture:
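The stacking idea can also be sketched in a few lines of NumPy. This is a minimal illustration of the data flow only, not the paper's implementation: the weights are random and untrained, the layer sizes and the `make_generator` and `sample` helpers are hypothetical, and it assumes each level receives the output of the level above plus its own noise vector.

```python
import numpy as np

rng = np.random.default_rng(0)

def make_generator(cond_dim, noise_dim, out_dim):
    """Return a toy one-layer generator: (condition, noise) -> lower-level output."""
    # Random, untrained weights: this shows data flow, not learning.
    w = rng.normal(scale=0.1, size=(cond_dim + noise_dim, out_dim))

    def generate(cond, noise):
        return np.tanh(np.concatenate([cond, noise], axis=1) @ w)

    return generate

# Hypothetical sizes: class label (10) -> features (64) -> features (256) -> 32x32x3 image.
level_dims = [10, 64, 256, 32 * 32 * 3]
noise_dim = 16
stack = [
    make_generator(level_dims[i], noise_dim, level_dims[i + 1])
    for i in range(len(level_dims) - 1)
]

def sample(labels):
    """Run the stack top-down: each level refines the output of the level above."""
    h = labels
    for generate in stack:
        z = rng.normal(size=(h.shape[0], noise_dim))  # fresh noise at every level
        h = generate(h, z)
    return h.reshape(-1, 32, 32, 3)

labels = np.eye(10)[[3, 7]]  # two one-hot class labels
images = sample(labels)
print(images.shape)  # (2, 32, 32, 3)
```

In a real model each level would be a trained network with its own discriminator; the loop in `sample` is the part that corresponds to the "layers of the cake".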

Getting Started with SGAN

To get started, you’ll need to set up your environment and follow these steps:

- Clone the repository for SGAN.

- Install the dependencies listed in the repository, such as TensorFlow or PyTorch.

- Download the dataset you’d like to use. Commonly used datasets include MNIST, SVHN, and CIFAR-10.

- Run the training script provided within the repository.
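Before running the training script, it can help to confirm that a deep learning framework is actually importable in your environment. The snippet below is a small sketch using only the standard library; the package names `torch` and `tensorflow` are assumptions based on the frameworks mentioned above, so adjust the list to whatever your cloned repository requires.

```python
import importlib.util

# Frameworks named in the steps above; adjust to what your repository requires.
CANDIDATE_FRAMEWORKS = ["torch", "tensorflow"]

def installed_frameworks(candidates=CANDIDATE_FRAMEWORKS):
    """Return the candidate packages that can be imported in this environment."""
    return [name for name in candidates if importlib.util.find_spec(name) is not None]

found = installed_frameworks()
if found:
    print("Found:", ", ".join(found))
else:
    print("No deep learning framework found; install one before training.")
```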

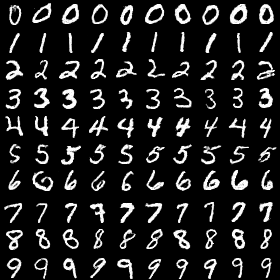

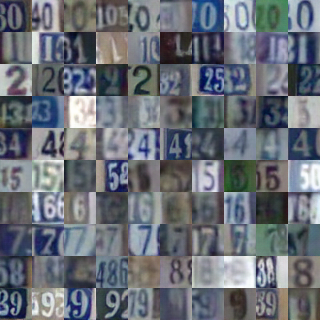

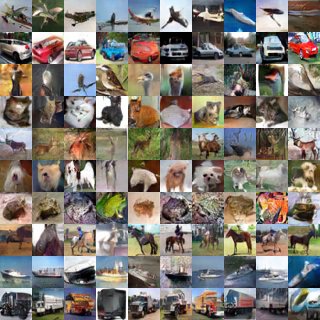

Sample Outputs

Here’s what you can expect from SGAN in terms of sample outputs:

Performance Comparison

On the CIFAR-10 dataset, SGAN achieves the highest Inception Score among the methods listed below (higher is better):

| Method | Inception Score |
|---|---|
| Infusion training | 4.62 ± 0.06 |
| GMAN (best variant) | 5.34 ± 0.05 |
| LR-GAN | 6.11 ± 0.06 |
| EGAN-Ent-VI | 7.07 ± 0.10 |
| Denoising feature matching | 7.72 ± 0.13 |
| DCGAN | 6.58 |
| SteinGAN | 6.35 |
| Improved GAN (best variant) | 8.09 ± 0.07 |
| AC-GAN | 8.25 ± 0.07 |
| SGAN | 8.59 ± 0.12 |
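For readers unfamiliar with the metric, the Inception Score is the exponential of the average KL divergence between the per-image class distribution p(y|x) and the marginal class distribution p(y). The sketch below computes it from an array of class probabilities. It assumes you already have those probabilities from a pretrained classifier; published scores are typically averaged over several splits of a large sample set, which this toy function does not do.

```python
import numpy as np

def inception_score(probs, eps=1e-12):
    """Inception Score from an (N, num_classes) array of class probabilities p(y|x)."""
    probs = np.asarray(probs, dtype=np.float64)
    marginal = probs.mean(axis=0, keepdims=True)  # p(y), averaged over all samples
    # KL(p(y|x) || p(y)) for each sample; eps guards against log(0).
    kl = np.sum(probs * (np.log(probs + eps) - np.log(marginal + eps)), axis=1)
    return float(np.exp(kl.mean()))

confident_and_diverse = np.eye(10)   # each sample confidently in a different class
uniform = np.full((10, 10), 0.1)     # every sample equally unsure about every class
print(inception_score(confident_and_diverse))  # close to 10, the maximum for 10 classes
print(inception_score(uniform))                # close to 1, the minimum
```

The two toy inputs show why higher is better: the score rewards samples that are individually recognizable and collectively varied.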

Troubleshooting Common Issues

Even with a powerful framework like SGAN, you might encounter some hiccups along the way. Here’s how to tackle common issues:

- Training Stalls or Fails: Check that your hardware meets the repository's requirements. Insufficient GPU or system memory is a common cause of stalls and out-of-memory failures; reducing the batch size lowers memory use.

- Low-Quality Outputs: Adjust hyperparameters such as learning rates or batch sizes. Be patient; training GANs often takes time to yield good results.

- Performance Issues: Monitor system usage. If the CPU or GPU is under heavy load, consider optimizing your code or using a more capable machine.

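When adjusting hyperparameters, it is easier to compare runs if the combinations are enumerated up front. The following is a minimal sketch of a grid over learning rates and batch sizes; the value ranges and the `build_grid` helper are hypothetical, and sensible values depend on the dataset and the implementation you are using.

```python
from itertools import product

# Hypothetical search ranges; tune these for your dataset and implementation.
learning_rates = [1e-4, 2e-4, 5e-4]
batch_sizes = [32, 64, 128]

def build_grid(lrs, sizes):
    """Return one config dict per (learning rate, batch size) combination."""
    return [{"learning_rate": lr, "batch_size": bs} for lr, bs in product(lrs, sizes)]

grid = build_grid(learning_rates, batch_sizes)
for config in grid:
    # Replace this print with a call to your own training entry point.
    print(config)
```

Starting with the smaller batch sizes also keeps memory use low, which helps if training stalls on limited hardware.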

For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.

Conclusion

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.