LLaMA (Large Language Model Meta AI) is an auto-regressive language model developed by the FAIR team at Meta AI. This guide walks you through loading and using the LLaMA-7B model with Hugging Face’s Transformers library, and covers troubleshooting tips and ethical considerations. Let’s dive in!

Understanding the LLaMA Model

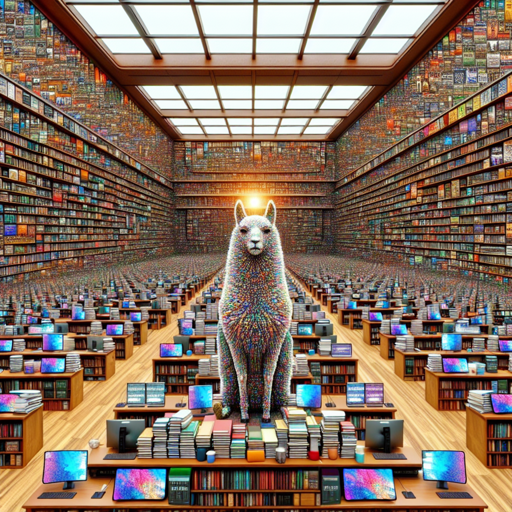

Before jumping into the practical steps, let’s break down the model itself. Picture the training data as a vast library of books (data sources) covering many languages and topics (knowledge), and LLaMA as the library assistant who has read them. When you ask a question, the assistant draws on what it has read to point you to the right section and retrieve the right book. The larger and better organized the library, the better the assistant (LLaMA) can help you!

Step-by-Step Instructions to Use LLaMA-7B with Transformers

- Installation: First, ensure you have the necessary libraries installed. You can do this via pip:

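    pip install transformers torch

- Loading the model and tokenizer: Import the Llama classes and load the weights and tokenizer from your model directory. In Transformers the classes are spelled LlamaForCausalLM and LlamaTokenizer:

    from transformers import LlamaForCausalLM, LlamaTokenizer

    # "model/LLaMA-7B" is the path used in this guide; point it at your own model directory
    model = LlamaForCausalLM.from_pretrained("model/LLaMA-7B")
    tokenizer = LlamaTokenizer.from_pretrained("model/LLaMA-7B")

- Generating text: Encode a prompt, generate a continuation, and decode the result back into text:

    input_text = "Artificial intelligence has the potential to"
    input_ids = tokenizer.encode(input_text, return_tensors='pt')

    # max_length counts the prompt tokens plus the newly generated tokens
    output = model.generate(input_ids, max_length=50)
    decoded_output = tokenizer.decode(output[0], skip_special_tokens=True)
    print(decoded_output)

Troubleshooting Tips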

If you encounter issues while using the LLaMA-7B model, consider the following troubleshooting ideas:

- Installation Issues: Ensure that both the Transformers and Torch libraries are installed correctly. Updating them might resolve compatibility issues.

- Model Not Found: Confirm that the model path is correctly specified. A typo in the model name can result in loading errors.

- Error Messages: Take note of error messages while running your scripts; they often provide clues on what might have gone wrong.

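- Performance Problems: If the model is slow, check your system resources. LLaMA-7B has roughly 7 billion parameters, so the weights alone need about 13 GiB of memory in 16-bit precision and about twice that in 32-bit. Generation on a CPU is much slower than on a GPU with enough memory.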

- Generating Toxic Content: LLaMA may produce biased or harmful text due to its training data. Assess the outputs critically and apply necessary risk mitigation.
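Several of the checks above can be scripted. The following is a minimal sketch, not an official diagnostic tool: the helper names (`installed_version`, `check_model_dir`, `weight_memory_gib`) are made up for illustration, the check for `config.json` assumes the model directory was saved in the usual Hugging Face layout, and `7e9` is a round figure for "7B" rather than the exact parameter count.

```python
from importlib import metadata
from pathlib import Path
from typing import List, Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_model_dir(path: str) -> List[str]:
    """Return a list of problems found with a local model directory (empty if none)."""
    problems = []
    model_dir = Path(path)
    if not model_dir.is_dir():
        problems.append(f"{path!r} is not an existing directory")
    elif not (model_dir / "config.json").is_file():
        # Assumption: the directory follows the usual Hugging Face layout.
        problems.append(f"{path!r} has no config.json")
    return problems


def weight_memory_gib(n_params: float, bytes_per_param: int) -> float:
    """Rough memory needed for the weights alone, in GiB (ignores activations)."""
    return n_params * bytes_per_param / 2**30


if __name__ == "__main__":
    # Installation issues: report which required packages are present.
    for pkg in ("transformers", "torch"):
        print(pkg, installed_version(pkg) or "NOT INSTALLED")

    # Model not found: verify the path used in this guide.
    for problem in check_model_dir("model/LLaMA-7B"):
        print("model path:", problem)

    # Performance problems: estimate the memory the weights need.
    print(f"32-bit weights: {weight_memory_gib(7e9, 4):.1f} GiB")
    print(f"16-bit weights: {weight_memory_gib(7e9, 2):.1f} GiB")
```

Running the script before loading the model gives a quick picture of what is missing. The memory figures cover the weights only, so actual usage during generation will be higher.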

Ethical Considerations

Using models like LLaMA raises ethical questions. Its training data likely includes biased and offensive content, so evaluate its outputs carefully before using them, particularly in sensitive areas. The model can also produce misinformation or harmful content, and that risk must be addressed to ensure responsible AI usage.
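One simple way to put this evaluation into practice is to hold back any generated text that matches a review list until a person has looked at it. The sketch below is only an illustration under stated assumptions: `REVIEW_TERMS`, `flag_for_review`, and `screen_outputs` are hypothetical names, and the terms are placeholders. Keyword matching is a crude first gate; it will not detect subtle bias or misinformation and does not replace human review.

```python
import re
from typing import Iterable, List, Tuple

# Hypothetical placeholder terms; replace with a list suited to your application.
REVIEW_TERMS = ("placeholder_term_a", "placeholder_term_b")


def flag_for_review(text: str, terms: Iterable[str] = REVIEW_TERMS) -> List[str]:
    """Return the single-word review terms that occur in text, ignoring case."""
    words = set(re.findall(r"\w+", text.lower()))
    return sorted(term for term in terms if term.lower() in words)


def screen_outputs(
    outputs: Iterable[str], terms: Iterable[str] = REVIEW_TERMS
) -> Tuple[List[str], List[Tuple[str, List[str]]]]:
    """Split generated texts into those that pass and those held for human review."""
    terms = list(terms)
    passed, held = [], []
    for text in outputs:
        hits = flag_for_review(text, terms)
        if hits:
            held.append((text, hits))  # keep the matched terms for the reviewer
        else:
            passed.append(text)
    return passed, held


if __name__ == "__main__":
    samples = [
        "Artificial intelligence has the potential to help doctors.",
        "This sample mentions placeholder_term_a on purpose.",
    ]
    passed, held = screen_outputs(samples)
    print("passed:", passed)
    print("held for review:", held)
```

In a real application the strings passed to `screen_outputs` would be the decoded model outputs, and the held items would go to a reviewer rather than being discarded silently.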

Conclusion

Using the LLaMA-7B model with Hugging Face’s Transformers takes only a few steps: load the model and tokenizer, encode a prompt, generate, and decode. That makes it a practical starting point for AI research and applications. As you proceed, remember to evaluate the model’s outputs critically for bias.

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.