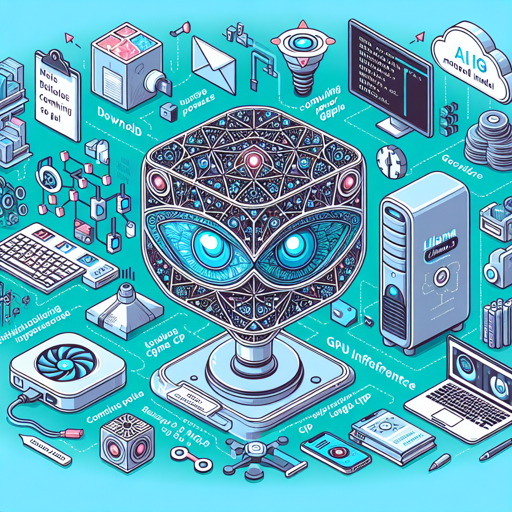

Welcome to the exciting world of AI model inference! In this blog post, we’ll explore how to run Meta’s LLaMA 30B GGML model files. With the right configuration and a basic understanding of the command-line options, you can use these files for local text generation in your AI projects.

Getting Started with Meta’s LLaMA 30B GGML

Meta’s LLaMA 30B GGML files are designed for CPU and GPU inference with llama.cpp and with the user interfaces (UIs) and libraries that support the GGML format. This versatility allows you to pick the right tool for your specific needs. Here’s a guide to getting everything set up properly.

Step-by-Step Instructions

- Download Required Files: Begin by obtaining the GGML model file you want from the model repository, for example the llama-30b.ggmlv3.q4_0.bin file used in the sample command.

- Set Up llama.cpp: Make sure llama.cpp is installed. It loads the GGML file and runs inference on the CPU, optionally offloading layers to the GPU.

- Run the Model: Use the command line to execute your model with the right parameters. Here’s a sample command:

./main -t 10 -ngl 32 -m llama-30b.ggmlv3.q4_0.bin --color -c 2048 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "### Instruction: Write a story about llamas"

- -t sets the number of CPU threads. Set it to the number of physical CPU cores on your system.

- -ngl sets the number of model layers to offload to the GPU. If you don’t have GPU acceleration, omit it.

- If you want a chat-style conversation, replace the -p prompt argument with -i, which starts llama.cpp in interactive mode.
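The sample command can also be assembled programmatically, which makes it easier to switch between CPU-only and GPU-offloaded runs. The following is a minimal sketch, not part of llama.cpp itself: the function name build_llama_command and its parameters are hypothetical, and it only uses the flags shown in the sample command.

```python
import shlex


def build_llama_command(model_path, prompt=None, threads=10, gpu_layers=None,
                        context=2048, temp=0.7, repeat_penalty=1.1,
                        binary="./main"):
    """Assemble the argument list for the llama.cpp main binary.

    Hypothetical helper: it mirrors the flags from the sample command only.
    """
    cmd = [binary, "-t", str(threads)]
    if gpu_layers:
        # Only pass -ngl when layers should be offloaded to the GPU.
        cmd += ["-ngl", str(gpu_layers)]
    cmd += ["-m", model_path, "--color", "-c", str(context),
            "--temp", str(temp), "--repeat_penalty", str(repeat_penalty),
            "-n", "-1"]
    if prompt is None:
        # No prompt given: start in interactive mode instead.
        cmd.append("-i")
    else:
        cmd += ["-p", prompt]
    return cmd


cmd = build_llama_command(
    "llama-30b.ggmlv3.q4_0.bin",
    prompt="### Instruction: Write a story about llamas",
    threads=10,
    gpu_layers=32,
)
# shlex.join quotes the prompt so the line can be pasted into a shell.
print(shlex.join(cmd))
```

To actually launch the model, the returned list can be passed to subprocess.run(cmd, check=True) from the directory that contains the llama.cpp binary. Passing a list rather than a single string avoids the quoting problems that the ### prompt would otherwise cause.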

Understanding the Code: An Analogy

Imagine you’re organizing a concert, and you need to allocate tasks to your team. Each team member has specific roles like setting up the stage, managing lighting, or selling tickets. Your command-line structure is like the concert setup, where:

- -t 10 is like assigning 10 team members to various tasks.

- -ngl 32 is akin to handing 32 of the jobs (like setting up the sound equipment) to a second, specialized crew, in this case the GPU.

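- The remaining flags and parameters (like --temp and --repeat_penalty) are your guidelines for how lively or structured you want the concert to be: a higher --temp makes the output more varied, and a higher --repeat_penalty discourages the model from repeating itself.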

By orchestrating these elements efficiently, just like a concert, you can ensure your AI model performs optimally!
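To make the effect of --temp and --repeat_penalty more concrete, here is a simplified sketch of the two ideas in plain Python. It is an illustration under stated assumptions, not llama.cpp’s actual sampler (which has additional stages): the function names and the four-token vocabulary are hypothetical, and the penalty follows the common approach of lowering the scores of recently generated tokens.

```python
import math


def apply_repeat_penalty(logits, recent_tokens, penalty=1.1):
    """Lower the score of every token that was generated recently."""
    out = list(logits)
    for tok in set(recent_tokens):
        # Dividing a positive score or multiplying a negative one both reduce it.
        out[tok] = out[tok] / penalty if out[tok] > 0 else out[tok] * penalty
    return out


def softmax_with_temperature(logits, temp=0.7):
    """Convert scores to probabilities; temp < 1 sharpens, temp > 1 flattens."""
    scaled = [x / temp for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for a four-token vocabulary; token 0 was just generated.
logits = [2.0, 1.0, 0.5, -1.0]
penalized = apply_repeat_penalty(logits, recent_tokens=[0], penalty=1.1)

for temp in (0.7, 1.0, 1.5):
    probs = softmax_with_temperature(penalized, temp)
    print(temp, [round(p, 3) for p in probs])
```

Running the loop shows the top token taking a larger share of the probability at lower temperatures and a smaller share at higher ones, which is the "structured versus lively" trade-off from the analogy.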

Troubleshooting Tips

If you face any issues running the model, try the following troubleshooting steps:

- Check Your Commands: Ensure that your command syntax matches what’s required. A single typo can lead to errors!

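- Resource Management: Make sure your system has enough RAM for the model file you chose, and enough GPU memory for the layers you offload with -ngl, as listed in the model specifications. If memory is tight, lower the -ngl value.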

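- Review Compatibility: Validate that you are using compatible versions of libraries and tools, since an update can change which file formats load. For example, newer llama.cpp releases use the GGUF format and no longer load GGML files, so a ggmlv3 file needs a llama.cpp build that still supports GGML.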

- Community Support: For additional assistance, consider joining TheBloke AI’s Discord server, where you can discuss issues with fellow users.
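The resource and compatibility checks above can be partly automated before you launch the model. The sketch below rests on assumptions that you should verify against your llama.cpp version: the magic numbers for the GGML-family and GGUF containers, roughly 4.5 bits per weight for q4_0, and about 32.5 billion parameters for the "30B" model. The function names are hypothetical.

```python
import struct

# Assumed magic values, read as a little-endian uint32 at the start of the file.
GGML_FAMILY_MAGICS = {0x67676D6C: "ggml", 0x67676D66: "ggmf", 0x67676A74: "ggjt"}
GGUF_MAGIC_BYTES = b"GGUF"


def detect_format_from_header(header):
    """Return a short label for the container format given the first 8 bytes."""
    if header[:4] == GGUF_MAGIC_BYTES:
        return "gguf"
    if len(header) < 4:
        return "unknown"
    (magic,) = struct.unpack("<I", header[:4])
    name = GGML_FAMILY_MAGICS.get(magic)
    if name is None:
        return "unknown"
    if name == "ggml" or len(header) < 8:
        # The oldest layout carries no version field after the magic.
        return name
    (version,) = struct.unpack("<I", header[4:8])
    return f"{name} v{version}"


def detect_model_format(path):
    """Read the header of a model file and classify it."""
    with open(path, "rb") as f:
        return detect_format_from_header(f.read(8))


def estimate_q4_0_size_gb(n_params, bits_per_weight=4.5):
    """Rough q4_0 size: 32 weights share 16 bytes of 4-bit values plus a 2-byte scale."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumption: the "30B" model has about 32.5 billion parameters.
print(round(estimate_q4_0_size_gb(32.5e9), 1))
```

Treat the size estimate as a lower bound on the RAM you need, since the context buffer and runtime overhead come on top of the model weights. If detect_model_format reports "gguf" or "unknown" for a file you expected to be GGML, a version mismatch between the file and your llama.cpp build is a likely cause of load errors.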

For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.

Why Use Meta’s LLaMA Model?

This model is well suited to generating stories, dialogues, and other creative text. Because the GGML files work across different inference setups, you can run the model on your local machine or on cloud systems, on CPU alone or with GPU offloading.

Conclusion

By following these steps, you should now be equipped to run the Meta’s LLaMA 30B GGML model on your machine. Remember, experimentation is key to mastering AI techniques. Dive into your projects and explore what fascinating outputs you can create!
