RWKV is an RNN-style model that boasts transformer-level performance. This guide walks through the steps to test, train, and fine-tune the RWKV-5 and RWKV-6 models, and then covers some common troubleshooting tips.

Getting Started with RWKV Models

Before starting the RWKV training process, set up the environment: Python 3.10, Torch 2.3.1+cu121 (or the latest), CUDA 12.5+, and the latest version of DeepSpeed. Keep PyTorch Lightning pinned at version 1.9.5 rather than upgrading it with the other packages.
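A quick way to check these requirements before training is to compare the installed package versions against the ones listed above. The sketch below is only an illustration: `EXPECTED`, `installed_version`, and `check_environment` are hypothetical names, not part of the RWKV repository, and only the PyTorch Lightning pin is treated as exact.

```python
import sys
from importlib import metadata

# Versions taken from the paragraph above; only pytorch-lightning is pinned exactly.
# EXPECTED and check_environment are illustrative names, not part of the RWKV code.
EXPECTED = {
    "torch": None,                 # any recent version is accepted here
    "deepspeed": None,             # "latest", so no exact pin
    "pytorch-lightning": "1.9.5",  # exact pin
}

def installed_version(package):
    """Return the installed version string, or None if the package is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None

def check_environment(expected=EXPECTED, lookup=installed_version):
    """Return a list of human-readable problems; an empty list means no mismatch."""
    problems = []
    if sys.version_info[:2] != (3, 10):
        found = f"{sys.version_info.major}.{sys.version_info.minor}"
        problems.append(f"Python 3.10 expected, found {found}")
    for package, pin in expected.items():
        found = lookup(package)
        if found is None:
            problems.append(f"{package} is not installed")
        elif pin is not None and found != pin:
            problems.append(f"{package}=={pin} expected, found {found}")
    return problems
```

Calling `check_environment()` and printing each returned line gives a short report. The check does not inspect the CUDA toolkit version, which has to be verified separately.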

Testing RWKV-5 on MiniPile

To test RWKV-5 on MiniPile, follow these straightforward steps:

- Navigate to your terminal and execute:

pip install torch --upgrade --extra-index-url https://download.pytorch.org/whl/cu121

- Install the required packages:

pip install pytorch-lightning==1.9.5 deepspeed wandb ninja --upgrade

- Run the demo scripts:

cd RWKV-v5 && ./demo-training-prepare.sh && ./demo-training-run.sh

If training is working correctly, your loss curve should look almost identical to the provided reference curve.

Training RWKV-6

To train RWKV-6, simply add a testing flag in the demo-training commands:

./demo-training-prepare.sh --my_testing x060

./demo-training-run.sh --my_testing x060

Fine-tuning RWKV-5 Models

Fine-tuning allows the RWKV models to adapt precisely to your datasets. To get started:

- Convert your data to the .jsonl format. Check here for specifications.

- Run the following command to tokenize your data:

python make_data.py --input your-file.jsonl --output train.np

- Rename the checkpoint in your model folder to rwkv-init.pth for training.

- Execute the training command with appropriate hyperparameters:

python train.py --n_layer 32 --n_embd 4096 --vocab_size 65536 --lr_init 1e-5 --lr_final 1e-5
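For the first step, a plain-text corpus can be turned into .jsonl with the standard library. This is a minimal sketch that assumes each record is a JSON object with a single `text` field. That layout is an assumption, not something this guide specifies, so confirm it against the format specification before tokenizing. The `write_jsonl` helper is an illustrative name.

```python
import json

def write_jsonl(documents, path):
    """Write one JSON object per line and return the number of records written.

    Assumes a {"text": ...} record layout, which this guide does NOT confirm.
    """
    count = 0
    with open(path, "w", encoding="utf-8") as handle:
        for doc in documents:
            doc = doc.strip()
            if not doc:
                continue  # skip empty documents instead of writing blank records
            # json.dumps escapes embedded newlines, so each record stays on one line;
            # ensure_ascii=False keeps non-ASCII text readable in the file.
            handle.write(json.dumps({"text": doc}, ensure_ascii=False) + "\n")
            count += 1
    return count
```

For example, `write_jsonl(["First document.", "Second document."], "your-file.jsonl")` produces a two-line file that can then be passed to the tokenization step.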

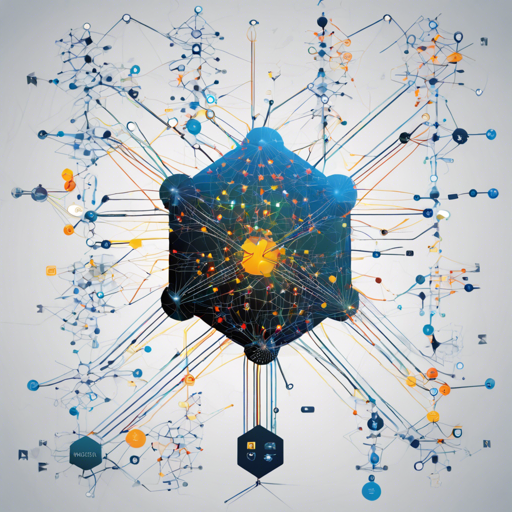

Understanding the RWKV Code with an Analogy

Think of RWKV as a well-organized library where each section holds the knowledge of different topics. The books represent the data, and the librarian symbolizes the model that helps us fetch and organize that knowledge efficiently.

In terms of functionality:

- The information stored in books (data tokens) flows to the librarian (model) who continuously updates the sections.

- Context stays in order within the library’s framework: the librarian makes sure the right books (tokens) are accessed at the right times rather than left scattered.

As new books enter the library (new tokens), the librarian (model) integrates this information without losing what was previously stored, ensuring a comprehensive knowledge base (state) is always available.
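In code terms, the analogy corresponds to a recurrent state update: each new token changes a fixed-size state, and the output is read from that state rather than from the full history. The sketch below is a toy decayed weighted average in plain Python, loosely in the spirit of RNN-style time mixing. It is not the RWKV-5 or RWKV-6 kernel, and `decay`, `keys`, and `values` are illustrative scalars rather than learned parameters.

```python
import math

def run_recurrence(keys, values, decay=0.9):
    """Process tokens one at a time, keeping only a fixed-size state.

    The state is (num, den): a decayed, exp(key)-weighted sum of values
    and the matching sum of weights. Toy illustration only.
    """
    num, den = 0.0, 0.0
    outputs = []
    for k, v in zip(keys, values):
        weight = math.exp(k)            # how strongly this token is written into the state
        num = decay * num + weight * v  # older contributions fade but remain in the sum
        den = decay * den + weight
        outputs.append(num / den)       # read-out: weighted average of what was seen so far
    return outputs
```

The state is two numbers regardless of how many tokens have been processed, which is the property the librarian analogy is pointing at: new information is folded in without storing the whole sequence.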

Common Troubleshooting Tips

If you encounter any issues or inconsistencies while training or running your models, consider the following:

- Ensure you have the right versions of all dependencies as mentioned at the beginning of this guide.

- If your loss curve looks erratic, consider adjusting your learning rate and batch size.

- Check for any potential errors in the data input format, especially during fine-tuning.
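The data-format check can be automated before tokenizing. The sketch below reports lines in a .jsonl file that are blank, are not valid JSON, or lack a non-empty string field. The field name `text` is an assumption, not something this guide specifies, and `find_bad_lines` is a hypothetical helper.

```python
import json

def find_bad_lines(path, field="text"):
    """Return (line_number, reason) pairs for records that look malformed."""
    bad = []
    with open(path, encoding="utf-8") as handle:
        for number, line in enumerate(handle, start=1):
            if not line.strip():
                bad.append((number, "blank line"))
                continue
            try:
                record = json.loads(line)
            except json.JSONDecodeError as err:
                bad.append((number, f"invalid JSON: {err.msg}"))
                continue
            if not isinstance(record, dict):
                bad.append((number, "record is not a JSON object"))
            elif not isinstance(record.get(field), str) or not record[field].strip():
                bad.append((number, f"missing or empty '{field}' field"))
    return bad
```

An empty result means every line parsed and carried the expected field. It does not guarantee that the tokenizer will accept the content.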

