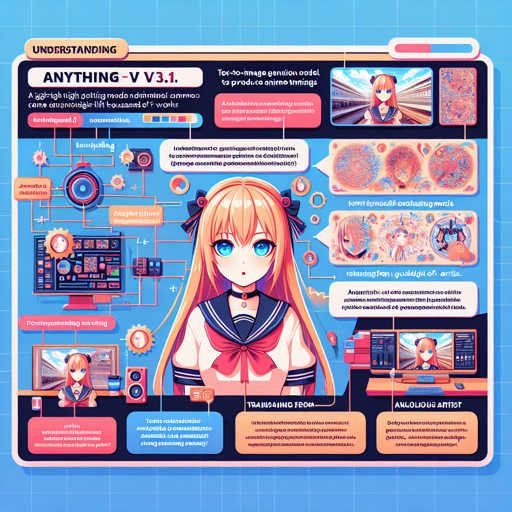

Welcome to the colorful world of Anything V3.1! This diffusion-based text-to-image generation model allows you to create beautiful anime-themed images from simple text prompts. In this blog, we will walk you through the steps to effectively use Anything V3.1, explore its fascinating features, and troubleshoot common issues you might encounter along the way. Let’s dive in!

Understanding Anything V3.1

Imagine Anything V3.1 as a sophisticated artist. This artist has learned from thousands of artworks, making them excellent at transforming written descriptions into vivid images. Just like a painter requires specific instructions to capture the right scene on canvas, Anything V3.1 needs well-crafted text prompts to generate high-quality visuals. With the right blend of artistic tags and prompt details, you can achieve remarkable results.

How to Use Anything V3.1

Download the model:

Adjust your text prompts:

- Begin your prompts with artistic tags such as masterpiece or best quality to enhance the aesthetic output.

- Consider adding negative prompts like lowres, bad anatomy, or blurry to avoid common issues in image generation.
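As a minimal sketch of the two tips above, a prompt can be assembled from tag lists so that the quality tags always come first. The helper `build_prompt` and the constants `QUALITY_TAGS` and `NEGATIVE_TAGS` are hypothetical names chosen for illustration, not part of any library; the tags themselves are the ones mentioned in this post.

```python
# Hypothetical helper (not part of diffusers): builds a comma-separated prompt.
QUALITY_TAGS = ["masterpiece", "best quality"]
NEGATIVE_TAGS = ["lowres", "bad anatomy", "blurry"]


def build_prompt(subject_tags, quality_tags=QUALITY_TAGS):
    """Join tags into one prompt, quality tags first, skipping duplicates."""
    seen = set()
    ordered = []
    for tag in list(quality_tags) + list(subject_tags):
        cleaned = tag.strip()
        key = cleaned.lower()
        # Keep only non-empty tags that have not appeared yet (case-insensitive)
        if cleaned and key not in seen:
            seen.add(key)
            ordered.append(cleaned)
    return ", ".join(ordered)


prompt = build_prompt(["1girl", "solo", "long blonde hair", "blue eyes"])
negative_prompt = ", ".join(NEGATIVE_TAGS)
print(prompt)
print(negative_prompt)
```

The two resulting strings can be passed to the pipeline as its prompt and `negative_prompt` arguments.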

Run the pipeline:

- First, ensure the following dependencies are installed:

```bash
pip install diffusers transformers accelerate scipy safetensors
```

- Next, use this Python script to generate your images:

```python
import torch
from torch import autocast
from diffusers import StableDiffusionPipeline, DPMSolverMultistepScheduler

# Load the model in half precision and switch to the DPM-Solver multistep scheduler
model_id = "cag/anything-v3-1"
pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)
pipe.scheduler = DPMSolverMultistepScheduler.from_config(pipe.scheduler.config)
pipe = pipe.to("cuda")

prompt = "masterpiece, best quality, high quality, 1girl, solo, sitting, confident expression, long blonde hair, blue eyes, formal dress"
negative_prompt = "lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry"

# Generate a single 512x728 image under mixed-precision autocast
with autocast("cuda"):
    image = pipe(
        prompt,
        negative_prompt=negative_prompt,
        width=512,
        height=728,
        guidance_scale=12,
        num_inference_steps=50,
    ).images[0]

image.save("anime_girl.png")
```

Limitations to Note

While Anything V3.1 is a powerful tool, it does come with limitations. Its training has specialized it for feminine characters, so masculine characters are hard to generate without highly specific prompts. The model is also "lazy" about sparse prompts: it often needs the tag “1girl” before it yields acceptable results.

Troubleshooting Common Issues

Here are some troubleshooting steps to help you navigate any roadblocks while using Anything V3.1:

- If you’re not achieving the desired image quality, revisit your prompts. Ensure you are incorporating high-aesthetic tags and valid negative prompts.

- Make sure your dependencies are installed correctly; run install commands again if necessary.

- If encountering CUDA-related errors, ensure that your environment supports CUDA and that the GPU is properly configured.
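The dependency and CUDA checks above can be sketched as a short script to run before generating images. This is an illustrative check, not an official diagnostic: the function names `missing_packages` and `pick_device` are hypothetical, and the package list is taken from the install command in this post.

```python
import importlib.util

# Packages from the install command in this post
REQUIRED = ["diffusers", "transformers", "accelerate", "scipy", "safetensors"]


def missing_packages(names):
    """Return the names that cannot be found as importable modules."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def pick_device():
    """Return "cuda" if PyTorch is importable and sees a GPU, else "cpu"."""
    if importlib.util.find_spec("torch") is None:
        return "cpu"
    try:
        import torch
    except ImportError:
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


missing = missing_packages(REQUIRED)
if missing:
    print("Missing packages:", ", ".join(missing))
else:
    print("All required packages found.")
print("Device:", pick_device())
```

If the script reports `cpu`, the `pipe.to("cuda")` call in the generation script will fail, which points to a driver, GPU, or PyTorch build problem rather than a prompt problem.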


Model License

Anything V3.1 is available under the CreativeML Open RAIL++-M License, allowing users to create and share beautiful artwork freely as long as they adhere to the usage guidelines.
