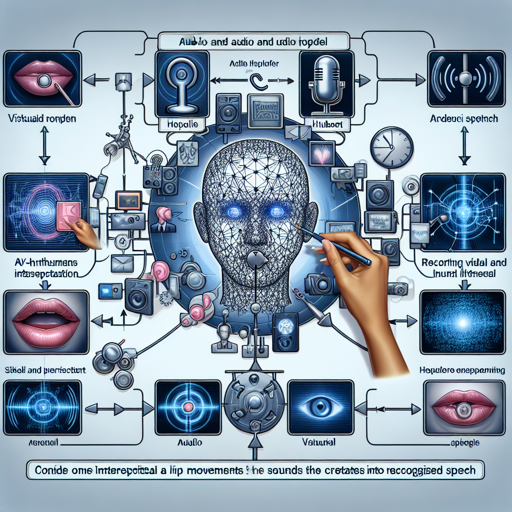

If you’re venturing into the world of audio-visual speech representation, AV-HuBERT is a good place to start. AV-HuBERT, which stands for Audio-Visual Hidden Unit BERT, is a self-supervised representation learning framework that uses both audio and visual (lip movement) information to improve speech recognition. This post walks through using AV-HuBERT for automatic speech recognition and offers troubleshooting tips along the way.

Getting Started with AV-HuBERT

AV-HuBERT learns from video recordings in which audio and visual cues are correlated, which gives it a robust signal for learning speech representations. Think of it as a skilled interpreter: the model observes both the speaker’s lip movements and the sounds they produce to work out what was said.

How AV-HuBERT Works: An Analogy

Imagine teaching a child to recognize fruits. You show them an apple while you say “apple.” Over time, the child learns that the round, red object with a stem goes with the sound “apple.” In a similar manner, AV-HuBERT uses both audio (the sound of speech) and visual cues (the lip movements) from the speaker to learn and predict what is being said. During pre-training, the model masks spans of the audio and video input and predicts the hidden units (cluster assignments) of the masked frames. Those hidden units are discovered automatically by clustering and refined over several iterations, so the prediction targets improve as the model’s features improve.
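To make the masking idea concrete, here is a toy sketch in plain Python. It is not the AV-HuBERT implementation: the function names, the span settings, and the "uniform guesser" standing in for the model are all assumptions for illustration. It only shows the shape of the objective, where per-frame cluster IDs act as targets and the loss is scored on masked frames.

```python
import math
import random

def make_span_mask(num_frames, span_len, num_spans, rng):
    """Mark `num_spans` random spans of `span_len` frames as masked (spans may overlap)."""
    mask = [False] * num_frames
    for _ in range(num_spans):
        start = rng.randrange(num_frames - span_len + 1)
        for t in range(start, start + span_len):
            mask[t] = True
    return mask

def masked_cross_entropy(probs, targets, mask):
    """Average negative log-likelihood of the target unit, over masked frames only."""
    masked_frames = [t for t, is_masked in enumerate(mask) if is_masked]
    if not masked_frames:
        raise ValueError("mask selects no frames")
    total = -sum(math.log(probs[t][targets[t]]) for t in masked_frames)
    return total / len(masked_frames)

rng = random.Random(0)
num_frames, num_units = 20, 5

# Hypothetical hidden units: one cluster ID per frame. In a real pipeline
# these would come from clustering features, not from a random generator.
targets = [rng.randrange(num_units) for _ in range(num_frames)]
mask = make_span_mask(num_frames, span_len=4, num_spans=2, rng=rng)

# Stand-in for a model: a uniform distribution over units at every frame.
uniform = [[1.0 / num_units] * num_units for _ in range(num_frames)]
loss = masked_cross_entropy(uniform, targets, mask)
print(f"masked frames: {sum(mask)}, loss: {loss:.4f}")
```

A uniform guesser scores log(5) on this objective, whichever frames are masked. A trained model would have to beat that by using the unmasked audio and video context.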

Setting Up AV-HuBERT

- Clone the official code repository (facebookresearch/av_hubert) from GitHub.

- Ensure you have the required dependencies installed. Check the README file for the specific library versions.

- Prepare your dataset for processing. AV-HuBERT was trained on LRS3 and VoxCeleb2, so match their format and preprocessing if you want to replicate the published results.

- Run the model on your dataset using the provided scripts, and observe the results.
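Before running the provided scripts, a quick sanity check on the dataset can save time. The sketch below assumes a hypothetical layout in which every video clip has a transcript file with the same name and a different extension. The function name and the `.mp4`/`.txt` extensions are assumptions, so adjust them to however your data is actually organized.

```python
from pathlib import Path

def find_unpaired_clips(root, video_ext=".mp4", text_ext=".txt"):
    """Return video files under `root` that lack a same-named transcript file.

    Assumes (hypothetically) that clip `x.mp4` is paired with `x.txt`
    in the same directory.
    """
    root = Path(root)
    missing = []
    for video in sorted(root.rglob(f"*{video_ext}")):
        if not video.with_suffix(text_ext).exists():
            missing.append(video)
    return missing
```

Calling `find_unpaired_clips("path/to/dataset")` returns a list of clips to fix or exclude. An empty list means every clip found has a transcript next to it.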

Example Use Case

Once your audio-visual inputs pass through AV-HuBERT, the model combines the audio with the lip movements captured in the video to transcribe the speech. The visual stream can improve transcription accuracy over audio alone, particularly when the recording is noisy.
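To put a number on transcription accuracy, a common metric is word error rate (WER): the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. The following is a minimal standalone sketch, not code from the AV-HuBERT repository, and `word_error_rate` is a name chosen here for illustration.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance (substitutions, insertions, deletions)
    divided by the number of words in the reference."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1 # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)

wer = word_error_rate("the cat sat on the mat", "the cat sat on mat")
print(f"WER: {wer:.3f}")
```

Here the hypothesis drops one of six reference words, so the WER is 1/6. Comparing WER with and without the video stream on the same clips is a simple way to see how much the visual input helps on your data.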

Troubleshooting Tips

While implementing AV-HuBERT, you might encounter some challenges. Here are a few troubleshooting ideas:

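- Model Performance Issues: If the recognition accuracy is not as expected, double-check your dataset. Make sure the speaker’s mouth is clearly visible, the audio and video are synchronized, and the recordings resemble the data the model was trained on. The quality of your input data is crucial for model performance.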

- Dependency Errors: If you run into issues with dependencies, ensure that you are using the right versions as specified in the repository. Using an incorrect version may lead to unexpected behavior.

- Environment Configuration: Verify your development environment setup. If errors persist, consider creating a virtual environment specifically for this project.

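For the dependency tip above, a short script can compare what is installed against the versions you expect. This is a sketch using the standard library’s `importlib.metadata`. The `check_versions` helper and the package pin shown are placeholders, not the project’s actual requirements, so copy the real pins from the repository README.

```python
from importlib.metadata import PackageNotFoundError, version

def check_versions(required):
    """Compare installed package versions against `required` ({name: version}).

    Returns a dict of {name: description} for packages that are missing or
    installed at a different version. An empty dict means everything matches.
    """
    problems = {}
    for name, wanted in required.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            problems[name] = f"not installed (want {wanted})"
            continue
        if installed != wanted:
            problems[name] = f"installed {installed}, want {wanted}"
    return problems

# Placeholder pin for illustration only; replace with the real requirements.
REQUIRED = {"example-package": "1.0.0"}
for name, issue in check_versions(REQUIRED).items():
    print(f"{name}: {issue}")
```

Running this inside a fresh virtual environment makes it easier to tell whether a failure comes from the project’s dependencies or from something else installed on the machine.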

In Conclusion

The AV-HuBERT model represents a significant advancement in audio-visual speech recognition, merging both auditory and visual cues to enhance understanding. At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.