Welcome to the world of Chinese-CLIP! This model links images with text descriptions, letting you measure how well a piece of Chinese text matches the visual content of an image. In this article, we'll walk you through using the Chinese-CLIP model and troubleshooting common issues you may encounter along the way.

Introduction

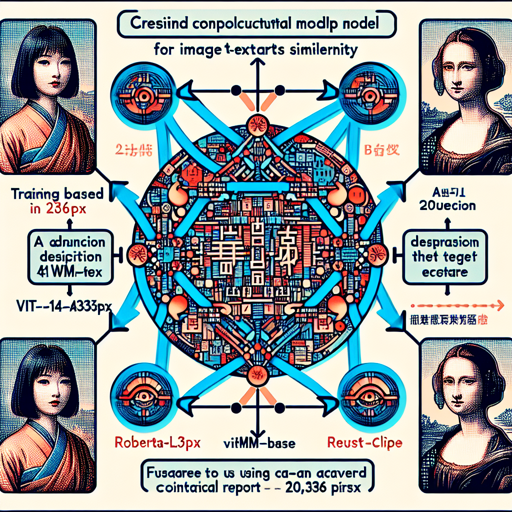

The Chinese-CLIP model covered here uses ViT-L/14@336px as its image encoder and RoBERTa-wwm-base as its text encoder. It was trained on a large-scale dataset of roughly 200 million Chinese image-text pairs. For the technical details, see the Chinese-CLIP technical report and the official GitHub repository.
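The encoder name packs in two numbers that determine how much work the image encoder does: the patch size (14 pixels) and the input resolution (336 pixels). The arithmetic below is a small sketch assuming the standard ViT design (a square input split into non-overlapping 14x14 patches, plus one [CLS] token); the helper `num_patches` is hypothetical and not part of the Chinese-CLIP code.

```python
# Patch-grid arithmetic for a ViT-L/14 image encoder at 336x336 input resolution.
# Assumption: square input, non-overlapping square patches, one extra [CLS] token,
# as in the standard ViT design.
IMAGE_SIZE = 336  # input resolution in pixels (the "@336px" in the name)
PATCH_SIZE = 14   # patch side length in pixels (the "14" in "L/14")


def num_patches(image_size: int, patch_size: int) -> int:
    """Return how many patch tokens a square image is split into."""
    if image_size % patch_size != 0:
        raise ValueError("image size must be divisible by patch size")
    per_side = image_size // patch_size
    return per_side * per_side


patches = num_patches(IMAGE_SIZE, PATCH_SIZE)
sequence_length = patches + 1  # +1 for the [CLS] token
side = IMAGE_SIZE // PATCH_SIZE
print(f"{side}x{side} grid -> {patches} patches, sequence length {sequence_length}")
```

For comparison, the same patch size at 224px gives a 16x16 grid of 256 patches, so the 336px variant processes 2.25 times as many image tokens.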

Getting Started: Using the Official API

This section will guide you through the process of utilizing the Chinese-CLIP model with ease.

Step-by-Step Instructions

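- First, make sure the necessary dependencies are installed:
  - Python 3
  - Pillow (imported as PIL)
  - transformers
  - PyTorch (the code requests PyTorch tensors via return_tensors="pt")
  - requests (used to download the example image)
- Next, run the following code snippet: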

```python
from PIL import Image
import requests
from transformers import ChineseCLIPProcessor, ChineseCLIPModel

model = ChineseCLIPModel.from_pretrained("OFA-Sys/chinese-clip-vit-large-patch14-336px")
processor = ChineseCLIPProcessor.from_pretrained("OFA-Sys/chinese-clip-vit-large-patch14-336px")

url = "https://clip-cn-beijing.oss-cn-beijing.aliyuncs.com/pokemon.jpeg"
image = Image.open(requests.get(url, stream=True).raw)
# Squirtle, Bulbasaur, Charmander, Pikachu in Chinese
texts = ["杰尼龟", "妙蛙种子", "小火龙", "皮卡丘"]

# compute image features
inputs = processor(images=image, return_tensors="pt")
image_features = model.get_image_features(**inputs)
image_features = image_features / image_features.norm(p=2, dim=-1, keepdim=True)  # normalize

# compute text features
inputs = processor(text=texts, padding=True, return_tensors="pt")
text_features = model.get_text_features(**inputs)
text_features = text_features / text_features.norm(p=2, dim=-1, keepdim=True)  # normalize

# compute image-text similarity scores
inputs = processor(text=texts, images=image, return_tensors="pt", padding=True)
outputs = model(**inputs)
logits_per_image = outputs.logits_per_image  # this is the image-text similarity score
probs = logits_per_image.softmax(dim=1)  # probabilities
```
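The snippet computes normalized features separately and then calls the full model to get logits_per_image. Based on our reading of the transformers implementation, those logits are the cosine similarities between the L2-normalized image and text embeddings, multiplied by a learned scale (`model.logit_scale.exp()`), and the softmax over the texts turns them into probabilities. The pure-Python sketch below reproduces that math on toy vectors; the 3-dimensional "embeddings" and the scale of 100 are hypothetical values chosen for illustration, not real model outputs.

```python
import math
from typing import List, Sequence


def l2_normalize(v: Sequence[float]) -> List[float]:
    """Scale a vector to unit length (same role as features / features.norm(...))."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def image_text_probs(image_vec: Sequence[float],
                     text_vecs: Sequence[Sequence[float]],
                     logit_scale: float = 100.0) -> List[float]:
    """Scaled cosine similarity for each text, then softmax over the texts.

    logit_scale is an assumed constant here; in the real model it is learned.
    """
    img = l2_normalize(image_vec)
    logits = []
    for t in text_vecs:
        txt = l2_normalize(t)
        cosine = sum(a * b for a, b in zip(img, txt))
        logits.append(logit_scale * cosine)
    return softmax(logits)


# Toy "embeddings" (hypothetical values, not produced by Chinese-CLIP).
image_vec = [0.9, 0.1, 0.2]
text_vecs = [
    [0.1, 0.9, 0.1],   # points away from the image direction
    [0.8, 0.2, 0.25],  # points close to the image direction
]
probs = image_text_probs(image_vec, text_vecs)
print([round(p, 4) for p in probs])
```

Because the scale multiplies cosine values that lie in [-1, 1], even modest differences in similarity become sharp differences in probability, which is why one caption usually dominates the softmax output.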

Understanding the Code: An Analogy

Think of the Chinese-CLIP model as a highly skilled translator in an art gallery. Each image is like a captivating artwork, and its respective description is akin to a curatorial note explaining the artwork’s significance.

In our code, we first let the translator (the model) study the artwork (the image) and encode its details. Then we hand the translator several candidate descriptions (the texts). The translator scores how well each description matches the artwork, and the softmax turns those scores into probabilities that sum to 1, so the best-matching description receives the highest probability, much as a curator would point visitors to the note that best fits a piece.
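The probabilities can be turned into a ranked list of descriptions by sorting them. The sketch below assumes the probabilities for a single image have already been converted to a plain Python list (with the real tensor, `probs[0].tolist()` gives the first image's row); the function name `rank_descriptions` and the probability values are hypothetical and for illustration only.

```python
from typing import List, Sequence, Tuple


def rank_descriptions(texts: Sequence[str],
                      probs: Sequence[float],
                      k: int = 3) -> List[Tuple[str, float]]:
    """Return the k (text, probability) pairs with the highest probability, best first."""
    if len(texts) != len(probs):
        raise ValueError("texts and probs must have the same length")
    pairs = sorted(zip(texts, probs), key=lambda pair: pair[1], reverse=True)
    return pairs[:k]


texts = ["杰尼龟", "妙蛙种子", "小火龙", "皮卡丘"]
# Hypothetical probabilities for one image (illustrative only, not model output).
probs = [0.02, 0.01, 0.05, 0.92]
for text, p in rank_descriptions(texts, probs, k=2):
    print(f"{text}: {p:.2f}")
```

Sorting in Python keeps the example dependency-free; when working directly with PyTorch tensors, `Tensor.topk` does the same job without leaving the tensor world.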

Troubleshooting

If you encounter problems while using the Chinese-CLIP model, here are some troubleshooting steps that may help:

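- Error Loading Images: Make sure the image URL is valid and reachable. You can test it by opening it in your browser.

- Library Issues: Make sure you have a recent version of transformers that includes ChineseCLIPModel and ChineseCLIPProcessor, along with Pillow, requests, and PyTorch. You can upgrade them with pip install --upgrade transformers pillow requests torch.

- Internet Connectivity: A stable connection is needed both to download the example image and, on the first run, to download the model weights from the Hugging Face Hub.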

- If issues persist, do not hesitate to reach out to the community for support or explore the documentation on our GitHub repository.
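For the library and connectivity checks above, two small helpers can save some guesswork. This is a hedged sketch, not part of Chinese-CLIP or transformers: `installed_versions` reports which of the assumed PyPI distributions (torch, transformers, pillow, requests) are installed, and `with_retries` is a hypothetical wrapper that retries a flaky call (such as the image download) with exponential backoff.

```python
import importlib.metadata
import time
from typing import Callable, Dict, Optional, Sequence, TypeVar

T = TypeVar("T")

# PyPI distribution names assumed for this guide; adjust for your environment.
REQUIRED = ("torch", "transformers", "pillow", "requests")


def installed_versions(names: Sequence[str] = REQUIRED) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None if missing."""
    versions: Dict[str, Optional[str]] = {}
    for name in names:
        try:
            versions[name] = importlib.metadata.version(name)
        except importlib.metadata.PackageNotFoundError:
            versions[name] = None
    return versions


def with_retries(fn: Callable[[], T],
                 attempts: int = 3,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn(), retrying on any exception with exponential backoff.

    Waits base_delay, 2 * base_delay, 4 * base_delay, ... between attempts
    and re-raises the last error once all attempts are used up.
    """
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be at least 1")


if __name__ == "__main__":
    for name, version in installed_versions().items():
        print(f"{name}: {version or 'NOT INSTALLED'}")
```

As a hypothetical usage, the image download from the guide could be wrapped as `with_retries(lambda: Image.open(requests.get(url, stream=True, timeout=10).raw))`, so that a brief network hiccup does not abort the whole script.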

For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.

Final Thoughts

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.

Conclusion

With this guide, you should be equipped to start using the Chinese-CLIP model effectively. By following the instructions and understanding the code through the analogy provided, you are now ready to explore the fascinating world of image-text relationships!