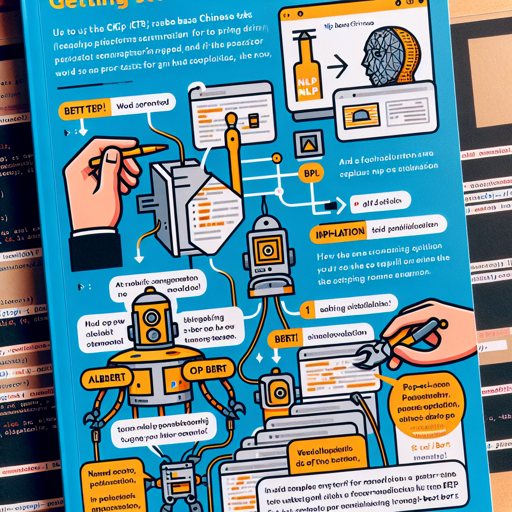

The CKIP BERT Base Chinese project provides traditional Chinese transformers models, including ALBERT, BERT, and GPT2, together with NLP tools for word segmentation, part-of-speech tagging, and named entity recognition. This guide walks you through setting up CKIP BERT for your own projects.

Getting Started

To start using CKIP BERT, first make sure the necessary libraries are installed. You will mainly be working with Hugging Face’s transformers library.

Installation

- Install the transformers package if you haven’t already:

pip install transformers

Usage

When working with CKIP BERT, it’s important to note that you should use BertTokenizerFast rather than AutoTokenizer. Let’s break this down through an analogy:

Imagine you are preparing a delicate Chinese dish. The ingredients (word representation) are important, but so is the technique (tokenization method). Utilizing the right cooking method (like BertTokenizerFast) can significantly enhance the dish’s flavor (the quality of NLP tasks). On the other hand, using tools not suited for your dish could lead to less-than-desirable results.
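The tokenizer rule above can be kept in one place with a small helper. The following is a minimal sketch, not part of the CKIP project itself: the names `TOKENIZER_NAME`, `CKIP_CHECKPOINTS`, `checkpoint_for`, and `load_ckip` are hypothetical. Only the `pos` checkpoint and the `bert-base-chinese` tokenizer name come from this guide's sample; the `ws` and `ner` entries assume the same naming pattern and should be checked against the CKIP model listing before use.

```python
from typing import Dict

# Tokenizer checkpoint paired with the CKIP BERT model in this guide's sample.
TOKENIZER_NAME = "bert-base-chinese"

# Task -> model checkpoint. Only "pos" appears in this guide; "ws" (word
# segmentation) and "ner" are assumed to follow the same naming pattern.
CKIP_CHECKPOINTS: Dict[str, str] = {
    "ws": "ckiplab/bert-base-chinese-ws",
    "pos": "ckiplab/bert-base-chinese-pos",
    "ner": "ckiplab/bert-base-chinese-ner",
}


def checkpoint_for(task: str) -> str:
    """Return the checkpoint name for a task, failing early on typos."""
    try:
        return CKIP_CHECKPOINTS[task]
    except KeyError:
        known = ", ".join(sorted(CKIP_CHECKPOINTS))
        raise ValueError(f"unknown task {task!r}; expected one of: {known}") from None


def load_ckip(task: str):
    """Load the tokenizer and model for a task.

    Always uses BertTokenizerFast rather than AutoTokenizer, as this guide
    recommends. Downloads the weights on first use.
    """
    # Imported lazily so checkpoint_for() works before transformers is installed.
    from transformers import AutoModel, BertTokenizerFast

    tokenizer = BertTokenizerFast.from_pretrained(TOKENIZER_NAME)
    model = AutoModel.from_pretrained(checkpoint_for(task))
    return tokenizer, model
```

Calling `load_ckip("pos")` would return the same tokenizer and model pair as the sample in this section, while a misspelled task name raises a `ValueError` before any download starts.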

Here’s a sample code snippet to illustrate how to use the CKIP BERT models:

from transformers import (
    BertTokenizerFast,
    AutoModel,
)

tokenizer = BertTokenizerFast.from_pretrained("bert-base-chinese")
model = AutoModel.from_pretrained("ckiplab/bert-base-chinese-pos")

Where to Find More Information

For comprehensive usage details and additional information about the CKIP project, visit the project homepage.

Troubleshooting

If you encounter issues while implementing CKIP BERT models or using the NLP tools, here are some troubleshooting ideas:

- Ensure that the transformers library is up to date by running pip install --upgrade transformers.

- Verify that you have a stable internet connection when downloading models.

- Check that the model names are spelled correctly and that the models exist by referring to the official GitHub documentation.

- If you run into memory errors, consider reducing the batch size or using a model that has lower memory requirements.
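Two of the checks above are easy to script. The sketch below uses only the Python standard library; the helper names `installed_version` and `batches` are hypothetical, and the batch size is an arbitrary starting point rather than a recommended value.

```python
from importlib import metadata
from typing import Iterator, List, Optional, Sequence


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def batches(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of at most batch_size items.

    Feeding the model smaller batches is one way to lower peak memory use.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


if __name__ == "__main__":
    version = installed_version("transformers")
    print("transformers version:", version or "not installed")

    # Placeholder sentences; replace with your own input text.
    sentences = ["今天天氣很好。", "我們去公園散步。", "明天可能會下雨。"]
    for batch in batches(sentences, batch_size=2):
        print(len(batch), batch)
```

If a memory error persists, lowering `batch_size` further (down to 1) is a simple first step before switching to a smaller model.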

