Ready to get started with Eric Hartford’s Wizard Vicuna 13B Uncensored model? This guide shows how to download and run it, both in text-generation-webui and from Python. The build covered here accepts long contexts (up to 8K tokens), which makes it a good fit for long prompts and detailed responses.

What You Need to Know

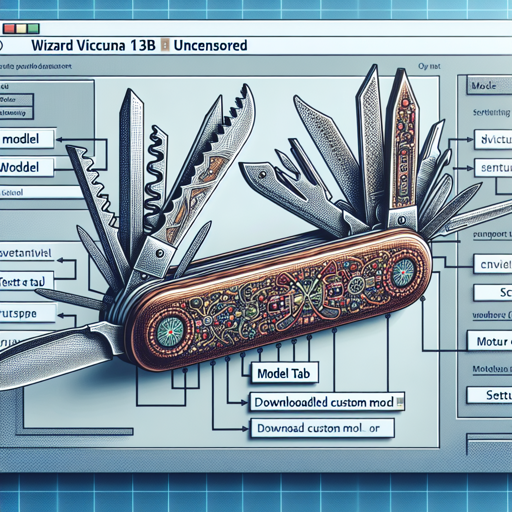

This build stores the model weights in a 4-bit GPTQ format and supports context sizes of up to 8K tokens. It was quantized with GPTQ-for-LLaMa and has been well received in the AI community. In essence, it’s like a Swiss Army knife for text generation, equipped to handle a variety of complex tasks!
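To give a rough sense of what the 4-bit format means in practice, here is a back-of-the-envelope sketch of weight storage at different precisions. It assumes a round figure of 13 billion parameters for a "13B" model, and the function name is hypothetical. Real files are usually somewhat larger, since quantized formats also store extra metadata and some layers may not be quantized.

```python
def approx_weight_bytes(num_params: int, bits_per_weight: int) -> int:
    """Approximate storage for the weights alone, ignoring any metadata."""
    return num_params * bits_per_weight // 8


# Assumption: a round parameter count for a "13B" model.
NUM_PARAMS = 13_000_000_000

for bits in (16, 4):
    gigabytes = approx_weight_bytes(NUM_PARAMS, bits) / 1e9
    print(f"{bits}-bit weights: ~{gigabytes:.1f} GB")
```

Under these assumptions, 4-bit weights take about a quarter of the space of 16-bit weights, which is what lets a 13B model fit on a single consumer GPU.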

Downloading and Setting Up the Model in text-generation-webui

- Ensure that you have the latest version of text-generation-webui.

- Click the Model tab.

- Under Download custom model or LoRA, enter `TheBloke/Wizard-Vicuna-13B-Uncensored-SuperHOT-8K-GPTQ`.

- Click Download. You will see “Done” once it’s complete.

- Untick Autoload the model.

- Refresh the model list by clicking the refresh icon next to Model.

- Select your downloaded model from the dropdown.

- Set the Loader to ExLlama.

- Set max_seq_len to 8192 with compress_pos_emb set to 4, or set max_seq_len to 4096 with compress_pos_emb set to 2.

- Click Save Settings and then Reload.

- Head to the Text Generation tab to begin by entering your prompt!
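The two max_seq_len and compress_pos_emb pairings in the steps above follow a simple ratio: the target context length divided by the base model's native context length. The sketch below assumes a native context of 2048 tokens, which is the context length of the original LLaMA models. The function name is hypothetical and is not part of text-generation-webui, and the sketch only handles whole multiples of the native context.

```python
# Assumption: native context length of the original LLaMA base model.
NATIVE_CONTEXT = 2048


def compress_pos_emb_for(max_seq_len: int, native_context: int = NATIVE_CONTEXT) -> int:
    """Return the positional compression factor for a target context length."""
    if max_seq_len <= 0 or max_seq_len % native_context != 0:
        raise ValueError("this sketch only handles positive multiples of the native context")
    return max_seq_len // native_context


for seq_len in (4096, 8192):
    print(f"max_seq_len={seq_len} -> compress_pos_emb={compress_pos_emb_for(seq_len)}")
```

This reproduces the two pairings from the setup steps: 4096 maps to 2, and 8192 maps to 4.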

Using the Model from Python Code

If you prefer to work in code, you can also run this model from Python. Like a chef gathering ingredients before cooking, you need the right imports and configuration in place before the model will load. The script below relies on the transformers and auto_gptq packages, and it expects a CUDA-capable GPU because it moves the input tensors to the GPU with .cuda().
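Before loading a multi-gigabyte model, it can help to confirm that the required packages are importable. The following is a minimal sketch using only the standard library. The module list is an assumption based on the imports used in this guide (torch is included because the script calls .cuda() on tensors), and the helper name is hypothetical.

```python
import importlib.util

# Assumption: the top-level modules this guide's Python script depends on.
REQUIRED_MODULES = ("torch", "transformers", "auto_gptq")


def missing_modules(names=REQUIRED_MODULES):
    """Return the top-level module names that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


missing = missing_modules()
if missing:
    print("Missing packages:", ", ".join(missing))
else:
    print("All required packages are importable.")
```

The check only confirms that each package can be located. It does not verify versions or GPU support.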

```python
from transformers import AutoTokenizer
from auto_gptq import AutoGPTQForCausalLM

model_name_or_path = "TheBloke/Wizard-Vicuna-13B-Uncensored-SuperHOT-8K-GPTQ"
model_basename = "wizard-vicuna-13b-uncensored-superhot-8k-GPTQ-4bit-128g.no-act.order"

use_triton = False

# Load the tokenizer that ships with the model repository.
tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

# Load the 4-bit GPTQ weights. With quantize_config=None, AutoGPTQ uses
# the quantization settings stored alongside the model.
model = AutoGPTQForCausalLM.from_quantized(
    model_name_or_path,
    model_basename=model_basename,
    use_safetensors=True,
    trust_remote_code=True,
    device_map='auto',
    use_triton=use_triton,
    quantize_config=None,
)

# Raise the sequence length to use the extended 8K context.
model.seqlen = 8192

prompt = "Tell me about AI"
# The user turn and the assistant cue go on separate lines.
prompt_template = f'USER: {prompt}\nASSISTANT:'

# Tokenize the prompt and move the input IDs to the GPU.
input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.cuda()

# temperature only takes effect when sampling is enabled.
output = model.generate(inputs=input_ids, do_sample=True, temperature=0.7, max_new_tokens=512)

print(tokenizer.decode(output[0]))
```

Troubleshooting Tips

While working with the Wizard Vicuna model, you might encounter some common issues. Here are some tips to help you out:

- If the model does not start after downloading, ensure you have the correct dependencies installed.

- If you run out of VRAM, reduce the context length, for example by setting max_seq_len to 4096 and compress_pos_emb to 2 instead of 8192 and 4.

- If you receive errors regarding config.json, verify that the settings reflect the values specified for your model.

- For help and discussions, feel free to join TheBloke’s Discord server.

- For assistance with specific coding problems, seek out collaborations or insights at **[fxis.ai](https://fxis.ai/edu)**.
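To see why a smaller context length helps with VRAM, here is a rough estimate of the memory needed for the attention key/value cache at different context lengths. It is a sketch under stated assumptions: 40 layers and a hidden size of 5120 (the LLaMA 13B architecture), 16-bit cache values, and a single sequence. It ignores the model weights and any loader-specific overhead, so treat the numbers as a lower bound, not a measurement.

```python
def kv_cache_bytes(seq_len: int,
                   n_layers: int = 40,       # assumption: LLaMA 13B layer count
                   hidden_size: int = 5120,  # assumption: LLaMA 13B hidden size
                   bytes_per_value: int = 2  # assumption: 16-bit cache values
                   ) -> int:
    """Estimate key/value cache size for one sequence of seq_len tokens."""
    keys_and_values = 2  # one key tensor and one value tensor per layer
    return keys_and_values * n_layers * hidden_size * bytes_per_value * seq_len


for seq_len in (2048, 4096, 8192):
    gib = kv_cache_bytes(seq_len) / 2**30
    print(f"{seq_len} tokens: ~{gib:.2f} GiB of cache")
```

Under these assumptions, the cache grows linearly with context length: roughly 6.25 GiB at 8192 tokens versus about half that at 4096, on top of the memory used by the weights.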

At **[fxis.ai](https://fxis.ai/edu)**, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.

Final Thoughts

Now that you are armed with the knowledge to successfully utilize Eric Hartford’s Wizard Vicuna 13B Uncensored model, why not dive in? With its robust capabilities, you can explore the vast potential of AI and text generation like never before.

For more insights, updates, or to collaborate on AI development projects, stay connected with **[fxis.ai](https://fxis.ai/edu)**.