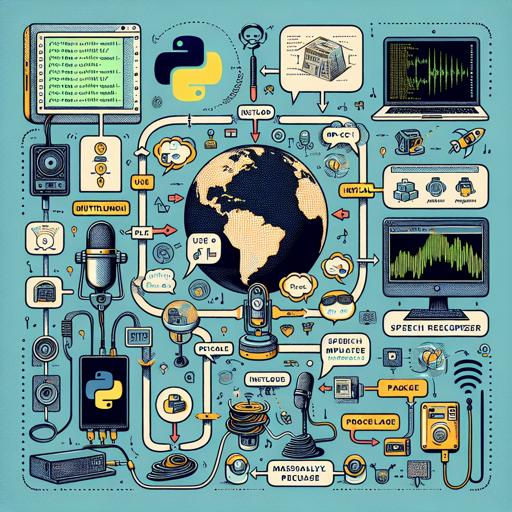

Welcome to this guide to M-CTC-T, a massively multilingual speech recognition model created by Meta AI. It provides step-by-step instructions for transcribing audio with the model, along with some troubleshooting tips to ease your journey.

What Is M-CTC-T?

M-CTC-T is a 1B-parameter transformer encoder designed for speech recognition across many languages. It was trained on the Common Voice and VoxPopuli datasets, and it outputs character-level predictions that are decoded into text, which makes it a useful building block for multilingual transcription in AI-based applications.

Getting Started with M-CTC-T

To start using M-CTC-T for audio transcription, you need a Python environment with the necessary packages. Here’s how to set it up:

Step 1: Install Required Libraries

- Ensure you have Python installed on your machine.

- Install PyTorch, torchaudio, Transformers, and Datasets using pip:

pip install torch torchaudio transformers datasets

Step 2: Load the Model

Next, you will load the M-CTC-T model and its processor:

import torch
import torchaudio
from datasets import load_dataset
from transformers import MCTCTForCTC, MCTCTProcessor

# Load the pretrained checkpoint and its matching processor
model = MCTCTForCTC.from_pretrained('speechbrain/m-ctc-t-large')
processor = MCTCTProcessor.from_pretrained('speechbrain/m-ctc-t-large')

Step 3: Prepare Your Dataset

Load an audio dataset. This example uses a small dummy LibriSpeech validation split:

ds = load_dataset('patrickvonplaten/librispeech_asr_dummy', 'clean', split='validation')

Step 4: Feature Extraction and Transcription

Extract features and transcribe your audio:

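# Convert the raw waveform of the first sample into model input features
input_features = processor(ds[0]['audio']['array'],
                           sampling_rate=ds[0]['audio']['sampling_rate'],
                           return_tensors='pt').input_features

# Run inference without tracking gradients
with torch.no_grad():
    logits = model(input_features).logits

# Pick the most likely token for each frame, then decode the ids to text
predicted_ids = torch.argmax(logits, dim=-1)
transcription = processor.batch_decode(predicted_ids)

Understanding the Code: An Analogy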

Think of using M-CTC-T like cooking a complicated dish. Here’s how:

- Ingredients (Model & Processor): You start by gathering your main ingredients, the model and the processor, just as you need the right ingredients before you can cook.

- Mise en place (Loading the Dataset): Next, you get everything ready before the heat goes on. Loading the dataset is like laying out your mise en place, so the audio is at hand when you need it.

- Cooking (Feature Extraction and Transcribing): Finally, you combine and cook your ingredients following the steps given. Here, the processor turns raw audio into input features, the model turns those features into per-frame predictions, and decoding turns the predictions into the final dish: the transcription!
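To make the final "cooking" step less of a black box, here is a minimal pure-Python sketch of greedy CTC decoding, which is conceptually what the argmax plus `batch_decode` calls in Step 4 amount to: take the best token per frame, collapse consecutive repeats, then drop the blank token. The toy vocabulary, the blank index, and the `greedy_ctc_decode` and `one_hot` helpers are all assumptions made for illustration; the real vocabulary and blank token come from the M-CTC-T processor.

```python
from itertools import groupby

# Hypothetical toy vocabulary; index 0 plays the role of the CTC blank token.
# The real M-CTC-T vocabulary and blank index are defined by its processor.
BLANK_ID = 0
TOY_VOCAB = {1: "h", 2: "e", 3: "l", 4: "o"}


def greedy_ctc_decode(frame_scores, vocab=TOY_VOCAB, blank_id=BLANK_ID):
    """Decode per-frame scores for one utterance with the greedy CTC rule."""
    # 1. Pick the highest-scoring token id in every frame
    #    (the role torch.argmax(logits, dim=-1) plays in Step 4).
    best_ids = [
        max(range(len(scores)), key=scores.__getitem__)
        for scores in frame_scores
    ]
    # 2. Collapse runs of the same id into a single id.
    collapsed = [token_id for token_id, _ in groupby(best_ids)]
    # 3. Drop blanks and map the remaining ids to characters.
    return "".join(vocab[i] for i in collapsed if i != blank_id)


def one_hot(index, size=5):
    """Build a fake score vector whose maximum sits at `index`."""
    return [1.0 if i == index else 0.0 for i in range(size)]


# Frame-level ids: h h _ e l l _ l o  (the blank separates the two l's)
frames = [one_hot(i) for i in [1, 1, 0, 2, 3, 3, 0, 3, 4]]
decoded = greedy_ctc_decode(frames)
print(decoded)  # hello
```

Note how the blank between the two runs of `l` is what lets the decoder keep a genuine double letter instead of merging it away.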

Troubleshooting Tips

If you encounter issues, here are some suggestions:

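- Ensure that the audio is in a form the processor can consume: a waveform array passed together with its sampling rate, as in Step 4. The checkpoint is generally used with 16 kHz audio, so consider resampling files recorded at other rates.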

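- Check that torch, torchaudio, transformers, and datasets are all installed in the active environment. M-CTC-T is in maintenance mode in Transformers, so if the imports fail on a recent release, the Hugging Face documentation suggests installing v4.30.0, the last version that supported the model.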

- Examine the sample audio length; very short or very long files can produce erratic results.
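The checks above can be sketched as a small preflight script that uses only the standard library. The helper names `missing_packages` and `audio_warnings` are hypothetical, the 16 kHz expected rate is an assumption you should confirm against the processor you loaded, and the duration thresholds are arbitrary placeholders rather than limits documented for M-CTC-T.

```python
import importlib.util

# Packages installed in Step 1.
REQUIRED_PACKAGES = ("torch", "torchaudio", "transformers", "datasets")


def missing_packages(names=REQUIRED_PACKAGES):
    """Return the packages from `names` that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def audio_warnings(num_samples, sampling_rate, expected_rate=16_000,
                   min_seconds=0.5, max_seconds=30.0):
    """Return human-readable warnings for one audio clip.

    `expected_rate`, `min_seconds`, and `max_seconds` are illustrative
    assumptions, not values taken from the model's documentation.
    """
    warnings = []
    if sampling_rate != expected_rate:
        warnings.append(
            f"sampling rate is {sampling_rate} Hz, expected {expected_rate} Hz"
        )
    duration = num_samples / sampling_rate
    if duration < min_seconds:
        warnings.append(f"clip is very short ({duration:.2f} s)")
    elif duration > max_seconds:
        warnings.append(f"clip is very long ({duration:.2f} s)")
    return warnings


if __name__ == "__main__":
    print("Missing packages:", missing_packages())
    # A quarter-second clip recorded at 8 kHz triggers both kinds of warning.
    for message in audio_warnings(num_samples=2_000, sampling_rate=8_000):
        print("Warning:", message)
```

With a dataset sample from Step 3, you could call `audio_warnings(len(sample['audio']['array']), sample['audio']['sampling_rate'])` before running the model.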

For further assistance, feel free to open a discussion or pull request in the relevant GitHub repo and tag the contributors.

Conclusion

M-CTC-T opens the door to multilingual speech recognition. With a few straightforward steps, you can transcribe audio files and build the results into a variety of speech and language applications.


References

- Paper

- A huge thanks to Chan Woo Kim for porting the model from Flashlight C++ to PyTorch.