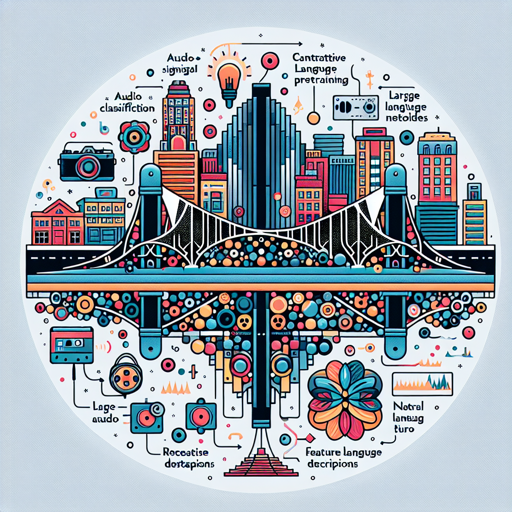

CLAP (Contrastive Language-Audio Pretraining) is a multimodal model that maps audio signals and natural-language text into a shared embedding space. This post walks through the essentials of using it, from zero-shot classification and feature extraction to troubleshooting.

TL;DR

The CLAP model pairs audio with natural-language descriptions and performs well on tasks such as text-to-audio retrieval and audio classification. It is trained on LAION-Audio-630K, a large dataset of audio-text pairs, and the accompanying paper explores feature fusion and keyword-to-caption augmentation.

Model Details

CLAP is trained with contrastive learning: matching audio-text pairs are pulled together in the embedding space while mismatched pairs are pushed apart. The model accepts variable-length audio inputs, and its authors report state-of-the-art results on text-to-audio retrieval and zero-shot audio classification.
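
To make the contrastive objective concrete, here is a minimal pure-Python sketch of a symmetric cross-entropy loss over an audio-text similarity matrix, the general form used by CLIP-style models. The function names, toy embeddings, and temperature value are illustrative assumptions and are not taken from the CLAP codebase.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def cross_entropy_diagonal(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        row_max = max(row)  # subtract the max for numerical stability
        log_sum_exp = row_max + math.log(sum(math.exp(v - row_max) for v in row))
        total += log_sum_exp - row[i]
    return total / len(logits)

def contrastive_loss(audio_embeds, text_embeds, temperature=0.07):
    """Symmetric contrastive loss for a batch of paired audio/text embeddings."""
    audio = [l2_normalize(a) for a in audio_embeds]
    text = [l2_normalize(t) for t in text_embeds]
    # logits[i][j] is the temperature-scaled cosine similarity of audio i and text j
    logits = [[sum(x * y for x, y in zip(a, t)) / temperature for t in text] for a in audio]
    logits_t = [list(col) for col in zip(*logits)]  # text-to-audio direction
    return 0.5 * (cross_entropy_diagonal(logits) + cross_entropy_diagonal(logits_t))

# Toy batch (assumed values): correctly paired embeddings vs. the same texts swapped
matched = contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.1], [0.1, 1.0]])
shuffled = contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[0.1, 1.0], [1.0, 0.1]])
print(matched < shuffled)
```

The loss is low when each audio clip is most similar to its own caption and high when the pairs are mismatched, which is the signal that drives training.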

Usage

To use the CLAP model for zero-shot audio classification or to extract audio and text features, follow the steps below:

Uses

Perform Zero-Shot Audio Classification

With the `pipeline` function from `transformers`, you can classify audio against arbitrary candidate labels without any task-specific training:

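```python
from datasets import load_dataset
from transformers import pipeline

# Load the ESC-50 environmental sound dataset from the Hugging Face Hub
dataset = load_dataset("ashraq/esc50")
# Take the raw waveform of the last training sample
audio = dataset["train"]["audio"][-1]["array"]

# Build a zero-shot classifier backed by the unfused CLAP checkpoint
audio_classifier = pipeline(task="zero-shot-audio-classification", model="laion/clap-htsat-unfused")
# Score the clip against free-text candidate labels
output = audio_classifier(audio, candidate_labels=["Sound of a dog", "Sound of vacuum cleaner"])
print(output)
```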

This snippet loads one audio sample and scores it against each candidate label. The pipeline returns a list of dictionaries, each holding a `label` and its `score`, sorted from most to least likely.
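
The pipeline result is a list of dictionaries with `score` and `label` keys, so post-processing is plain Python. The sketch below picks the top label and rejects low-confidence predictions. The helper name `best_label`, the `min_score` threshold, and the example scores are assumptions made for illustration; they are not real model output.

```python
def best_label(predictions, min_score=0.5):
    """Return the top-scoring label, or None if no score reaches min_score."""
    if not predictions:
        return None
    top = max(predictions, key=lambda p: p["score"])
    return top["label"] if top["score"] >= min_score else None

# Hypothetical result in the pipeline's list-of-dicts format (scores are invented)
example_output = [
    {"score": 0.98, "label": "Sound of a dog"},
    {"score": 0.02, "label": "Sound of vacuum cleaner"},
]
print(best_label(example_output))
```

A threshold like this is useful when none of the candidate labels may apply, since the scores are normalized across the labels you supply.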

Run the Model

You can also obtain audio and text embeddings using `ClapModel`. The examples below show how to run the model on CPU and on GPU:

Run the Model on CPU

```python
from datasets import load_dataset
from transformers import ClapModel, ClapProcessor

# Small dummy LibriSpeech split, handy for quick tests
librispeech_dummy = load_dataset("hf-internal-testing/librispeech_asr_dummy", "clean", split="validation")
audio_sample = librispeech_dummy[0]

model = ClapModel.from_pretrained("laion/clap-htsat-unfused")
processor = ClapProcessor.from_pretrained("laion/clap-htsat-unfused")

# Convert the raw waveform into model inputs as PyTorch tensors
inputs = processor(audios=audio_sample["audio"]["array"], return_tensors="pt")
# Project the audio into the shared audio-text embedding space
audio_embed = model.get_audio_features(**inputs)
```

Run the Model on GPU

```python
from datasets import load_dataset
from transformers import ClapModel, ClapProcessor

# Small dummy LibriSpeech split, handy for quick tests
librispeech_dummy = load_dataset("hf-internal-testing/librispeech_asr_dummy", "clean", split="validation")
audio_sample = librispeech_dummy[0]

# Move the model to the first CUDA device (GPU 0)
model = ClapModel.from_pretrained("laion/clap-htsat-unfused").to(0)
processor = ClapProcessor.from_pretrained("laion/clap-htsat-unfused")

# Inputs must live on the same device as the model
inputs = processor(audios=audio_sample["audio"]["array"], return_tensors="pt").to(0)
audio_embed = model.get_audio_features(**inputs)
```
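
Once you have embeddings, text-to-audio retrieval reduces to ranking audio clips by their cosine similarity to a text embedding. The sketch below shows that ranking step with plain Python lists standing in for embedding tensors. The file names and 3-dimensional vectors are hypothetical; in practice the audio vectors would come from `get_audio_features` and the query vector from the model's text encoder.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def rank_audio(text_embed, audio_embeds):
    """Return (name, similarity) pairs sorted from most to least similar."""
    scored = [(name, cosine_similarity(text_embed, emb)) for name, emb in audio_embeds.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Hypothetical toy embeddings; real CLAP embeddings have many more dimensions
audio_library = {
    "dog_bark.wav": [0.9, 0.1, 0.0],
    "vacuum.wav": [0.0, 0.2, 0.9],
}
query = [1.0, 0.0, 0.1]  # stands in for the embedding of a text query
ranking = rank_audio(query, audio_library)
print(ranking[0][0])
```

For a large audio collection you would typically normalize all embeddings once and use a matrix product instead of a Python loop, but the ranking logic is the same.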

Citation

If you plan to use this model for your research, please consider citing the original paper:

```bibtex
@misc{wu2022large,
  title={Large-scale Contrastive Language-Audio Pretraining with Feature Fusion and Keyword-to-Caption Augmentation},
  author={Yusong Wu and Ke Chen and Tianyu Zhang and Yuchen Hui and Taylor Berg-Kirkpatrick and Shlomo Dubnov},
  journal={arXiv preprint arXiv:2211.06687},
  year={2022},
  doi={10.48550/arxiv.2211.06687},
  url={https://arxiv.org/abs/2211.06687}
}
```

Troubleshooting

When using the CLAP model, you may encounter a few common issues. Here are some tips to help you troubleshoot:

- Performance Issues: Ensure that you have adequate computational resources. If inference on CPU is too slow, move the model and its inputs to a GPU as in the GPU example above.

- Data Loading Errors: Double-check that the dataset name or path is spelled correctly and that you have the necessary permissions to access it.

- Installation Problems: Verify that your `transformers` library is up to date (for example, with `pip install --upgrade transformers`) to avoid compatibility issues.

- Incompatibility Errors: Ensure that your Python environment has all required libraries installed. Consider creating a virtual environment to keep dependencies isolated.
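
A quick way to rule out installation and incompatibility problems is to list which of the required packages are installed and at what version. The sketch below uses only the standard library. The helper names and the default package list are assumptions based on the imports used in this post.

```python
from importlib.metadata import PackageNotFoundError, version

def installed_version(package):
    """Return the installed version string of a package, or None if it is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None

def check_environment(packages=("transformers", "datasets", "torch")):
    """Map each package name to its installed version (None when not installed)."""
    return {name: installed_version(name) for name in packages}

report = check_environment()
for name, found in report.items():
    print(f"{name}: {found or 'NOT INSTALLED'}")
```

Running this inside your virtual environment shows at a glance whether a missing or outdated package is the likely cause of an import error.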
