In the world of natural language processing (NLP), DistilBERT is like a nimble gazelle among heavyweights, offering a faster and lighter approach to understanding text. If you’ve decided to explore the distilbert-base-uncased_proba model, this post walks through what is known about this fine-tuned model, its training hyperparameters, and some troubleshooting tips.

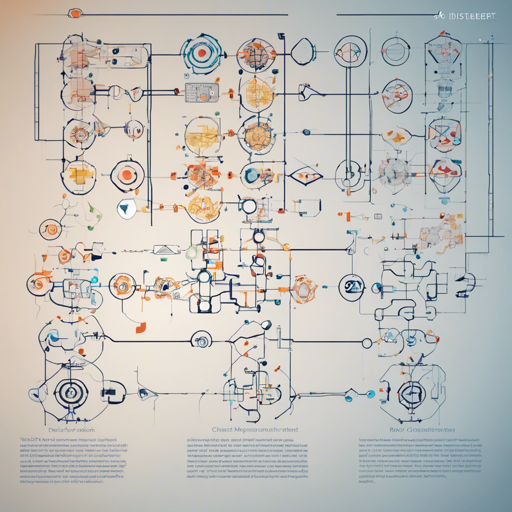

What is DistilBERT?

DistilBERT is a distilled version of BERT (Bidirectional Encoder Representations from Transformers): a smaller model trained to reproduce the behavior of the larger one. According to its authors, it is about 40% smaller and 60% faster than BERT while retaining roughly 97% of its language-understanding performance. Think of it as a classic novel condensed into a gripping novella: the plot is retained, but it is quicker to read.

Model Description

The distilbert-base-uncased_proba model is a fine-tuned DistilBERT model; the dataset used for fine-tuning is not specified. Its intended uses, limitations, and training data have not been documented yet.

Training Procedure

The following hyperparameters were used during training:

- Learning Rate: 2e-05

- Train Batch Size: 8

- Eval Batch Size: 8

- Seed: 42

- Optimizer: Adam with betas=(0.9, 0.999) and epsilon=1e-08

- Learning Rate Scheduler: Linear

- Number of Epochs: 2
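To make the "Linear" scheduler entry concrete, here is a minimal sketch of how the learning rate would evolve under these settings. It is an illustration under assumptions, not the author's training script: the function names are hypothetical, warmup is assumed to be zero because none is reported, gradient accumulation is assumed to be off, and the 1,000-example dataset size is made up since the real dataset is not documented.

```python
# Hyperparameters as reported in the list above.
HYPERPARAMS = {
    "learning_rate": 2e-05,
    "train_batch_size": 8,
    "eval_batch_size": 8,
    "seed": 42,
    "adam_betas": (0.9, 0.999),
    "adam_epsilon": 1e-08,
    "lr_scheduler_type": "linear",
    "num_epochs": 2,
}


def total_training_steps(num_examples: int, batch_size: int, num_epochs: int) -> int:
    """Number of optimizer steps, assuming one step per batch (no gradient accumulation)."""
    steps_per_epoch = -(-num_examples // batch_size)  # ceiling division
    return steps_per_epoch * num_epochs


def linear_lr(step: int, total_steps: int, base_lr: float, warmup_steps: int = 0) -> float:
    """Learning rate at `step`: linear warmup (if any), then linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


if __name__ == "__main__":
    # ASSUMPTION: 1,000 training examples; the real dataset size is not documented.
    steps = total_training_steps(
        1000, HYPERPARAMS["train_batch_size"], HYPERPARAMS["num_epochs"]
    )
    for s in (0, steps // 2, steps):
        print(s, linear_lr(s, steps, HYPERPARAMS["learning_rate"]))
```

Under these assumptions, the rate starts at the full 2e-05, reaches half of that midway through training, and hits zero at the final step. A different dataset size changes the number of steps but not the shape of the schedule.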

Framework Versions

The model was trained with the following library versions:

- Transformers: 4.25.1

- PyTorch: 1.14.0.dev20221202

- Datasets: 2.7.1

- Tokenizers: 0.13.2
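If you want to compare your environment against these versions, a small check with the standard library may help. This is a sketch, not an official tool: `compare_versions` is a hypothetical helper, and the dictionary keys assume the usual PyPI distribution names (`torch` for PyTorch). Note that the listed PyTorch version is a development build, so an exact match may not be available in your environment.

```python
from importlib import metadata

# Versions as listed above; keys are assumed PyPI distribution names.
EXPECTED_VERSIONS = {
    "transformers": "4.25.1",
    "torch": "1.14.0.dev20221202",
    "datasets": "2.7.1",
    "tokenizers": "0.13.2",
}


def compare_versions(expected: dict) -> dict:
    """Map each package name to (installed_version_or_None, expected_version)."""
    report = {}
    for name, wanted in expected.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None  # package is not installed in this environment
        report[name] = (installed, wanted)
    return report


if __name__ == "__main__":
    for name, (installed, wanted) in compare_versions(EXPECTED_VERSIONS).items():
        status = "ok" if installed == wanted else "differs"
        print(f"{name}: installed={installed} expected={wanted} [{status}]")
```

A "differs" result is not necessarily a problem; it is only a starting point when you are tracking down an installation or loading error.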

Troubleshooting Tips

If you encounter any issues while using the distilbert-base-uncased_proba model, here are some troubleshooting ideas:

- Issue: Model performance is not as expected. Ensure that you are using a quality dataset that fits the tasks you’re addressing. A poor dataset can often lead to subpar results.

- Issue: Errors during installation or running the model. Verify that you have the correct versions of the required libraries and tools listed above. Update them if necessary.

- Issue: No outputs generated. Check your input dimensions and ensure that the data being fed into the model meets the required format. Something as trivial as extra whitespace can interfere.
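As a rough illustration of the input check mentioned for the "no outputs" case, the sketch below normalizes whitespace and drops unusable entries before anything is passed to a tokenizer. `clean_inputs` is a hypothetical helper, and whether whitespace actually affects this particular model is not documented; treat it as a cheap sanity step rather than a known fix.

```python
def clean_inputs(texts):
    """Collapse whitespace runs and drop entries that are empty or not strings."""
    cleaned = []
    for text in texts:
        if not isinstance(text, str):
            continue  # skip None, numbers, and other non-text values
        normalized = " ".join(text.split())  # trims ends, collapses inner runs
        if normalized:
            cleaned.append(normalized)
    return cleaned


if __name__ == "__main__":
    raw = ["  hello   world \n", "", "   ", None, "ok"]
    print(clean_inputs(raw))
```

If the cleaned list is shorter than the original, some inputs were empty or malformed, which is worth investigating before looking at the model itself.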

For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.

Final Thoughts

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.