Moderating a conversational system means judging each reply in the context of the dialog it answers. This guide shows how to use the Response Toxicity Classifier, published on Hugging Face as tinkoff-ai/response-toxicity-classifier-base and designed for evaluating Russian conversational messages. We'll go step by step through installing the dependencies, running the model, and troubleshooting common problems.

Getting Started

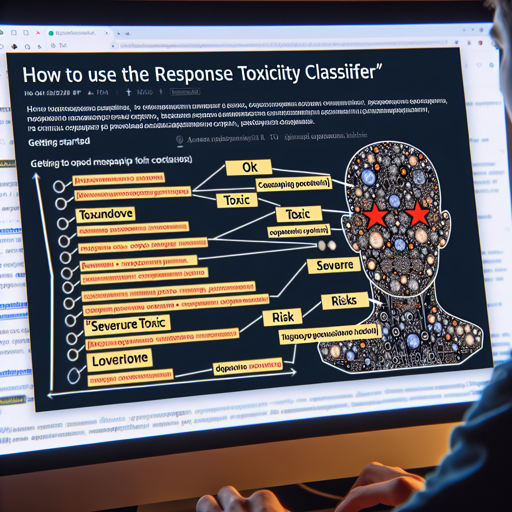

This model has been trained to assign each message to one of four toxicity classes:

- OK: The message is acceptable and not offensive.

- Toxic: The message could be perceived as offensive in context.

- Severe Toxic: The message is highly offensive, designed to provoke discomfort.

- Risks: The message addresses sensitive topics and could harm the speaker’s reputation.
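Once the model returns one probability per class, picking a label comes down to taking the highest-scoring class. The sketch below is a minimal illustration that assumes the class indices follow the order of the list above (OK, Toxic, Severe Toxic, Risks). That ordering is an assumption, so check it against `model.config.id2label` on the loaded model before relying on it. The helper name `top_label` and the example numbers are made up for illustration.

```python
# Assumed label order; verify against model.config.id2label before relying on it.
ASSUMED_LABELS = ["OK", "Toxic", "Severe Toxic", "Risks"]


def top_label(probas, labels=ASSUMED_LABELS):
    """Return (label, probability) for the highest-scoring class."""
    if len(probas) != len(labels):
        raise ValueError("expected one probability per label")
    # Index of the largest probability
    best = max(range(len(probas)), key=lambda i: probas[i])
    return labels[best], float(probas[best])


# Hypothetical probabilities, for illustration only (not real model output).
example = [0.91, 0.05, 0.01, 0.03]
print(top_label(example))
```

The function accepts a plain list or a NumPy array, so it can be fed a probability vector produced by a softmax over the model's logits.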

Setup Requirements

To use this classifier effectively, you need the following:

- Python environment with pip installed

- PyTorch and the Transformers library from Hugging Face

- Basic understanding of Python programming

Installation

First, ensure that you have the necessary packages installed. Use the following command in your terminal:

```bash
pip install torch transformers
```

Using the Model

Now that your environment is set up, let’s implement the Response Toxicity Classifier. We’ll break it down using an analogy. Imagine you are a quality control inspector in a food processing plant. Just like checking ingredients for quality and safety ensures only the best products reach customers, this model inspects messages for their toxicity level before delivering them to the audience.

The following code snippet showcases how to use the model:

```python
import torch
from transformers import AutoTokenizer, AutoModelForSequenceClassification

# Initialize the tokenizer and model
tokenizer = AutoTokenizer.from_pretrained("tinkoff-ai/response-toxicity-classifier-base")
model = AutoModelForSequenceClassification.from_pretrained("tinkoff-ai/response-toxicity-classifier-base")

# Prepare the input: dialog context turns, then the response to classify
inputs = tokenizer(
    ["[CLS]привет[SEP]привет![SEP]как дела?[RESPONSE_TOKEN]норм, у тя как?"],
    max_length=128,
    truncation=True,  # needed for max_length to actually truncate long inputs
    add_special_tokens=False,  # special tokens are already written into the string
    return_tensors="pt",
)

# Get predictions (no gradients are tracked in inference mode)
with torch.inference_mode():
    logits = model(**inputs).logits
    probas = torch.softmax(logits, dim=-1)[0].cpu().detach().numpy()
```

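This code loads the tokenizer and model, encodes a sample Russian dialog, and computes a probability for each toxicity class. In the input string, the context turns are separated by [SEP] and the reply being judged follows [RESPONSE_TOKEN]; add_special_tokens=False is passed because those special tokens are already written into the string. Like a food sample undergoing rigorous testing, the message gets evaluated for its appropriateness before being served to the consumers.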
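Writing the input string by hand is error-prone, so it can help to assemble it from a list of context turns and a response. The sketch below infers the format from the single example above ("[CLS]", turns joined by "[SEP]", then "[RESPONSE_TOKEN]" and the reply). Whether other layouts are accepted is not covered here, and `build_dialog_input` is a hypothetical helper name, not part of any library.

```python
def build_dialog_input(context, response):
    """Join context turns and a response into one string, following the
    layout of the example input: [CLS]turn[SEP]turn...[RESPONSE_TOKEN]reply."""
    return "[CLS]" + "[SEP]".join(context) + "[RESPONSE_TOKEN]" + response


# Rebuild the sample dialog used in the example
text = build_dialog_input(["привет", "привет!", "как дела?"], "норм, у тя как?")
print(text)
```

The resulting string can be passed to the tokenizer in place of the hard-coded one, keeping add_special_tokens=False since the special tokens are already present.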

Troubleshooting

If you encounter issues while running your code, consider the following troubleshooting tips:

- Ensure Proper Installation: Verify that the libraries are correctly installed by running pip list in your terminal.

- Check Model Name: Ensure that you have specified the correct model name when loading the tokenizer and model.

- Input Format: Double-check the format of your input message to ensure it aligns with the expected structure.

- Memory Issues: If you face memory-related errors, try reducing the batch size or closing other applications to free up resources.
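The installation check can also be done from Python instead of scanning the output of pip list. This is a minimal sketch using the standard library's `importlib.metadata`. The helper name `installed_versions` is made up for illustration, and the package names are the two installed earlier in this guide.

```python
from importlib import metadata


def installed_versions(packages=("torch", "transformers")):
    """Map each package name to its installed version, or None if missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


# Report the status of each required package
for name, version in installed_versions().items():
    print(f"{name}: {version or 'NOT INSTALLED'}")
```

If either package is reported as missing, re-run the pip install command in the same Python environment you use to run the script.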

For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.

Conclusion

In this blog, we explored how to use the Response Toxicity Classifier (tinkoff-ai/response-toxicity-classifier-base) to evaluate conversational messages in Russian. Integrating models such as this one helps maintain respectful and safe communication in digital spaces. At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies in artificial intelligence so that our clients benefit from the latest technological innovations.