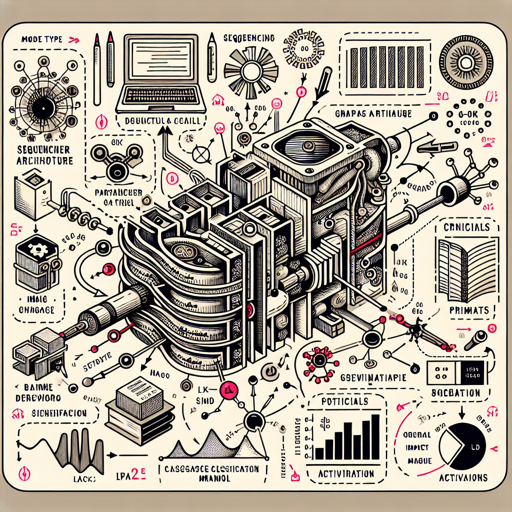

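Sequencer2D is an image classification model that replaces the self-attention layers of Vision Transformer-style architectures with deep bidirectional LSTMs, which scan the image feature map along its rows and columns. The checkpoint used here was trained on the ImageNet-1k dataset. This tutorial walks you through using the model for classification, feature map extraction, and image embeddings. Ready to dive in? Let's get started!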
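To build intuition for "LSTMs on images", here is a toy sketch of how Sequencer2D's core block (called BiLSTM2D in the paper) reads a feature map: every row is treated as a horizontal sequence and every column as a vertical sequence, each is processed in both directions, and the results are concatenated per pixel. This is an illustration under loud assumptions: a simple exponential moving average stands in for a real LSTM cell, the grid has a single channel, and the function names are hypothetical. In the real block, a linear layer then projects the concatenated outputs back to the channel dimension.

```python
# Toy illustration of the BiLSTM2D reading order in Sequencer2D.
# ASSUMPTION: an exponential moving average stands in for a real LSTM cell.
from typing import List

Grid = List[List[float]]  # H x W grid with a single scalar channel


def toy_recurrence(seq: List[float], alpha: float = 0.5) -> List[float]:
    """Stand-in for an LSTM: each output mixes the current input with a running state."""
    state = 0.0
    out = []
    for x in seq:
        state = alpha * x + (1 - alpha) * state
        out.append(state)
    return out


def bidirectional(seq: List[float]) -> List[tuple]:
    """Run the recurrence forward and backward, pairing the two outputs per position."""
    fwd = toy_recurrence(seq)
    bwd = toy_recurrence(seq[::-1])[::-1]
    return list(zip(fwd, bwd))


def bilstm2d_toy(grid: Grid) -> List[List[tuple]]:
    """Process every row (horizontal) and every column (vertical) as a sequence,
    then concatenate both results at each pixel into a 4-tuple."""
    h, w = len(grid), len(grid[0])
    horizontal = [bidirectional(row) for row in grid]
    columns = [[grid[i][j] for i in range(h)] for j in range(w)]
    vertical_by_col = [bidirectional(col) for col in columns]
    # vertical_by_col[j][i] holds the vertical output for pixel (i, j)
    return [[horizontal[i][j] + vertical_by_col[j][i] for j in range(w)] for i in range(h)]


grid = [[1.0, 0.0], [0.0, 1.0]]
out = bilstm2d_toy(grid)
print(len(out), len(out[0]), len(out[0][0]))  # 2 2 4
```

Because each pixel's output depends on its whole row and whole column, information can travel across the image in a single block, which is the role self-attention plays in a Vision Transformer.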

Model Details

- Model Type: Image classification / feature backbone
- Model Stats:
  - Parameters (M): 54.3
  - GMACs: 9.7
  - Activations (M): 22.1
  - Image Size: 224 x 224
- Papers:
  - Sequencer: Deep LSTM for Image Classification: Link
- Dataset: ImageNet-1k
- Original Code: Link
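The model stats translate into rough hardware requirements. A minimal back-of-the-envelope sketch using the model card numbers above, assuming 4 bytes per parameter for fp32 and 2 for fp16, and the common convention that one multiply-accumulate (MAC) counts as two floating-point operations. It covers weights only; activation memory (22.1M values per image) comes on top and grows with batch size.

```python
# Back-of-the-envelope sizing from the model card numbers.
PARAMS_M = 54.3  # parameters, in millions
GMACS = 9.7      # multiply-accumulates per 224x224 image, in billions


def weight_memory_mb(params_m: float, bytes_per_param: int) -> float:
    """Approximate weight storage in MB (1 MB = 10**6 bytes).
    Millions of parameters times bytes per parameter gives megabytes directly."""
    return params_m * bytes_per_param


def approx_gflops(gmacs: float) -> float:
    """Common convention (an assumption, not a measurement): 1 MAC = 2 FLOPs."""
    return 2 * gmacs


print(f"fp32 weights: ~{weight_memory_mb(PARAMS_M, 4):.1f} MB")   # ~217.2 MB
print(f"fp16 weights: ~{weight_memory_mb(PARAMS_M, 2):.1f} MB")   # ~108.6 MB
print(f"compute: ~{approx_gflops(GMACS):.1f} GFLOPs per image")   # ~19.4
```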

Using the Model

The Sequencer2D model can be used in several ways: classifying images, extracting feature maps, or generating embeddings. Each scenario has its own section below.

1. Image Classification

To classify images, you will need the following code:

```python
from urllib.request import urlopen

import timm
import torch
from PIL import Image

# Load an example image from a URL
img = Image.open(urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'))

# Create the pretrained model and switch it to inference mode
model = timm.create_model('sequencer2d_l.in1k', pretrained=True)
model = model.eval()

# Get the model-specific preprocessing (resize, center crop, normalize)
data_config = timm.data.resolve_model_data_config(model)
transforms = timm.data.create_transform(**data_config, is_training=False)

# Model prediction: unsqueeze(0) adds the batch dimension
with torch.no_grad():
    output = model(transforms(img).unsqueeze(0))  # logits, shape (1, 1000)

# Top-5 probabilities (as percentages) and their ImageNet-1k class indices
top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)
```

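Here, we load an image from a URL, preprocess it with the model's own transforms, and obtain the top 5 predicted ImageNet-1k class indices along with their probabilities (expressed as percentages, hence the `* 100`). Think of it like a chef tasting a dish and picking out the five most prominent flavors!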
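The last line of the classification code turns raw logits into probabilities with softmax and keeps the five largest. A dependency-free sketch of the same computation for a single image, using hypothetical toy logits over 6 classes (the real output has 1000). With real tensors, `torch.topk` as used above is the right tool; this only shows what it computes.

```python
import math
from typing import List, Tuple


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def topk_percent(logits: List[float], k: int = 5) -> Tuple[List[float], List[int]]:
    """Return (probabilities in percent, class indices), highest first,
    mirroring torch.topk(output.softmax(dim=1) * 100, k=k) for one image."""
    probs = [p * 100 for p in softmax(logits)]
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    return [probs[i] for i in order], order


# Hypothetical logits for a 6-class toy problem
toy_logits = [2.0, 0.5, 3.0, -1.0, 1.0, 0.0]
probs, indices = topk_percent(toy_logits, k=3)
print(indices)  # [2, 0, 4]
```

Softmax preserves the ordering of the logits, so the top-k indices are the same whether you rank logits or probabilities; softmax is only needed to report calibrated-looking percentages.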

2. Feature Map Extraction

To extract feature maps from the model, use this code:

```python
from urllib.request import urlopen

import timm
import torch
from PIL import Image

# Load an example image from a URL
img = Image.open(urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'))

# Create the model with features_only=True to return intermediate feature maps
model = timm.create_model('sequencer2d_l.in1k', pretrained=True, features_only=True)
model = model.eval()

# Get the model-specific preprocessing
data_config = timm.data.resolve_model_data_config(model)
transforms = timm.data.create_transform(**data_config, is_training=False)

# Feature map output: a list of tensors from different stages
with torch.no_grad():
    output = model(transforms(img).unsqueeze(0))

for o in output:
    print(o.shape)
```

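Here, `features_only=True` makes the model return a list of intermediate feature maps taken from different stages of the network, instead of class scores. These are the fundamental “ingredients” the classifier works from; each printed shape shows the number of channels and the spatial resolution at that depth of the network.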
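To interpret the printed shapes, it helps to know how much each stage downsamples the image. A minimal sketch that computes each stage's side length, assuming every stage starts with a non-overlapping patch embedding that shrinks the grid by its patch size. The per-stage patch sizes `(7, 2, 1, 1)` are an assumption taken from the Sequencer2D paper's configuration; look for the resulting side lengths among the dimensions of each printed shape to confirm them.

```python
from typing import List, Sequence


def stage_resolutions(image_size: int, patch_sizes: Sequence[int]) -> List[int]:
    """Side length of each stage's feature map, assuming each stage divides
    the spatial grid by its (non-overlapping) patch size."""
    sizes = []
    side = image_size
    for p in patch_sizes:
        side //= p
        sizes.append(side)
    return sizes


# ASSUMPTION: per-stage patch sizes (7, 2, 1, 1) for Sequencer2D
print(stage_resolutions(224, (7, 2, 1, 1)))  # [32, 16, 16, 16]
```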

3. Image Embeddings

Lastly, you can extract image embeddings using this code:

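```python
from urllib.request import urlopen

import timm
import torch
from PIL import Image

# Load an example image from a URL
img = Image.open(urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'))

# Create the model with num_classes=0 to remove the classifier head
model = timm.create_model('sequencer2d_l.in1k', pretrained=True, num_classes=0)
model = model.eval()

# Get the model-specific preprocessing
data_config = timm.data.resolve_model_data_config(model)
transforms = timm.data.create_transform(**data_config, is_training=False)

# Generate image embeddings
with torch.no_grad():
    # Option 1: with num_classes=0, the forward pass returns pooled
    # embeddings of shape (batch_size, num_features)
    output = model(transforms(img).unsqueeze(0))

    # Option 2 (equivalent, and works without setting num_classes=0):
    # get the unpooled backbone features, then pool them without the classifier
    output = model.forward_features(transforms(img).unsqueeze(0))
    output = model.forward_head(output, pre_logits=True)  # (batch_size, num_features)
```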

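Image embeddings distill an image into a compact representation, similar to a summary that captures the essence of a full story. Because the classifier is removed, the model outputs one pooled feature vector per image instead of class scores, which you can use for similarity search, clustering, or as input to another model.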
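A common use of embeddings is measuring how similar two images are. A minimal sketch of cosine similarity in plain Python, with small toy vectors standing in for real embeddings (real Sequencer2D embeddings have `num_features` entries).

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors:
    1.0 = same direction, 0.0 = orthogonal, -1.0 = opposite."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cannot compare a zero vector")
    return dot / (norm_a * norm_b)


# Toy vectors standing in for real embeddings
print(cosine_similarity([3.0, 4.0], [3.0, 4.0]))  # 1.0
print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))  # 0.0
```

With the real tensors from the code above, `torch.nn.functional.cosine_similarity(emb_a, emb_b, dim=1)` computes the same quantity for each pair in a batch.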

Troubleshooting

If you encounter any issues while using the Sequencer2D model, consider the following troubleshooting tips:

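- Model Loading Issues: Make sure a recent version of the timm library is installed; helpers used above, such as `timm.data.resolve_model_data_config`, may not exist in older releases. Upgrade with `pip install --upgrade timm`.

- Image Download Problems: Confirm that the image URL is correct and that your internet connection is stable. Try opening the URL directly in a browser to check.

- Shape Mismatch Errors: The model expects a batched tensor of shape (batch_size, 3, 224, 224). Always pass images through the model-specific `transforms`, which resize and center-crop them to 224×224, and keep the `.unsqueeze(0)` call that adds the batch dimension for a single image.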
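Shape errors usually disappear once the eval transforms are used, because they produce a 224×224 crop from any input size. A pure-Python sketch of that geometry, assuming a resize-shorter-side-then-center-crop pipeline with `crop_pct = 0.875` (a common default; the actual value for this model is in `data_config['crop_pct']`). The function name is hypothetical and it only computes sizes; it does not touch pixels.

```python
import math
from typing import Tuple


def eval_resize_and_crop(width: int, height: int, img_size: int = 224,
                         crop_pct: float = 0.875
                         ) -> Tuple[Tuple[int, int], Tuple[int, int, int, int]]:
    """Approximate geometry of a resize-then-center-crop eval pipeline.

    1. Resize so the shorter side equals floor(img_size / crop_pct),
       keeping the aspect ratio.
    2. Center-crop an img_size x img_size square.
    Returns ((resized_w, resized_h), (left, top, right, bottom)).
    """
    scale_size = math.floor(img_size / crop_pct)
    if width <= height:
        new_w, new_h = scale_size, int(scale_size * height / width)
    else:
        new_w, new_h = int(scale_size * width / height), scale_size
    left = int(round((new_w - img_size) / 2.0))
    top = int(round((new_h - img_size) / 2.0))
    return (new_w, new_h), (left, top, left + img_size, top + img_size)


print(eval_resize_and_crop(640, 480))  # ((341, 256), (58, 16, 282, 240))
```

Whatever the original resolution, the crop box is always `img_size` pixels on each side, which is why feeding raw, untransformed images is the usual cause of shape mismatches.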


Conclusion

At fxis.ai, we believe that advancements such as Sequencer2D are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.