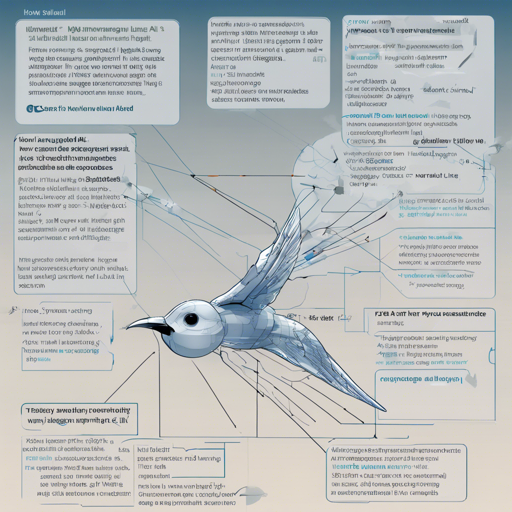

Swallow-MS-7b-v0.1 is a large language model for text generation, with a particular focus on Japanese language data. This guide walks through installing the dependencies, loading the model, formatting a chat-style prompt, and generating a response.

Getting Started with Swallow-MS-7b-v0.1

To harness the potential of the Swallow-MS-7b-v0.1 model, follow these simple steps:

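- Installation: Install the required dependencies by running the following command in your terminal. The command assumes a requirements.txt that lists the packages the code below imports (torch and transformers), plus accelerate, which device_map="auto" depends on.

```shell
pip install -r requirements.txt
```

- Model Initialization: Import the libraries, then load the model and its tokenizer. Note that the code loads the instruction-tuned checkpoint, Swallow-MS-7b-instruct-v0.1, which is the variant intended for the chat-style messages used in the next step.

```python
import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

model_name = "tokyotech-llm/Swallow-MS-7b-instruct-v0.1"
# Load the weights in bfloat16 and let device_map="auto" place them on the available device(s)
model = AutoModelForCausalLM.from_pretrained(model_name, torch_dtype=torch.bfloat16, device_map="auto")
tokenizer = AutoTokenizer.from_pretrained(model_name)
```

- Preparing Input: Describe the conversation as a list of messages, then let the tokenizer apply the model's chat template. The content strings are left empty here as placeholders for your own prompts.

```python
messages = [
    {"role": "system", "content": ""},  # your system prompt goes here
    {"role": "user", "content": ""}     # your user message goes here
]
# Render the messages with the chat template and tokenize them into input IDs
encodeds = tokenizer.apply_chat_template(messages, return_tensors="pt")
# Move the input IDs to the device the model was loaded on
model_inputs = encodeds.to(model.device)
```

- Generating Output: Generate up to 128 new tokens with sampling enabled, then decode and print the result.

```python
generated_ids = model.generate(model_inputs, max_new_tokens=128, do_sample=True)
decoded = tokenizer.batch_decode(generated_ids)
print(decoded[0])
```

Understanding the Code: An Analogy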

Think of using the Swallow-MS-7b-v0.1 model like preparing a special recipe in a kitchen:

- Gathering Ingredients (Installation): Just as you need the right ingredients for a dish, you must ensure all dependencies are installed. This is your foundation.

- Preparing Your Kitchen (Model Initialization): Just like setting up your kitchen with tools and utensils, you import necessary libraries and initialize the model.

- Mixing Ingredients (Preparing Input): To make your dish appealing, you blend your ingredients meticulously. Similarly, structuring your input in the proper format is crucial for optimal performance.

- Cooking (Generating Output): Finally, after mixing, you cook your dish. The model generates an output from your structured input, and reading the decoded result is like tasting the finished meal.
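
The last three steps of the analogy can also be grouped into small reusable functions. The following is a minimal sketch rather than an official API: the function names `build_messages`, `load_model`, and `generate_reply` are hypothetical helpers introduced here for illustration, and the calls inside them mirror the ones used earlier in this guide.

```python
def build_messages(system_prompt, user_prompt):
    """Mixing ingredients: one system message followed by one user message."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


def load_model(model_name="tokyotech-llm/Swallow-MS-7b-instruct-v0.1"):
    """Preparing the kitchen: load the model and tokenizer once and reuse them."""
    # Imported lazily so the other helpers work without the heavy dependencies.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model = AutoModelForCausalLM.from_pretrained(
        model_name, torch_dtype=torch.bfloat16, device_map="auto"
    )
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    return model, tokenizer


def generate_reply(model, tokenizer, messages, max_new_tokens=128):
    """Cooking: apply the chat template, sample a continuation, and decode it."""
    encodeds = tokenizer.apply_chat_template(messages, return_tensors="pt")
    # Keep the inputs on the same device as the model.
    model_inputs = encodeds.to(model.device)
    generated_ids = model.generate(
        model_inputs, max_new_tokens=max_new_tokens, do_sample=True
    )
    # The decoded string contains the prompt followed by the generated text.
    return tokenizer.batch_decode(generated_ids)[0]
```

A typical call would be `model, tokenizer = load_model()` followed by `generate_reply(model, tokenizer, build_messages(system_text, user_text))`, where `system_text` and `user_text` are your own prompts. Loading the model once and passing it in avoids reloading the weights for every prompt.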

Troubleshooting Tips

If you encounter any issues while using the model, consider the following troubleshooting ideas:

- Ensure that your Python environment has all the required packages updated and installed correctly.

- Check your code for syntax errors, especially in the input formatting. Each entry in messages must be a dictionary with a "role" key and a "content" key, because the chat template relies on that structure.

- If you notice that the generated output is less than satisfactory, revisit your input messages and refine them for clarity.
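
The first two checks above can be automated before the model is ever loaded. This is a minimal sketch using only the Python standard library. The helper names `installed_versions` and `validate_messages` are hypothetical, and the set of allowed roles is an assumption: the guide itself only uses "system" and "user", and "assistant" is included here for multi-turn conversations.

```python
from importlib import metadata

# Assumption: roles accepted by the chat template. Only "system" and "user"
# appear in this guide; "assistant" is added for multi-turn conversations.
ALLOWED_ROLES = {"system", "user", "assistant"}


def installed_versions(packages):
    """Map each package name to its installed version, or None if it is missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


def validate_messages(messages):
    """Return a list of problems found in a chat-style message list (empty if none)."""
    if not isinstance(messages, list) or not messages:
        return ["messages must be a non-empty list"]
    problems = []
    for i, msg in enumerate(messages):
        if not isinstance(msg, dict):
            problems.append(f"message {i} is not a dict")
            continue
        if msg.get("role") not in ALLOWED_ROLES:
            problems.append(f"message {i} has an unexpected role")
        content = msg.get("content")
        if not isinstance(content, str) or not content.strip():
            problems.append(f"message {i} has empty content")
    return problems
```

For example, `installed_versions(["torch", "transformers", "accelerate"])` shows at a glance which of the packages is missing, and `validate_messages(messages)` flags messages whose content was left empty.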


Conclusion

By following this guide, you can load the Swallow-MS-7b-v0.1 model, format a chat-style prompt for it, and generate text from that prompt. The model may receive further updates, so check back for revised usage recommendations.
