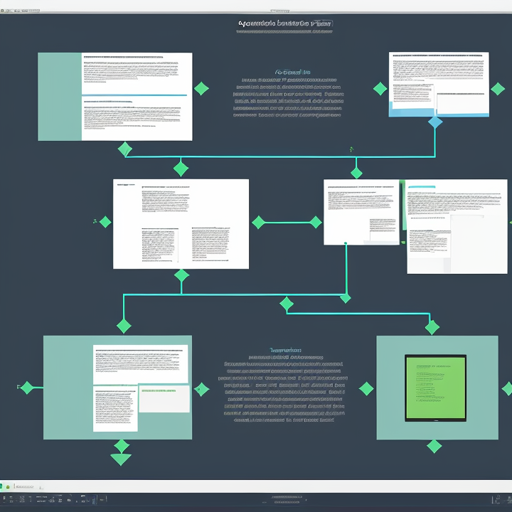

Parsing and understanding documents is a core task in AI and machine learning. The layoutlmv2-base-uncased-finetuned-docvqa model, fine-tuned on an undisclosed dataset, specializes in this area. In this guide, we will walk through its features, training procedure, and how to use it for your document question-answering needs.

Model Overview

The layoutlmv2-base-uncased-finetuned-docvqa model is a fine-tuned version of [microsoft/layoutlmv2-base-uncased](https://huggingface.co/microsoft/layoutlmv2-base-uncased), tailored specifically for Document Visual Question Answering (DocVQA). It reports a final training loss of 1.1940.
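As a minimal sketch of inference, assuming the checkpoint is published on the Hugging Face Hub and that `transformers` is installed along with the LayoutLMv2 dependencies (`detectron2`, `torchvision`, `pytesseract` and the Tesseract OCR binary), the `document-question-answering` pipeline can load it. The `MODEL_ID` below is a placeholder, since this guide does not name the hosting account, and the helper name `answer_document_question` is hypothetical.

```python
from typing import Any, Dict

# Placeholder: replace <hub-user> with the account that actually hosts the checkpoint.
MODEL_ID = "<hub-user>/layoutlmv2-base-uncased-finetuned-docvqa"


def answer_document_question(image_path: str, question: str,
                             model_id: str = MODEL_ID) -> Dict[str, Any]:
    """Return the top-scoring answer dict (keys such as 'answer' and 'score')."""
    # Imported lazily so this module loads even without the heavy dependencies.
    from transformers import pipeline

    doc_qa = pipeline("document-question-answering", model=model_id)
    result = doc_qa(image=image_path, question=question)
    # The pipeline returns candidate answers sorted by score, best first.
    return result[0] if isinstance(result, list) else result
```

With a hypothetical scanned invoice saved as `invoice.png`, a call such as `answer_document_question("invoice.png", "What is the invoice number?")` would return a dictionary whose `answer` field holds the extracted text.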

How to Train the Model

Training a model is akin to preparing for a marathon. It’s not just about running; it involves careful planning and execution. Let’s break down the training steps and hyperparameters involved so you can understand how to set your model up for success.

Training Procedure

- Learning Rate: 5e-05 – think of this as the pace at which you increase your training intensity.

- Batch Sizes:

  - Train Batch Size: 8

  - Eval Batch Size: 8

- Seed: 250500 – this is your unique starting point for reproducibility.

- Optimizer: Adam, with betas set at (0.9, 0.999) and epsilon at 1e-08 – like trusty running shoes, this keeps each update stable.

- Learning Rate Scheduler: Linear – the learning rate decreases linearly as training progresses, like a built-in pacer that prevents burnout.

- Epochs: 2 – the total laps around the track for training.
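The hyperparameters above could be expressed roughly as follows. This is a sketch that assumes training used the Hugging Face `Trainer` on a single device, so the batch sizes map to the `per_device_*` arguments. The `output_dir` value and the helper names are hypothetical, and the no-warmup linear schedule is an assumption because no warmup setting is listed.

```python
# Values copied from the list above; key names follow transformers.TrainingArguments.
HYPERPARAMETERS = {
    "learning_rate": 5e-5,
    "per_device_train_batch_size": 8,   # assumes a single device
    "per_device_eval_batch_size": 8,
    "seed": 250500,
    "adam_beta1": 0.9,
    "adam_beta2": 0.999,
    "adam_epsilon": 1e-8,
    "lr_scheduler_type": "linear",
    "num_train_epochs": 2,
}


def build_training_arguments(output_dir: str = "layoutlmv2-docvqa-output"):
    """Create TrainingArguments from the values above (output_dir is hypothetical)."""
    from transformers import TrainingArguments  # lazy import: heavy dependency
    return TrainingArguments(output_dir=output_dir, **HYPERPARAMETERS)


def linear_lr(step: int, total_steps: int, base_lr: float = 5e-5) -> float:
    """Learning rate under linear decay to zero, assuming no warmup steps."""
    remaining = max(0, total_steps - step)
    return base_lr * remaining / total_steps
```

For example, halfway through a hypothetical run, `linear_lr(500, 1000)` gives half the base rate, which is the pacing effect the linear scheduler provides.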

Model Performance

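Training and validation loss were logged at intervals during training, as shown below. Validation loss does not fall steadily from row to row, so it is worth comparing the logged checkpoints rather than assuming the last one is the best.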

| Epoch | Training Loss | Validation Loss | Step |
|---|---|---|---|
| 1 | 1.463 | 1.627 | 1000 |
| 2 | 0.9447 | 1.364 | 2000 |
| 3 | 0.7725 | 1.06 | 3000 |
| 4 | 0.4382 | 1.33 | 5000 |
| 5 | 0.4515 | 1.59 | 6000 |
| 6 | 1.1860 | 1.86 | 7000 |
| 7 | 1.1940 | – | – |
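One way to read the table is to pick the row with the lowest validation loss. The sketch below copies the table values as they appear above (with `None` for the cells shown as a dash). The function name `best_by_validation` is hypothetical.

```python
from typing import List, Optional, Tuple

# (row, training_loss, validation_loss, step), copied from the table above.
# None marks the cells that the table shows as a dash.
Row = Tuple[int, float, Optional[float], Optional[int]]

ROWS: List[Row] = [
    (1, 1.463, 1.627, 1000),
    (2, 0.9447, 1.364, 2000),
    (3, 0.7725, 1.06, 3000),
    (4, 0.4382, 1.33, 5000),
    (5, 0.4515, 1.59, 6000),
    (6, 1.1860, 1.86, 7000),
    (7, 1.1940, None, None),
]


def best_by_validation(rows: List[Row]) -> Row:
    """Return the row with the lowest validation loss, skipping rows without one."""
    scored = [row for row in rows if row[2] is not None]
    return min(scored, key=lambda row: row[2])


best = best_by_validation(ROWS)
print(f"Lowest validation loss {best[2]} at step {best[3]}")
```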

Troubleshooting Tips

If you encounter issues while using or training the model, consider the following troubleshooting steps:

- Loss Not Improving: Check your learning rate and consider adjusting it. A rate that is too high or too low can stall learning.

- Memory Errors: Reduce batch sizes if you run into out-of-memory issues during training.

- Inconsistent Results: Ensure that you have set a random seed for consistent reproducibility.
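Two of these tips can be sketched in code. The helpers below are hypothetical names: `seed_everything` seeds the random number generators that are available (the `transformers.set_seed` function does a similar job), and `accumulation_steps` computes how many gradient-accumulation steps keep the effective batch size at 8 when the per-device batch is reduced to fit in memory. The result can be passed as `gradient_accumulation_steps` in `TrainingArguments`.

```python
import random


def seed_everything(seed: int = 250500) -> None:
    """Seed Python's RNG, plus NumPy and PyTorch when they are installed."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass
    try:
        import torch
        torch.manual_seed(seed)
        if torch.cuda.is_available():
            torch.cuda.manual_seed_all(seed)
    except ImportError:
        pass


def accumulation_steps(target_batch: int, per_device_batch: int) -> int:
    """Accumulation steps needed so the effective batch size equals target_batch."""
    if per_device_batch <= 0 or target_batch % per_device_batch != 0:
        raise ValueError("target_batch must be a positive multiple of per_device_batch")
    return target_batch // per_device_batch
```

For instance, dropping the per-device batch from 8 to 2 after an out-of-memory error would call for `accumulation_steps(8, 2)` accumulation steps to keep the same effective batch size.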

Conclusion

By following the steps outlined in this guide, you can apply the layoutlmv2-base-uncased-finetuned-docvqa model to your document processing tasks. Whether you are developing chatbots for customer service or enhancing document retrieval, this model can help streamline your workflow.
