The RoBERTa-Base-Wechsel-Swahili model is an English RoBERTa-base model transferred to Swahili with WECHSEL, a cross-lingual transfer method that makes it easier to apply strong pretrained language models to Swahili. In this article, we walk you through using the model, look at how it performs, and share troubleshooting tips along the way.

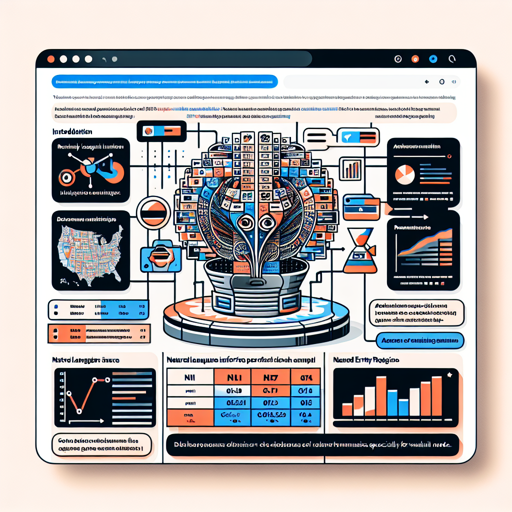

Performance Overview

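This model was built with WECHSEL, a method that transfers a pretrained English language model to a new language by replacing its tokenizer with one trained on the target language and initializing the new subword embeddings from semantically similar English subword embeddings. Here is how the resulting Swahili models compare with other models: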

RoBERTa Performance

| Model | NLI Score | NER Score | Avg Score |
|---|---|---|---|
| roberta-base-wechsel-swahili | 75.05 | 87.39 | 81.22 |
| xlm-roberta-base | 69.18 | 87.37 | 78.28 |
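The Avg Score column appears to be the plain mean of the NLI and NER scores. The short check below recomputes it from the values in the RoBERTa table; it is an illustration only, not part of the original evaluation code.

```python
# NLI and NER scores copied from the RoBERTa Performance table.
scores = {
    "roberta-base-wechsel-swahili": {"NLI": 75.05, "NER": 87.39},
    "xlm-roberta-base": {"NLI": 69.18, "NER": 87.37},
}

# Average Score = arithmetic mean of the two task scores.
averages = {name: (s["NLI"] + s["NER"]) / 2 for name, s in scores.items()}

for name, avg in averages.items():
    print(f"{name}: avg = {avg:.2f}")
```

Most of the average gap comes from NLI, where the WECHSEL model leads by almost six points; the NER scores are nearly identical.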

GPT-2 Performance

| Model | PPL (lower is better) |
|---|---|
| gpt2-wechsel-swahili | 10.14 |
| gpt2 (retrained from scratch) | 10.58 |
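Perplexity (PPL) is the exponential of the average negative log-likelihood a model assigns to each token, so a lower value means the model predicts the text better. The minimal sketch below shows the formula; the helper name `perplexity` and the token probabilities are made up for illustration and are not taken from the evaluation.

```python
import math


def perplexity(token_log_probs: list[float]) -> float:
    """Perplexity = exp(-(1/N) * sum of natural-log token probabilities)."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    avg_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(avg_nll)


# Hypothetical probabilities a model assigned to four tokens.
example = [math.log(0.1), math.log(0.2), math.log(0.05), math.log(0.1)]
print(round(perplexity(example), 2))  # prints 10.0
```

One way to read the table: a PPL of about 10 means the model is, on average, roughly as uncertain as if it were choosing uniformly among 10 tokens at each step.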

Understanding the Model with an Analogy

Imagine you are building a library. Each language has its own section, and you want a new Swahili section to benefit from books that so far exist only in the English section. Rewriting every book from scratch would be expensive, so instead, for each new Swahili title, you look up the English books on the most similar topics and use their contents as a starting point. WECHSEL does the same for language models: rather than training a Swahili model from scratch, it initializes the Swahili model's subword embeddings from the most similar subword embeddings of the pretrained English model. The knowledge stored in large, expensive English models carries over, and the remaining training on Swahili text becomes more efficient.
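To make the analogy concrete, here is a minimal NumPy sketch of the core initialization idea. It assumes that source (English) and target (Swahili) subwords already have static embeddings aligned in one shared space. The helper name `wechsel_style_init`, the toy random inputs, and the defaults `k=10` and `temperature=0.1` are illustrative assumptions; this is not the WECHSEL library's API, and the real method also covers how the aligned static embeddings are obtained.

```python
import numpy as np


def wechsel_style_init(
    target_static: np.ndarray,     # (n_target, d_static) aligned static embeddings of target subwords
    source_static: np.ndarray,     # (n_source, d_static) aligned static embeddings of source subwords
    source_model_emb: np.ndarray,  # (n_source, d_model) input embeddings of the pretrained source model
    k: int = 10,                   # assumed number of nearest source neighbours
    temperature: float = 0.1,      # assumed softmax temperature
) -> np.ndarray:
    """Hypothetical helper: initialize each target subword embedding as a
    softmax-weighted average of its k most similar source subword embeddings."""
    # Cosine similarity between every target and every source subword.
    t = target_static / np.linalg.norm(target_static, axis=1, keepdims=True)
    s = source_static / np.linalg.norm(source_static, axis=1, keepdims=True)
    sims = t @ s.T  # (n_target, n_source)

    # Indices and similarities of the k nearest source subwords per target subword.
    top_k = np.argsort(-sims, axis=1)[:, :k]
    top_sims = np.take_along_axis(sims, top_k, axis=1)

    # Softmax over the k similarities, sharpened by the temperature.
    logits = top_sims / temperature
    logits -= logits.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(logits)
    weights /= weights.sum(axis=1, keepdims=True)

    # Weighted average of the source model's embeddings of those neighbours.
    return np.einsum("tk,tkd->td", weights, source_model_emb[top_k])


# Toy usage with random data (hypothetical sizes).
rng = np.random.default_rng(0)
src_static = rng.normal(size=(50, 8))   # 50 "English" subwords
tgt_static = rng.normal(size=(20, 8))   # 20 "Swahili" subwords
src_emb = rng.normal(size=(50, 16))     # source model embedding matrix
new_emb = wechsel_style_init(tgt_static, src_static, src_emb, k=5)
print(new_emb.shape)  # prints (20, 16)
```

A small temperature concentrates the weight on the closest neighbours; with `k=1`, each new embedding is simply a copy of its single nearest English embedding.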

Steps to Use the RoBERTa-Base-Wechsel-Swahili Model

- Begin by visiting the WECHSEL code repository on GitHub (https://github.com/CPJKU/wechsel).

- Clone the repository using git:

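git clone https://github.com/CPJKU/wechsel
cd wechsel

- Load the pretrained model and its tokenizer in Python with the Hugging Face transformers library:

from transformers import AutoModelForSequenceClassification, AutoTokenizer

# Full model ID on the Hugging Face Hub
model_name = "benjamin/roberta-base-wechsel-swahili"

tokenizer = AutoTokenizer.from_pretrained(model_name)

# The sequence-classification head is newly initialized; fine-tune it on your task before use.
model = AutoModelForSequenceClassification.from_pretrained(model_name)

Troubleshooting Tips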

If you encounter any issues while using the model, here are some tips to help you out:

- Error loading model: Ensure the model name is correct and that you are connected to the internet.

- Dependency issues: Double-check the list of required libraries in the README file and ensure they are installed properly.

- Performance not as expected: The checkpoint is a pretrained language model, not a task-specific one. Fine-tune it on labeled data for your task (for example, NLI or NER) before judging its results.


Conclusion

The RoBERTa-Base-Wechsel-Swahili model shows how WECHSEL can bring strong language models to Swahili without training from scratch. By reusing what English models have already learned, it outperforms xlm-roberta-base on average across the NLI and NER results above, which makes it a practical starting point for Swahili NLP applications. At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team continually explores new methods to push the envelope in artificial intelligence, so that our clients benefit from the latest technological innovations.