The rise of artificial intelligence has opened up new ways to process spoken language. One notable example is Facebook’s Wav2Vec2 XLS-R, a multilingual model pretrained for speech processing. This guide walks you through the essentials of Wav2Vec2 XLS-R, its capabilities, and how to troubleshoot common issues when using it.

Understanding XLS-R: A Brief Overview

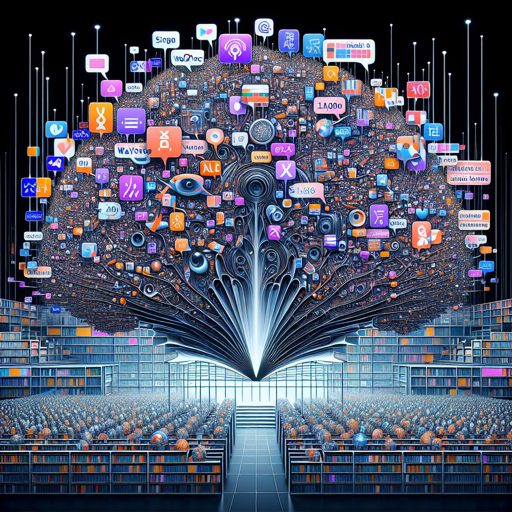

XLS-R (XLM-R for Speech) is a large-scale multilingual speech model pretrained on 436,000 hours of unlabeled speech in 128 languages; this version has **1 billion parameters**. Think of it as a library that holds voices instead of books, spanning a huge range of speakers and languages. By learning from this audio alone, without any transcriptions, the model builds general-purpose speech representations that can later be adapted to specific speech tasks.

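XLS-R is pretrained with the self-supervised wav2vec 2.0 objective: parts of the latent speech representation are masked, and the model must identify the true quantized representation of each masked time step among a set of distractors. Because this requires no labels, XLS-R can learn from vast amounts of raw audio. Once fine-tuned, it serves applications such as Automatic Speech Recognition (ASR), speech translation, and audio classification.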
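The contrastive part of the wav2vec 2.0 objective can be illustrated for a single masked time step. In this task, the model scores its context vector against the true quantized latent and against distractors drawn from other masked steps, then minimizes the negative log-softmax score of the true one. The NumPy sketch below is a simplified illustration, not the fairseq or Transformers implementation. It assumes the context vector `c_t`, the target `q_t`, and the distractors are already computed. It also omits the codebook diversity term that the full objective adds. The temperature of 0.1 is a hypothetical choice.

```python
import numpy as np


def cosine_similarity(vector: np.ndarray, rows: np.ndarray) -> np.ndarray:
    """Cosine similarity between `vector` (shape (d,)) and each row of `rows` (shape (n, d))."""
    vector = vector / np.linalg.norm(vector)
    rows = rows / np.linalg.norm(rows, axis=1, keepdims=True)
    return rows @ vector


def contrastive_loss(c_t: np.ndarray, q_t: np.ndarray,
                     distractors: np.ndarray, temperature: float = 0.1) -> float:
    """Negative log-softmax score of the true quantized latent among all candidates.

    c_t:         context-network output at a masked time step, shape (d,)
    q_t:         true quantized latent for that step, shape (d,)
    distractors: K quantized latents from other masked steps, shape (K, d)
    """
    # Candidate set: the true target at index 0, followed by the distractors.
    candidates = np.vstack([q_t[np.newaxis, :], distractors])
    logits = cosine_similarity(c_t, candidates) / temperature
    # Numerically stable log-softmax over the candidates.
    logits = logits - logits.max()
    log_probs = logits - np.log(np.exp(logits).sum())
    return float(-log_probs[0])


rng = np.random.default_rng(0)
q_t = rng.normal(size=16)
distractors = rng.normal(size=(10, 16))
# A context vector close to the target should receive a lower loss than an unrelated one.
print(contrastive_loss(q_t + 0.1 * rng.normal(size=16), q_t, distractors))
print(contrastive_loss(rng.normal(size=16), q_t, distractors))
```

Minimizing this loss pushes the context vector toward its own target and away from the distractors. This is how the model learns useful representations without transcriptions.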

Setting Up Wav2Vec2 XLS-R

To make use of the XLS-R model, follow these steps:

- Ensure Your Input is Compatible: Your speech input must be sampled at 16 kHz, the rate the model was pretrained on. Audio at other sampling rates will degrade results.

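- Fine-Tune the Model: The pretrained checkpoint has no task-specific head, so it should be fine-tuned on labeled data for your downstream task, such as ASR, translation, or classification. For details, see the blog post on fine-tuning XLS-R.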

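- Choose the Right Model Version: XLS-R is available in several sizes (300 million, 1 billion, and 2 billion parameters). Larger versions generally perform better but require more memory and compute, so select the one that fits your resources.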
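The 16 kHz requirement can be handled with a small preprocessing step before the audio reaches the model. Below is a minimal sketch that assumes the audio is already loaded as a NumPy array along with its sampling rate; loading from disk, for example with soundfile or torchaudio, is left out. The sketch downmixes to mono and resamples to 16 kHz with SciPy's polyphase resampler. It then applies zero-mean, unit-variance normalization, which Transformers' `Wav2Vec2FeatureExtractor` performs when `do_normalize=True`. The function name `prepare_for_xlsr` is hypothetical.

```python
from math import gcd

import numpy as np
from scipy.signal import resample_poly

TARGET_SR = 16_000  # XLS-R expects 16 kHz input


def prepare_for_xlsr(waveform: np.ndarray, sample_rate: int) -> np.ndarray:
    """Return a mono, 16 kHz, zero-mean/unit-variance float32 waveform.

    waveform: shape (num_samples,) or (num_samples, num_channels)
    """
    audio = np.asarray(waveform, dtype=np.float64)
    # Downmix multi-channel audio by averaging the channels.
    if audio.ndim == 2:
        audio = audio.mean(axis=1)
    # Polyphase resampling by the reduced ratio TARGET_SR / sample_rate.
    if sample_rate != TARGET_SR:
        g = gcd(TARGET_SR, sample_rate)
        audio = resample_poly(audio, TARGET_SR // g, sample_rate // g)
    # Zero-mean, unit-variance normalization; the epsilon avoids division by zero.
    audio = (audio - audio.mean()) / np.sqrt(audio.var() + 1e-7)
    return audio.astype(np.float32)


# Example: one second of a 440 Hz stereo tone recorded at 44.1 kHz.
sr = 44_100
t = np.arange(sr) / sr
stereo = np.stack([np.sin(2 * np.pi * 440 * t)] * 2, axis=1)
prepared = prepare_for_xlsr(stereo, sr)
print(prepared.shape, prepared.dtype)
```

Polyphase resampling applies an anti-aliasing filter, which matters when downsampling from common rates such as 44.1 or 48 kHz.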

Using XLS-R in Various Applications

After fine-tuning, XLS-R can be used for a variety of applications, such as:

- Automatic Speech Recognition (ASR)

- Speech translation across languages

- Classification of audio data
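For ASR, a common recipe fine-tunes XLS-R with a CTC head, as in Transformers' `Wav2Vec2ForCTC`. The CTC head emits one score vector per audio frame, and a decoding step turns those scores into text. The plain-Python sketch below shows greedy (best-path) CTC decoding. The vocabulary, the blank index 0, and the `|` word delimiter are hypothetical placeholders, not any particular checkpoint's tokenizer. With Transformers, you would normally take the argmax of the logits and call `Wav2Vec2Processor.batch_decode` instead.

```python
from typing import Sequence

# Hypothetical character vocabulary: index 0 is the CTC blank,
# and "|" marks word boundaries.
VOCAB = ("<blank>", "|", "a", "c", "t")
BLANK_ID = 0


def greedy_ctc_decode(frame_scores: Sequence[Sequence[float]],
                      vocab: Sequence[str] = VOCAB,
                      blank_id: int = BLANK_ID) -> str:
    """Best-path CTC decoding: argmax per frame, merge repeats, drop blanks."""
    # 1. Pick the highest-scoring token for every frame.
    best_path = [max(range(len(scores)), key=lambda i: scores[i])
                 for scores in frame_scores]
    # 2. Collapse consecutive duplicates, then drop blank tokens.
    #    A blank between two identical tokens keeps them separate.
    tokens = []
    previous = None
    for token_id in best_path:
        if token_id != previous and token_id != blank_id:
            tokens.append(vocab[token_id])
        previous = token_id
    # 3. Turn word delimiters into spaces.
    return "".join(tokens).replace("|", " ").strip()


def one_hot(index: int, size: int = len(VOCAB)) -> list:
    """Frame scores that put all weight on a single token (for the demo)."""
    return [1.0 if j == index else 0.0 for j in range(size)]


# Frames spelling: c c <blank> a t t <blank>
frames = [one_hot(i) for i in [3, 3, 0, 2, 4, 4, 0]]
print(greedy_ctc_decode(frames))
```

Greedy decoding is the simplest option. Beam search with a language model can improve accuracy, at the cost of extra decoding time.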

For a hands-on starting point, refer to the accompanying Google Colab notebook.

Troubleshooting Common Issues

While using Wav2Vec2 XLS-R, you might encounter some challenges. Here are some tips to resolve potential issues:

- Audio Quality: Make sure your input audio is clean. Background noise, clipping, or very low volume can lead to poor recognition results.

- Incorrect Sampling Rate: Double-check that your audio is sampled at 16 kHz. If it is not, resample it before passing it to the model.

- Model Not Performing as Expected: If the model isn’t producing the results you expect, revisit your fine-tuning setup. Make sure your training data is varied and representative of the speakers, accents, and recording conditions the model will encounter in practice.

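The audio-quality and sampling-rate checks from the troubleshooting list can be automated before audio reaches the model. The NumPy sketch below assumes a mono float waveform scaled to [-1.0, 1.0]. The function name `audio_warnings` and its thresholds are hypothetical heuristics chosen for illustration, not values from the XLS-R documentation. Samples at or above 0.999 in magnitude count as clipped, more than 0.1% clipped samples triggers a warning, and an RMS level below -50 dBFS is treated as near-silent.

```python
from typing import List

import numpy as np

EXPECTED_SR = 16_000          # XLS-R expects 16 kHz input
CLIP_LEVEL = 0.999            # hypothetical: |sample| at or above this counts as clipped
MAX_CLIPPED_FRACTION = 1e-3   # hypothetical tolerance for clipped samples
MIN_RMS_DBFS = -50.0          # hypothetical: quieter than this is treated as near-silent


def audio_warnings(waveform: np.ndarray, sample_rate: int) -> List[str]:
    """Return human-readable warnings for common input problems.

    Assumes a mono float waveform scaled to [-1.0, 1.0].
    """
    issues = []
    if sample_rate != EXPECTED_SR:
        issues.append(f"sampling rate is {sample_rate} Hz; resample to {EXPECTED_SR} Hz")
    audio = np.asarray(waveform, dtype=np.float64)
    if audio.size == 0:
        return issues + ["waveform is empty"]
    # Fraction of samples at (or beyond) full scale.
    clipped = float(np.mean(np.abs(audio) >= CLIP_LEVEL))
    if clipped > MAX_CLIPPED_FRACTION:
        issues.append(f"{clipped:.1%} of samples are clipped")
    # RMS level in dB relative to full scale; the floor avoids log10(0).
    rms = float(np.sqrt(np.mean(audio ** 2)))
    rms_dbfs = 20 * np.log10(max(rms, 1e-12))
    if rms_dbfs < MIN_RMS_DBFS:
        issues.append(f"signal is very quiet ({rms_dbfs:.1f} dBFS RMS)")
    return issues


rng = np.random.default_rng(0)
speech_like = 0.1 * rng.standard_normal(16_000)
print(audio_warnings(speech_like, 16_000))
print(audio_warnings(speech_like, 8_000))
```

Such checks catch only mechanical problems. Background noise or mismatched recording conditions still require listening to samples or evaluating on held-out data.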

The Future of Multilingual Speech Processing

As technology advances, models like XLS-R are paving the way for more inclusive multilingual speech applications. Because they are pretrained on large amounts of unlabeled audio in many languages, such models can improve speech recognition and translation across a wide range of languages, including those with little labeled data.

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.