LLaMA is a family of auto-regressive language models developed by the FAIR team at Meta AI, and one of the more notable recent advances in natural language processing. In this post, we’ll guide you through understanding and using LLaMA effectively.

Understanding LLaMA Model Architecture

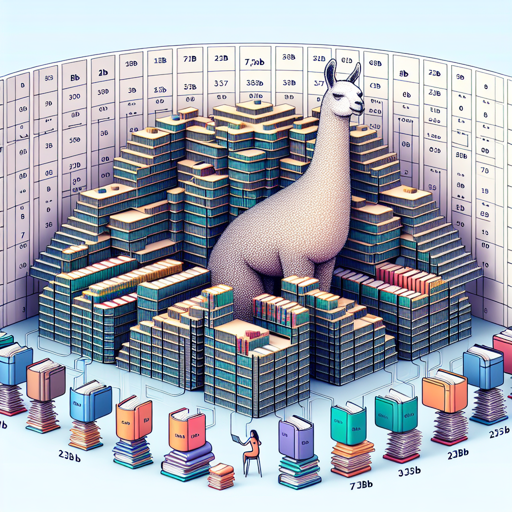

At its core, LLaMA uses a transformer architecture. Picture a massive library: the books are the model’s parameters, the aisles they are shelved in are its layers, and the librarian who retrieves information for you is the model as a whole. LLaMA comes in four sizes (7B, 13B, 33B, and 65B parameters), which in this analogy is the number of books in the library.
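To make the size labels concrete, the sketch below estimates the parameter count of each variant from its width and depth. The hyperparameters are not given in this post; they are taken from the LLaMA paper and the publicly released model configurations, and the helper name count_parameters is made up for illustration. Treat it as a back-of-the-envelope estimate, not an official accounting.

```python
# Rough parameter count for a LLaMA-style decoder-only transformer.
# Assumptions (not stated in this post): a 32,000-token vocabulary, untied
# input/output embeddings, no bias terms, and a gated feed-forward block
# with three weight matrices per layer.

VOCAB_SIZE = 32_000

# name: (hidden size, feed-forward size, number of layers)
VARIANTS = {
    "7B": (4096, 11008, 32),
    "13B": (5120, 13824, 40),
    "33B": (6656, 17920, 60),
    "65B": (8192, 22016, 80),
}


def count_parameters(hidden, ffn, layers, vocab=VOCAB_SIZE):
    """Estimate the total number of weights in the model."""
    attention = 4 * hidden * hidden    # query, key, value, output projections
    feed_forward = 3 * hidden * ffn    # gate, up, and down projections
    norms = 2 * hidden                 # two normalization weight vectors
    per_layer = attention + feed_forward + norms
    embeddings = 2 * vocab * hidden    # input embedding plus output head
    final_norm = hidden
    return layers * per_layer + embeddings + final_norm


for name, (hidden, ffn, layers) in VARIANTS.items():
    total = count_parameters(hidden, ffn, layers)
    print(f"{name}: about {total / 1e9:.1f} billion parameters")
```

Under these assumptions the estimates land close to the nominal sizes, which shows that almost all of the "books" sit in the attention and feed-forward matrices of the layers rather than in the embeddings.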

Getting Started With LLaMA

- Installation: Ensure you have the Hugging Face Transformers library installed (for example, with pip install transformers).

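- Loading the Model: Load the model through the Hugging Face Transformers API with the following code, where path/to/model is a placeholder for your own checkpoint:

# Load the pretrained weights from a local directory or Hub model ID
from transformers import LlamaForCausalLM

model = LlamaForCausalLM.from_pretrained("path/to/model")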
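Once the model loads, a typical next step is to tokenize a prompt and generate a continuation. The sketch below assumes a checkpoint already converted to the Hugging Face format at a placeholder path, and that PyTorch and Transformers are installed. The helper names check_model_dir and generate_text are hypothetical, and the heavy imports sit inside the function so the directory check can run on its own.

```python
from pathlib import Path


def check_model_dir(model_dir):
    """Return a description of the problem, or None if the directory looks usable."""
    path = Path(model_dir)
    if not path.is_dir():
        return f"{path} is not a directory"
    # Assumption: a converted Hugging Face checkpoint contains config.json.
    if not (path / "config.json").is_file():
        return f"{path} has no config.json; is it a converted checkpoint?"
    return None


def generate_text(model_dir, prompt, max_new_tokens=50):
    """Load a local LLaMA checkpoint and return the prompt plus its continuation."""
    problem = check_model_dir(model_dir)
    if problem:
        raise FileNotFoundError(problem)

    # Imported lazily so the check above works without the heavy dependencies.
    from transformers import AutoTokenizer, LlamaForCausalLM

    tokenizer = AutoTokenizer.from_pretrained(model_dir)
    model = LlamaForCausalLM.from_pretrained(model_dir)

    inputs = tokenizer(prompt, return_tensors="pt")
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)


# Example call (needs a real checkpoint, so it is left commented out):
# print(generate_text("path/to/model", "The capital of France is"))
```

Checking the directory first turns a long loading traceback into a short, readable error when the path is wrong.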

Intended Use Cases

LLaMA is primarily intended for research purposes. You can explore applications such as:

- Question Answering

- Natural Language Understanding

- Reading Comprehension

However, it’s crucial to note that LLaMA should not be used directly in downstream applications without an informed risk assessment, as it can produce biased or otherwise harmful content.
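Because LLaMA is a base model and not an instruction-tuned one, research tasks such as question answering are usually framed as text completion. The sketch below builds a few-shot prompt for that purpose. The build_qa_prompt helper, the "Question:/Answer:" layout, and the example pairs are illustrative assumptions, not a format prescribed by the model's authors.

```python
def build_qa_prompt(examples, question):
    """Format solved examples followed by an open question for the model to complete."""
    blocks = [f"Question: {q}\nAnswer: {a}" for q, a in examples]
    # The final block ends at "Answer:" so the model continues with its answer.
    blocks.append(f"Question: {question}\nAnswer:")
    return "\n\n".join(blocks)


# Made-up demonstration pairs, for illustration only.
examples = [
    ("What is the capital of France?", "Paris"),
    ("How many legs does a spider have?", "Eight"),
]
prompt = build_qa_prompt(examples, "What gas do plants absorb from the air?")
print(prompt)
```

The resulting string can be passed to the tokenizer and model like any other prompt; the solved examples give the model a pattern to continue.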

Troubleshooting Common Issues

While working with LLaMA, you may encounter several issues. Here are some troubleshooting steps:

- Issue: Model Fails to Load

Solution: Check that the path points to the correct model directory and that you are loading the intended model size. Verify that your installed Transformers version is recent enough to include LLaMA support.

- Issue: Biased Outputs

Solution: Refine your prompts and preprocess your data to mitigate biases. Consider applying the mitigations outlined in the ethical considerations section of the LLaMA model card.

- Issue: Slow Performance

Solution: Consider reducing the batch size or using a smaller model variant.
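A quick way to judge whether a smaller variant is needed is to estimate how much memory the weights alone occupy. The sketch below multiplies the parameter count by the bytes per parameter. The helper name is hypothetical, the parameter counts are the nominal sizes from the model names, and activations and the key-value cache are ignored, so real usage will be higher.

```python
# Storage cost of one parameter in common numeric formats.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_memory_gib(n_params, dtype="float16"):
    """Memory needed to hold the weights only, in GiB."""
    return n_params * BYTES_PER_PARAM[dtype] / 2**30


# Nominal sizes taken from the variant names (assumption: exact counts differ slightly).
for name, n_params in [("7B", 7e9), ("13B", 13e9), ("33B", 33e9), ("65B", 65e9)]:
    print(f"{name}: ~{weight_memory_gib(n_params):.0f} GiB in float16")
```

If the estimate for a variant already exceeds the memory of your device, reducing the batch size alone will not help, and a smaller variant or a lower-precision format is the more realistic option.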


Understanding Model Performance

The effectiveness of LLaMA can vary based on several factors:

- Language Used: Although LLaMA’s training data includes multiple languages, most of it is English, so the model generally performs better in English than in other languages.

- Evaluation Metrics: The model’s accuracy is measured on standard benchmark datasets such as BoolQ, PIQA, HellaSwag, and MMLU.
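For a yes/no benchmark such as BoolQ, accuracy is simply the fraction of questions answered correctly. A minimal sketch follows, using made-up predictions and labels (not real benchmark results) and a hypothetical helper named accuracy.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the reference labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("cannot score an empty evaluation set")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


# Toy data for illustration only; these are not BoolQ results.
predictions = [True, False, True, True]
labels = [True, True, True, False]
print(f"accuracy = {accuracy(predictions, labels):.2f}")
```

In a real evaluation, the predictions would come from parsing the model's generated answers, and that parsing step can itself affect the reported score.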

Final Thoughts

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.

By harnessing the potential of LLaMA while being aware of its limitations, you’re on the right path to advancing your research and understanding in natural language processing. Happy exploring!