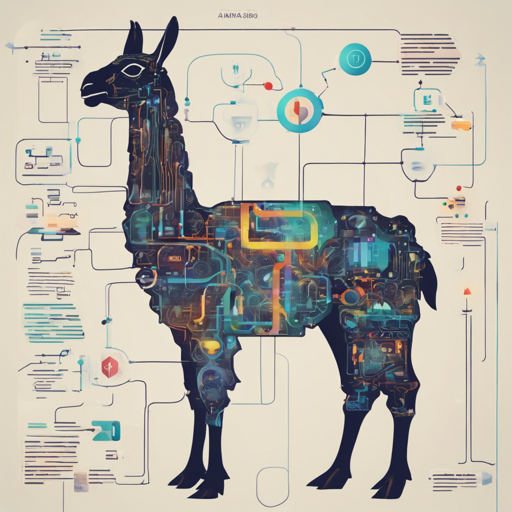

In this blog post, we look at Llama-3-8B-Instruct-80K-QLoRA-Merged, a version of the Llama-3-8B-Instruct model whose context length has been extended to 80,000 tokens using QLoRA. We cover how to set up and run this text generation model, summarize its reported performance, and offer some troubleshooting tips for a smooth implementation.

Introduction to Llama-3-8B-Instruct

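This model is designed for long-context generation tasks. Llama-3-8B-Instruct natively supports an 8K-token context. This variant extends it to 80K tokens through QLoRA fine-tuning. The "Merged" in its name indicates that the trained adapter weights have been folded back into the base model, so it loads like any standard checkpoint. Training is efficient: the full run took about 8 hours on a single 8xA800 (80GB) machine, and the resulting model performs well across a range of long-context benchmarks.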
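The "QLoRA-Merged" part of the name can be made concrete with a small example. LoRA-style fine-tuning, which QLoRA builds on, trains a low-rank update `B @ A` for selected weight matrices. Merging folds that update back into the base weight as `W + (alpha / r) * B @ A`. The following is a toy, pure-Python sketch of that general formula, assuming made-up matrices and hyperparameters. It is not the authors' merge script, and it ignores QLoRA's 4-bit quantization of the base weights.

```python
# Toy illustration of the generic LoRA merge: W_merged = W + (alpha / r) * (B @ A).
# All matrices, the rank r, and alpha are hypothetical values chosen for readability.


def matmul(x, y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def merge_lora(w, a, b, alpha, r):
    """Return W + (alpha / r) * (B @ A), i.e. the adapter folded into the base weight."""
    delta = matmul(b, a)  # (d_out x r) @ (r x d_in) -> d_out x d_in, same shape as W
    scale = alpha / r
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


# A 2x3 base weight with a rank-1 adapter.
W = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0]]
A = [[1.0, 2.0, 3.0]]  # r x d_in  (1 x 3)
B = [[0.5], [1.0]]     # d_out x r (2 x 1)

merged = merge_lora(W, A, B, alpha=2.0, r=1)
print(merged)  # [[2.0, 2.0, 3.0], [2.0, 5.0, 6.0]]
```

Because the merged matrix has the same shape as the original, a merged checkpoint runs like the base model, with no separate adapter computation at inference time.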

How to Set Up and Use the Model

Follow these steps to set up the Llama-3-8B-Instruct-80K-QLoRA-Merged model on your machine:

- Prerequisites: Ensure you have the necessary Python environment with the required libraries installed. You will need:

- torch==2.2.2

- flash_attn==2.5.6

- transformers==4.39.3

- Load the Model: Use the following code to import and instantiate the model.

```python
import json  # used later to load the long-context example file

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "namespace-Pt/Llama-3-8B-Instruct-80K-QLoRA-Merged"
torch_dtype = torch.bfloat16
device_map = "auto"  # requires the accelerate package

tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    torch_dtype=torch_dtype,
    device_map=device_map,
    attn_implementation="flash_attention_2",  # needs flash_attn and a supported GPU
).eval()
```

- Input and Output Handling: You can now generate text with both short and long contexts. The code below sends a short prompt and then a long document to the model and prints the results.

```python
with torch.no_grad():
    # Short context: a single chat turn
    messages = [{"role": "user", "content": "Tell me about yourself."}]
    inputs = tokenizer.apply_chat_template(
        messages, tokenize=True, add_generation_prompt=True,
        return_tensors="pt", return_dict=True,
    ).to(model.device)
    outputs = model.generate(**inputs, max_new_tokens=50)
    prompt_len = inputs["input_ids"].shape[1]
    print(f"Input Length: {prompt_len}")
    # generate() returns prompt + completion, so decode only the new tokens
    print(f"Output: {tokenizer.decode(outputs[0][prompt_len:], skip_special_tokens=True)}")

    # Long context: feed a whole document loaded from a JSON file
    with open("data/narrativeqa.json", encoding="utf-8") as f:
        example = json.load(f)
    messages = [{"role": "user", "content": example["context"]}]
    inputs = tokenizer.apply_chat_template(
        messages, tokenize=True, add_generation_prompt=True,
        return_tensors="pt", return_dict=True,
    ).to(model.device)
    # Greedy decoding; top_p=1 and temperature=1 are neutral values that
    # override any sampling defaults in the checkpoint's generation config
    outputs = model.generate(**inputs, do_sample=False, top_p=1, temperature=1, max_new_tokens=20)
    prompt_len = inputs["input_ids"].shape[1]
    print(f"Input Length: {prompt_len}")
    print(f"Prediction: {tokenizer.decode(outputs[0][prompt_len:], skip_special_tokens=True)}")
```
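Before running the model code, it can help to confirm that the installed packages match the pinned versions from the prerequisites. The following is a small sketch using the standard library's `importlib.metadata`. The `REQUIRED` table mirrors the versions listed above, and the helper name `check_versions` is hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version

# Pinned versions from the prerequisites list.
REQUIRED = {
    "torch": "2.2.2",
    "flash_attn": "2.5.6",
    "transformers": "4.39.3",
}


def check_versions(required, lookup=version):
    """Return {package: (expected, found)} for each package that does not match.

    `found` is None if the package is not installed. `lookup` defaults to
    importlib.metadata.version and can be swapped out for testing.
    """
    mismatches = {}
    for name, expected in required.items():
        try:
            # Drop a local build tag such as "+cu121" before comparing.
            found = lookup(name).split("+")[0]
        except PackageNotFoundError:
            found = None
        if found != expected:
            mismatches[name] = (expected, found)
    return mismatches


if __name__ == "__main__":
    problems = check_versions(REQUIRED)
    if not problems:
        print("All pinned versions found.")
    for name, (expected, found) in problems.items():
        print(f"{name}: expected {expected}, found {found}")
```

Stripping the local tag matters mainly for PyTorch, whose CUDA builds often report versions such as `2.2.2+cu121`.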

Performance Evaluation

The Llama-3-8B-Instruct-80K-QLoRA-Merged model was evaluated on several long- and short-context benchmarks:

- Needle in a Haystack: Performed well at retrieving the hidden fact across the full 80K-token context.

- LongBench: Scored well on tasks including Single-Doc QA and Multi-Doc QA.

- MMLU Benchmark: Showed competitive zero-shot performance across subjects.
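The Needle in a Haystack test hides a short fact (the "needle") at some depth inside a long stretch of filler text and asks the model to retrieve it. Repeating this across context lengths and depths produces a retrieval map. The following is a minimal sketch of how such a prompt might be built and scored, assuming sentence-level filler. The function names, filler text, and substring-based scoring are hypothetical illustrations, not the evaluation harness used for this model.

```python
def build_haystack(filler_sentences, needle, depth, n_sentences):
    """Insert `needle` at relative `depth` (0.0 = start, 1.0 = end) of a filler passage."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    # Cycle through the filler until we have n_sentences of padding.
    body = [filler_sentences[i % len(filler_sentences)] for i in range(n_sentences)]
    position = round(depth * n_sentences)
    body.insert(position, needle)
    return " ".join(body)


def make_prompt(haystack, question):
    """Append the retrieval question after the long passage."""
    return f"{haystack}\n\nBased only on the text above, answer: {question}"


def score(answer, expected):
    """Crude check: does the model's answer contain the expected fact?"""
    return expected.lower() in answer.lower()


haystack = build_haystack(
    ["The sky was grey.", "Birds flew south."],
    needle="The secret code is 4217.",
    depth=0.5,
    n_sentences=4,
)
print(make_prompt(haystack, "What is the secret code?"))
```

A real run would measure depth in tokens rather than sentences, pass the prompt through the chat template and `model.generate` as in the setup code, and sweep many context lengths up to 80K.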

Troubleshooting Tips

If you encounter issues while working with this model, consider the following troubleshooting tips:

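- Model Length Warning: You may see a warning that the input exceeds a predefined maximum length (for example, the tokenizer's `model_max_length`). Because this model was extended to an 80K-token context, the warning can usually be ignored for inputs within that limit.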

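- GPU Placement: Make sure the model is actually running on your GPU(s). Passing `device_map="auto"` to `from_pretrained` (which requires the accelerate package) places the weights on the available devices automatically.

- Memory Errors: If you run into out-of-memory errors, consider reducing the context length or batch size.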
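When memory errors appear at long context lengths, the key/value (KV) cache is often a large part of the cost, because it grows linearly with both the number of tokens and the batch size. The following back-of-envelope estimate assumes Llama-3-8B's architecture (32 layers, 8 key/value heads via grouped-query attention, head dimension 128) and bfloat16 storage. It ignores activations, temporary buffers, and framework overhead, so treat it as a rough lower bound rather than a measurement.

```python
# Back-of-envelope KV-cache size for a Llama-3-8B-style model.
# Defaults assume 32 layers, 8 KV heads, head_dim 128, and bfloat16 (2 bytes per value).


def kv_cache_bytes(n_tokens, n_layers=32, n_kv_heads=8, head_dim=128,
                   bytes_per_value=2, batch_size=1):
    """Bytes needed to cache keys and values for `n_tokens` tokens.

    The leading factor 2 accounts for storing both a key and a value vector
    per head, per layer, per token.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_tokens * batch_size


GIB = 1024 ** 3

print(kv_cache_bytes(1))                     # 131072 bytes (128 KiB) per token
print(round(kv_cache_bytes(80_000) / GIB, 2))  # 9.77 GiB for an 80K-token prompt
```

At 80K tokens the cache alone is roughly 9.8 GiB (about 10.5 GB), on top of roughly 16 GB for the bfloat16 weights of an 8B-parameter model. Doubling the batch size doubles the cache, which is why shortening the input or reducing the batch size helps.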


Conclusion

At fxis.ai, we believe that advances such as efficient long-context extension are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations.