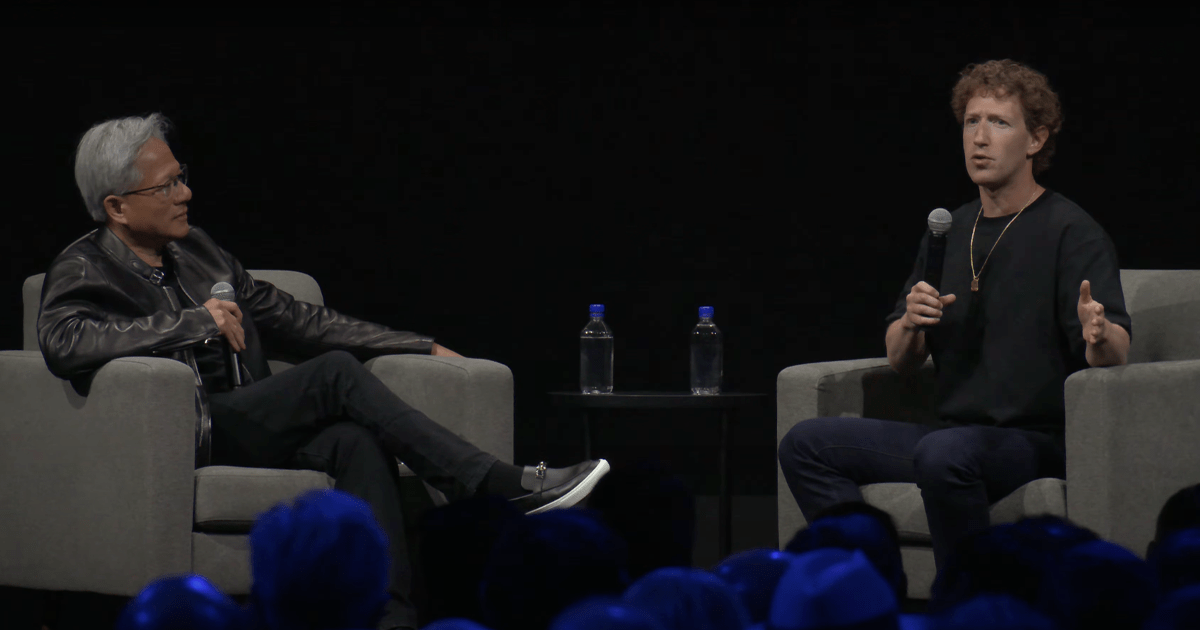

Meta has unveiled Segment Anything 2 (SA2), released under the name SAM 2, at the recent SIGGRAPH conference, in a session featuring Meta CEO Mark Zuckerberg and Nvidia CEO Jensen Huang. The model brings to video the kind of precise object segmentation that was previously practical mainly on still images, and it could change how we analyze and work with video content.

The Power of Segmentation

Understanding the term “segmentation” is key to appreciating this release. At its core, segmentation is a machine learning model’s ability to distinguish and label the different elements within a visual frame, down to which pixels belong to which object. In practice, the model can look at a picture and report “this is a dog, this is a tree behind the dog.” The technique has been developed over decades, but it has recently become much faster and more accurate, and SA2 is a significant milestone in that progress.
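To make the idea concrete, here is a minimal sketch of what a segmentation result can look like as data. It is not SA2's actual output format. The tiny hand-written label map, the `LABELS` table, and the helper names `binary_mask` and `pixel_counts` are all assumptions made up for illustration.

```python
from collections import Counter

# Hypothetical class ids and names for this toy example.
LABELS = {0: "background", 1: "dog", 2: "tree"}

# A tiny 4x6 "image": each cell holds the class id assigned to that pixel.
label_map = [
    [2, 2, 2, 0, 0, 0],
    [2, 2, 2, 0, 0, 0],
    [0, 2, 1, 1, 0, 0],
    [0, 0, 1, 1, 1, 0],
]

def binary_mask(label_map, class_id):
    """Return a 0/1 mask marking the pixels that belong to class_id."""
    return [[1 if px == class_id else 0 for px in row] for row in label_map]

def pixel_counts(label_map):
    """Count how many pixels carry each label name."""
    counts = Counter(px for row in label_map for px in row)
    return {LABELS[class_id]: n for class_id, n in counts.items()}

dog_mask = binary_mask(label_map, 1)
counts = pixel_counts(label_map)

for row in dog_mask:
    print(row)
print(counts)
```

The per-object mask (`dog_mask`) is the part that matters downstream: once you know exactly which pixels are "dog", you can crop, measure, or follow that object.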

Segment Anything 2: The Next Step

Meta’s latest model extends Segment Anything beyond static images to video. The original Segment Anything model could already be applied to video footage, but only by processing each frame individually, as if it were an unrelated image. SA2 handles video natively: users indicate what they want to follow within a video, and the model segments it across the footage without extensive frame-by-frame processing. That it arrives roughly a year after the original shows how quickly the field is moving.
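The difference between treating frames independently and following an object through a video can be sketched with a toy example. This is not how SA2 works internally. It is a deliberately simple stand-in, assuming binary frames, a single user "click" on the first frame, and a match-by-overlap rule. The function names `component`, `all_components`, `iou`, and `track` are hypothetical.

```python
def component(frame, start):
    """Flood-fill the 4-connected foreground region that contains start."""
    rows, cols = len(frame), len(frame[0])
    r0, c0 = start
    if frame[r0][c0] == 0:
        return set()
    seen, stack = {start}, [start]
    while stack:
        r, c = stack.pop()
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and frame[nr][nc] and (nr, nc) not in seen:
                seen.add((nr, nc))
                stack.append((nr, nc))
    return seen

def all_components(frame):
    """Return every connected foreground region in a frame."""
    found, visited = [], set()
    for r, row in enumerate(frame):
        for c, value in enumerate(row):
            if value and (r, c) not in visited:
                comp = component(frame, (r, c))
                visited |= comp
                found.append(comp)
    return found

def iou(a, b):
    """Intersection over union of two pixel sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def track(frames, click):
    """Prompt once on frame 0, then follow the object by best overlap."""
    mask = component(frames[0], click)
    masks = [mask]
    for frame in frames[1:]:
        candidates = all_components(frame)
        best = max(candidates, key=lambda comp: iou(comp, mask), default=set())
        # If nothing overlaps the previous mask, treat the object as lost.
        mask = best if iou(best, mask) > 0 else set()
        masks.append(mask)
    return masks

# Three 3x6 frames: one object slides right along the top row,
# while a second object stays put in the bottom-right corner.
frames = [
    [[1, 1, 0, 0, 0, 0], [0, 0, 0, 0, 0, 0], [0, 0, 0, 0, 1, 1]],
    [[0, 1, 1, 0, 0, 0], [0, 0, 0, 0, 0, 0], [0, 0, 0, 0, 1, 1]],
    [[0, 0, 1, 1, 0, 0], [0, 0, 0, 0, 0, 0], [0, 0, 0, 0, 1, 1]],
]

masks = track(frames, click=(0, 0))
for i, m in enumerate(masks):
    print(i, sorted(m))
```

The user points at the object once, and the selection carries forward through later frames. A per-frame approach would instead need a fresh prompt, or a separate matching step, for every frame.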

Real-World Applications and Scientific Insights

One of the most exciting aspects of SA2 is its potential across many fields. As Zuckerberg mentioned, the technology can support scientific research, letting scientists monitor ecological systems or study unique environments like coral reefs far more efficiently. Imagine tracking wildlife in its natural habitat or following individual organisms through underwater footage, all through video-based analysis and without training a model for each new task. This could streamline research methods and give scientists insights drawn directly from their video data.

The Robust Infrastructure Behind SA2

Of course, this capability comes with challenges. Processing video is inherently more computationally demanding than analyzing single images, so SA2 depends on both careful engineering and efficient use of resources. The model still requires substantial hardware, but gains in computational efficiency have made it viable. Those gains, made across the AI industry, mean that SA2 can run without overwhelming data centers, which would have been a serious obstacle for earlier models.

Open Source Philosophy and Community Engagement

One of the notable aspects of Meta’s approach is its commitment to openness. SA2, like its predecessor, is open and free to use. Meta is also releasing a dataset of 50,000 annotated videos created specifically for training. A question remains, however, about a further 100,000 videos that Meta says it also used in training: that data has not been released. There is speculation that it was sourced from public social media profiles.

Intentions Behind Transparency

While Meta celebrates its role as a leader in open AI, Zuckerberg candidly remarked that the motivation behind this openness isn’t purely benevolent. The push for open-source software is rooted in pragmatism: it creates an ecosystem in which these tools can improve and reach their full potential. The message is clear. Openness is not merely a virtue but also a strategy for building powerful ecosystems around sophisticated AI technologies.

Conclusion: A New Horizon in Video AI

Segment Anything 2 shows what is possible when cutting-edge technology meets a commitment to open-source development. It is a step forward in efficiency and effectiveness, and it opens the door to many real-world applications. As industries begin to adopt it, we can expect meaningful changes in how we understand and work with video content.
