
Overview

nach0 is a multi-domain, multi-task language model designed to work with both natural language and chemical notation. It is pre-trained on large amounts of unlabeled text from scientific literature, patents, and molecule strings, which gives it a broad base of chemical and linguistic knowledge.

After pre-training, we applied instruction tuning, in which task-specific instructions define each task. We used the NeMo framework for efficient parallel optimization of both the base and large versions of the model.

Our extensive experiments show that nach0 outperforms prevailing baselines on both single-domain and cross-domain tasks. It can also generate high-quality outputs in both molecular and textual form, which makes it suitable for multi-domain applications.

Tasks

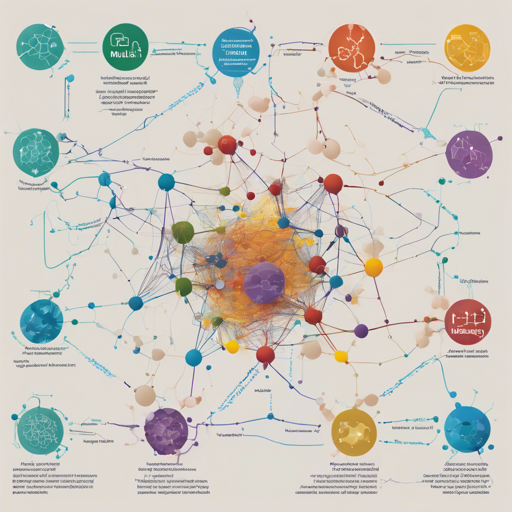

The datasets used for training and evaluation are color-coded by task type in the figure above. Yellow and blue mark single-domain datasets, which typically require regression or classification outputs in the target domain (natural language or SMILES strings). Gradients from yellow to blue mark cross-domain generation tasks, which convert natural language inputs into SMILES outputs or vice versa.

Model Usage Guide

To run inference with the base model, follow these steps:

- Preprocess the input by replacing the atom tokens with special tokens.

python

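import re

from rdkit import RDLogger
from rdkit.Chem import MolFromSmiles
from transformers import AutoModelForSeq2SeqLM, AutoTokenizer

# Silence RDKit parse warnings raised when ordinary words are tested as SMILES.
RDLogger.DisableLog('rdApp.*')

atoms_tokens = ['Ag', 'Al', 'As', 'Au', 'B', 'Ba', 'Bi', 'Br', 'C', 'Ca',
                'Cd', 'Cl', 'Co', 'Cr', 'Cs', 'Cu', 'F', 'Fe', 'Ga', 'Gd', 'Ge', 'H', 'Hg',
                'I', 'In', 'K', 'Li', 'M', 'Mg', 'Mn', 'Mo', 'N', 'Na', 'O', 'P', 'Pt', 'Ru',
                'S', 'Sb', 'Sc', 'Se', 'Si', 'Sn', 'V', 'W', 'Z', 'Zn', 'c', 'e', 'n', 'o', 'p', 's']
# Try longer symbols first so that 'Cl' is not split into 'C' and 'l'.
atoms_tokens = sorted(atoms_tokens, key=lambda s: len(s), reverse=True)

# SMILES tokenizer: brackets, bonds, charges, ring closures, then atom symbols.
SMI_REGEX_PATTERN = r"(\[|\]|\(|\)|\.|=|#|-|\+|\\|\/|:|~|@|\?|>>?|\*|\$|\%[0-9]{2}|[0-9]|" + \
                    '|'.join(atoms_tokens) + ")"
regex = re.compile(SMI_REGEX_PATTERN)

def clean_output_sequence(output_sequence):
    # Drop the end-of-sequence marker and unwrap <sm_X> tokens back to plain symbols.
    return output_sequence.replace('</s>', '').replace('<sm_', '').replace(' sm_', '').replace('>', '').strip()

def add_special_symbols(text):
    output = []
    for word in text.split():
        tokens = regex.findall(word)
        # Wrap a word only if it tokenizes completely and RDKit parses it as a molecule.
        if len(tokens) > 4 and (word == ''.join(tokens)) and MolFromSmiles(word):
            output.append(''.join(['<sm_' + t + '>' for t in tokens]))
        else:
            output.append(word)
    return ' '.join(output)

PROMPT = "Given the following reactants and reagents, please provide a possible product. CCN(CC)CC.CCN=C=NCCCN(C)C.CN(C)C=O.Cl.NC1=CC=C(Cl)C=C1N.O.O=C(O)CCCCCNC(=O)C=C1C2=CC=CC=C2C2=CC=CC=C12.OC1=CC=CC2=C1N=NN2.[Cl-].[Na+]"
PROMPT = add_special_symbols(PROMPT)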

- Load the model checkpoint.

python

model = AutoModelForSeq2SeqLM.from_pretrained('insilicomedicine/nach0_base')
tokenizer = AutoTokenizer.from_pretrained('insilicomedicine/nach0_base')

- Generate a response to the prompt and replace special tokens with corresponding atom tokens.

python

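# Tokenize the preprocessed prompt, truncating it to at most 512 tokens.
input_text_ids = tokenizer(PROMPT, padding='longest', max_length=512, truncation=True, return_tensors='pt')
# Sample a response with top-k and top-p (nucleus) sampling.
generated_text_ids = model.generate(**input_text_ids, do_sample=True, top_k=100, top_p=0.95, max_length=512)
generated_text = tokenizer.batch_decode(generated_text_ids, skip_special_tokens=True)[0]
# Convert the special tokens back to plain atom symbols.
generated_text = clean_output_sequence(generated_text)
# Example output (sampling is stochastic, so results can differ between runs):
# NC1=CC=C(Cl)C=C1NC(=O)CCCCCNC(=O)C=C1C2=CC=CC=C2C2=CC=CC=C12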
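
The wrapping and unwrapping of atom tokens can be checked without loading the model. The following is a minimal sketch rather than the authors' code: it uses a reduced, illustrative token pattern (`DEMO_PATTERN`), skips the RDKit validity check, and the names `wrap_tokens` and `unwrap_tokens` are hypothetical. It shows that a SMILES string survives the round trip through the special-token form.

```python
import re

# Illustrative subset of SMILES tokens (an assumption made for this sketch only).
# Two-letter symbols come first so that "Cl" is not split into "C" + "l".
DEMO_PATTERN = re.compile(r"(Cl|Br|\[|\]|\(|\)|\.|=|#|-|\+|[0-9]|[CNOcno])")


def wrap_tokens(smiles: str) -> str:
    """Wrap every token in <sm_...> markers, as the preprocessing step does."""
    tokens = DEMO_PATTERN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unsupported characters in {smiles!r}")
    return "".join(f"<sm_{t}>" for t in tokens)


def unwrap_tokens(wrapped: str) -> str:
    """Strip the markers again, modeled on clean_output_sequence."""
    return wrapped.replace("</s>", "").replace("<sm_", "").replace(">", "").strip()


smiles = "NC1=CC=C(Cl)C=C1N"
wrapped = wrap_tokens(smiles)
print(wrapped)
print(unwrap_tokens(wrapped) == smiles)
```

Because the unwrapping step deletes every `>` character, any `>` that belongs to the generated text itself is removed as well, which is worth keeping in mind when inspecting outputs.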

Usage for Large Model Version

To run inference with the large model, refer to the NeMo project documentation. The simplest approach is to run the script `megatron_t5_seq2seq_eval.py` or `megatron_t5_seq2seq_finetune.py`. Before running the script, prepare the input (prompts) and output (responses) files and adjust the config file accordingly.

- Prepare the input file with one prompt per line, preprocessing each prompt with the `add_special_symbols` function outlined above.

- In the configuration file, set the input and target files, configure the checkpoint path, enable prediction writing, and define the output file prefix.

After completing these steps, execute the script to perform inference!
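
The file-preparation step can be sketched in a few lines of Python. This is a sketch under assumptions, not part of NeMo: the function name `write_parallel_files` and any file names passed to it are hypothetical, and the `preprocess` argument stands in for the `add_special_symbols` function defined earlier. Prompts are preprocessed and written one per line; responses are written as given, on the matching line of the target file.

```python
from pathlib import Path
from typing import Callable, Sequence


def write_parallel_files(
    prompts: Sequence[str],
    responses: Sequence[str],
    src_path: str,
    tgt_path: str,
    preprocess: Callable[[str], str] = lambda text: text,
) -> int:
    """Write prompts and responses to line-aligned text files; return the pair count."""
    if len(prompts) != len(responses):
        raise ValueError("each prompt needs exactly one response")
    # Collapse internal whitespace so that every example stays on a single line.
    src_lines = [" ".join(preprocess(p).split()) for p in prompts]
    tgt_lines = [" ".join(r.split()) for r in responses]
    Path(src_path).write_text("".join(line + "\n" for line in src_lines), encoding="utf-8")
    Path(tgt_path).write_text("".join(line + "\n" for line in tgt_lines), encoding="utf-8")
    return len(src_lines)
```

Passing `preprocess=add_special_symbols` applies the atom-token wrapping to each prompt before it is written. Keeping both files line-aligned means that line N of the target file is the response for line N of the input file.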

Usage and License

Please note that all model weights are licensed exclusively for research purposes. The accompanying dataset is released under CC BY 4.0 and is restricted to non-commercial use. We strongly encourage all users to adhere to the highest ethical standards when using our models, ensuring fairness, transparency, and responsibility in their research. Any application that may cause harm or adversely affect society is strictly prohibited.

References

If you use our repository, please cite the following paper:

@article{D4SC00966E,
  author = {Livne, Micha and Miftahutdinov, Zulfat and Tutubalina, Elena and Kuznetsov, Maksim and Polykovskiy, Daniil and Brundyn, Annika and Jhunjhunwala, Aastha and Costa, Anthony and Aliper, Alex and Aspuru-Guzik, Alán and Zhavoronkov, Alex},
  title = {nach0: multimodal natural and chemical languages foundation model},
  journal = {Chem. Sci.},
  year = {2024},
  volume = {15},
  issue = {22},
  pages = {8380-8389},
  publisher = {The Royal Society of Chemistry},
  doi = {10.1039/D4SC00966E},
  url = {http://dx.doi.org/10.1039/D4SC00966E}
}

Troubleshooting Ideas

If you encounter issues while using the model, consider the following troubleshooting tips:

- Ensure you have all necessary dependencies installed. Any missing libraries could lead to runtime errors.

- Check the versions of libraries such as `transformers` and `rdkit` to ensure compatibility with the model.

- Double-check your input formatting. Incorrect formatting could lead to undesirable results.

- If any errors arise while loading the model, ensure your checkpoint paths are correct.
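
The dependency and version checks above can be scripted with the standard library. This is a minimal sketch, assuming the packages were installed under the distribution names `transformers`, `rdkit`, and `torch`; these names, and the helper `installed_versions`, are assumptions and may need adjusting for your environment.

```python
from importlib import metadata
from typing import Dict, Optional, Sequence


def installed_versions(packages: Sequence[str]) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None if it is missing."""
    versions: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


# Distribution names are assumptions; adjust them to match your environment.
for name, version in installed_versions(["transformers", "rdkit", "torch"]).items():
    print(f"{name}: {version or 'not installed'}")
```

A `not installed` entry points to a missing dependency, while an unexpected version number is a reasonable first thing to compare against the versions a working setup uses.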
