Welcome to deep reinforcement learning with Stable-Baselines3! In this article, we’ll load a pretrained PPO-MLP agent (Proximal Policy Optimization with a multi-layer perceptron policy) and let it play in the LunarLander-v2 environment.

Understanding the Background

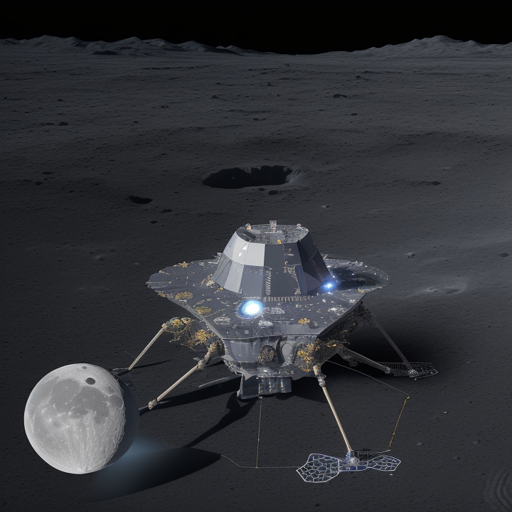

LunarLander-v2 is a popular reinforcement learning benchmark that simulates landing a lunar module on the moon’s surface. At each step the agent observes an 8-dimensional state (position, velocity, angle, angular velocity, and leg contact) and picks one of four discrete actions: do nothing, fire the left engine, fire the main engine, or fire the right engine. The goal is to land safely on the pad while using as little fuel as possible. PPO is a common choice for this environment because it limits how far each update can move the policy, which keeps training stable.
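
To make the stability idea concrete, here is a minimal sketch of PPO’s clipped surrogate objective for a single sample, written in plain Python. It is an illustration, not Stable-Baselines3’s implementation; the function name `clipped_surrogate` is hypothetical, and the 0.2 clip range is an assumption chosen to match the commonly used default.

```python
def clipped_surrogate(ratio, advantage, clip_eps=0.2):
    """PPO clipped objective for one sample (a quantity to maximize).

    ratio:     new_policy_prob / old_policy_prob for the action taken
    advantage: estimated advantage of that action
    clip_eps:  how far the ratio may drift from 1 before it is clipped
    """
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    # Taking the minimum makes the objective pessimistic: the policy gains
    # nothing from pushing the ratio outside [1 - clip_eps, 1 + clip_eps].
    return min(ratio * advantage, clipped_ratio * advantage)


# A good action (positive advantage) whose probability grew a lot: capped.
print(clipped_surrogate(1.5, 1.0))
# A bad action (negative advantage) whose probability grew: not capped,
# so the full penalty still applies.
print(clipped_surrogate(1.5, -1.0))
```

Because the gain from any single update is capped, the policy cannot change drastically in one step, which is the source of the stability mentioned above.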

Setting Up Your Environment

Before we jump into the coding part, ensure that you have the necessary packages installed:

- Python

- Stable-Baselines3

- huggingface_sb3 (the Hugging Face Hub integration for Stable-Baselines3)
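
To see which of these are already available in your current Python environment, a small check along these lines can help. It is a sketch using only the standard library; the module names listed are the import names used by the code in this article, and `missing_modules` is a hypothetical helper.

```python
from importlib.util import find_spec

# Import names (not pip package names) that this article's code relies on.
REQUIRED_MODULES = ["stable_baselines3", "huggingface_sb3"]


def missing_modules(names):
    """Return the top-level module names that cannot be found by this interpreter."""
    return [name for name in names if find_spec(name) is None]


missing = missing_modules(REQUIRED_MODULES)
print("Missing modules:", ", ".join(missing) if missing else "none")
```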

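You can install them from your terminal or command prompt. LunarLander also depends on the Box2D physics engine, which the second command installs through Gymnasium’s box2d extra (this assumes a recent Stable-Baselines3 release built on Gymnasium):

pip install stable-baselines3 huggingface-sb3

pip install "gymnasium[box2d]"

Creating a PPO-MLP Agent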

Now let’s load a pretrained PPO-MLP agent and run it in LunarLander-v2.

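from huggingface_sb3 import load_from_hub
from stable_baselines3 import PPO
from stable_baselines3.common.env_util import make_vec_env

# Download the pretrained checkpoint from the Hugging Face Hub.
# load_from_hub returns the local path of the downloaded file, not a model.
# "PPO-MLP" and "LunarLander-v2" are the placeholders used in this article;
# substitute the repository id and checkpoint filename of the model you want.
checkpoint = load_from_hub(repo_id="PPO-MLP", filename="LunarLander-v2")
model = PPO.load(checkpoint)

# Create the environment (the id string alone is not an environment).
# render_mode="human" opens a window; this assumes a Gymnasium-based install.
env = make_vec_env("LunarLander-v2", n_envs=1, env_kwargs={"render_mode": "human"})

# Run the agent; the vectorized environment resets itself when an episode ends.
obs = env.reset()
for _ in range(1000):
    action, _states = model.predict(obs)
    obs, rewards, dones, info = env.step(action)
    env.render()

Explanation of the Code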

To better understand the code snippet provided, let’s use an analogy of a chef in a kitchen:

- Importing Libraries: Think of this as gathering all your ingredients and tools before starting to cook. You’re making sure you have the right resources to create a delicious dish.

- Loading the Model: This is like pulling out a recipe that has already been tested. The checkpoint downloaded from the Hub holds the trained policy weights, so you can reuse them without training anything yourself.

- Setting Up the Environment: Preparing your kitchen to start cooking. This means creating the space (the LunarLander-v2 environment) where your agent will ‘cook’ (perform actions).

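- Predicting Actions: Here, the agent looks at the current observation and decides what to do next based on its learned experience, just like a chef tasting a dish and deciding how to season it. The chosen action is then passed to the environment, which returns the next observation and a reward.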

- Rendering the Environment: This involves presenting your dish to guests, showcasing what the agent is actively doing in the simulation.
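
The predict-then-step cycle described in the list above can be sketched as a small helper that plays one episode and reports the total reward. This is an illustration under stated assumptions: `run_episode` is a hypothetical name, the environment is assumed to follow the Gymnasium single-environment API (`reset` returns `(obs, info)` and `step` returns five values), and the model is anything with a Stable-Baselines3-style `predict` method.

```python
def run_episode(model, env, max_steps=1000, seed=None):
    """Play one episode and return (total_reward, steps_taken)."""
    obs, _info = env.reset(seed=seed)
    total_reward = 0.0
    for step in range(1, max_steps + 1):
        # Ask the policy for an action given the current observation.
        action, _state = model.predict(obs, deterministic=True)
        # Apply the action and receive the next observation and reward.
        obs, reward, terminated, truncated, _info = env.step(action)
        total_reward += float(reward)
        # Stop when the lander has landed or crashed, or a time limit was hit.
        if terminated or truncated:
            return total_reward, step
    return total_reward, max_steps
```

With a loaded PPO model and an environment created by `gymnasium.make`, calling `run_episode(model, env)` a few times gives a quick feel for how consistently the agent lands. Depending on the installed Gymnasium version, the environment id may be `LunarLander-v3` rather than `LunarLander-v2`.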

Troubleshooting Tips

If you encounter any issues while using this setup, consider the following troubleshooting steps:

- Check if all necessary libraries are installed correctly. If you see any “ModuleNotFoundError,” try re-installing the required package.

- Make sure that you have access to the Hugging Face model hub and that your internet connection is stable.

- If you’re facing rendering issues, ensure your graphical libraries are up to date, as this can affect how the LunarLander environment displays.

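- For performance and reward issues, keep in mind that a pretrained agent’s behavior is fixed by its checkpoint. If you train or fine-tune an agent yourself, try adjusting hyperparameters such as the learning rate and batch size in the PPO model.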
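
The last tip applies when you train an agent yourself. A minimal sketch of how the learning rate and batch size might be overridden is shown below. The helper names `build_ppo_kwargs` and `train_ppo` and the 100,000-step budget are hypothetical; the starting values 3e-4 and 64 are assumed to match Stable-Baselines3’s PPO defaults, and training requires stable-baselines3 with Box2D installed.

```python
# Starting values for the two hyperparameters mentioned above.
DEFAULT_PPO_KWARGS = {"learning_rate": 3e-4, "batch_size": 64}


def build_ppo_kwargs(**overrides):
    """Return the default PPO keyword arguments with any overrides applied."""
    kwargs = dict(DEFAULT_PPO_KWARGS)  # copy so the defaults stay untouched
    kwargs.update(overrides)
    return kwargs


def train_ppo(env_id="LunarLander-v2", total_timesteps=100_000, **overrides):
    """Train a PPO agent with an MLP policy and return the trained model."""
    # Imported here so the helper above works without the library installed.
    from stable_baselines3 import PPO

    model = PPO("MlpPolicy", env_id, verbose=1, **build_ppo_kwargs(**overrides))
    model.learn(total_timesteps=total_timesteps)
    return model


# Inspect the settings a run with a lower learning rate would use.
print(build_ppo_kwargs(learning_rate=1e-4))
```

Calling `train_ppo(learning_rate=1e-4)` would then start a training run with the lower learning rate while keeping the batch size at its starting value.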

The Conclusion

With this guide, you should be well-equipped to start your journey in deep reinforcement learning using the PPO-MLP agent in the LunarLander-v2 environment. Experiment, learn, and don’t hesitate to tweak parameters to see how they influence your agent’s performance.
