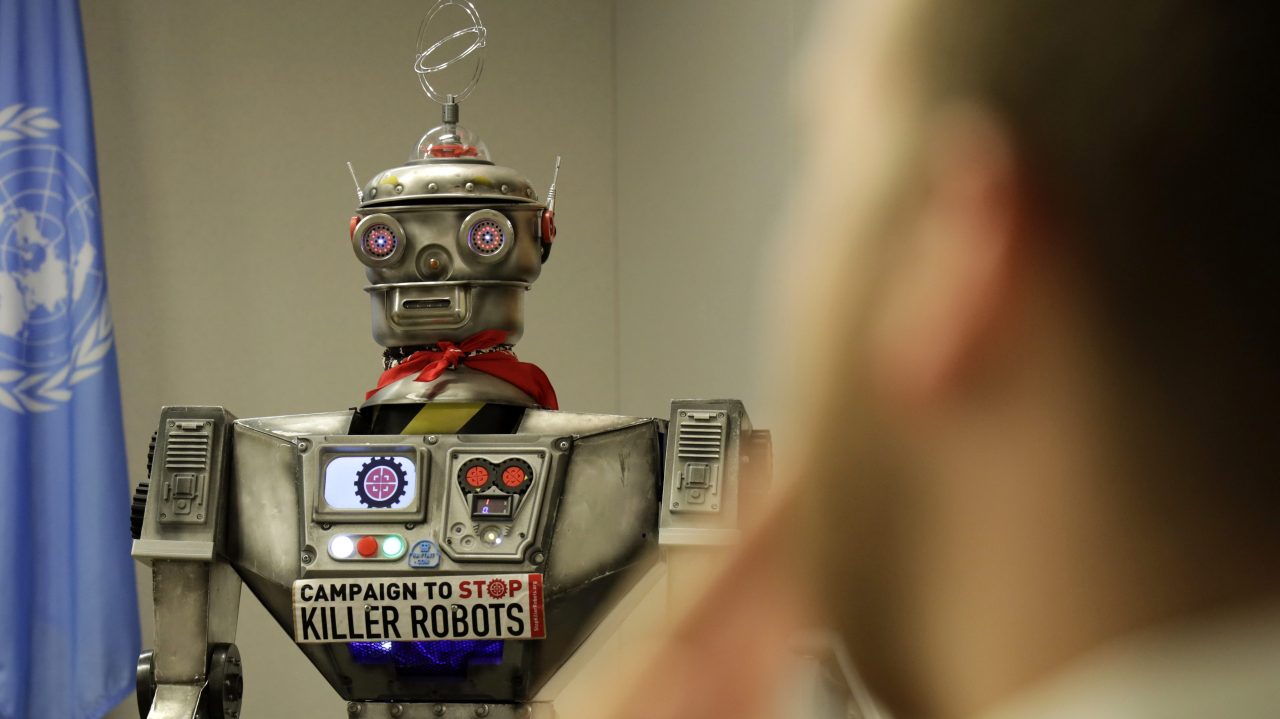

As technology evolves, so do its implications for daily life, particularly in law enforcement. Recently, a San Francisco police policy on “killer robots” stirred intense debate among residents, civic leaders, and activists. Initially approved, the policy has now been put on hold, opening a critical dialogue on the ethics and ramifications of deploying robots capable of lethal force in high-stakes situations. This post examines the controversy, the reasons behind the backlash, and what it means for the future of policing.

The Policy Unveiled

The original policy allowed the San Francisco Police Department (SFPD) to use robots equipped with explosives in life-threatening scenarios. The Board of Supervisors voted to approve it, with supporters arguing that the technology could be a valuable tool for mitigating imminent dangers. However, the specifics of the proposal raised red flags:

- Robots could potentially be deployed in various situations, including criminal apprehensions and critical incidents.

- The use of lethal force was permissible only when the risk of loss of life to members of the public or officers was deemed “imminent.”

- Only certain functions of the robots were sanctioned, positioning them largely for emergency responses.

This policy, however, had little precedent, fueling fears that it could normalize the use of lethal force by machines in future law enforcement actions.

Community Backlash and Ethical Considerations

Opposition to the proposal quickly materialized, driven by the broader implications of robotic law enforcement in society. Critics articulated their concerns about:

- Autonomy in Decision-Making: Can machines adequately assess moral, ethical, and situational nuances to make life-and-death decisions?

- Escalation of Violence: Does the adoption of “killer robots” pave the way for increased militarization of the police?

- Accountability: If a robot is misused or a deployment goes wrong, who bears responsibility for the unintended consequences?

Hillary Ronen, one of the supervisors who opposed the policy in the original vote, said that “common sense prevailed” when the measure was paused. Her reaction underscores a critical point: the rise of technology in policing raises serious ethical dilemmas that have yet to be resolved.

The Road Ahead: What’s Next for the Policy?

The subsequent decision to halt the approval sends a clear message: stakeholders must deliberate more thoroughly about the role of technology in law enforcement. The policy has been referred back to committee for further evaluation, so that diverse perspectives can be taken into account before any definitive decision is made. Lawmakers and community leaders will need to grapple with the following:

- How can public safety be balanced with the ethical use of technology?

- What frameworks can be established to govern the implementation of robotic capabilities in policing?

Conclusion: A Call for Thoughtful Oversight

As cities weigh the potential benefits and pitfalls of robotic law enforcement, the San Francisco case represents a microcosm of a much larger dilemma faced across the globe. While the prospect of technology augmenting public safety is enticing, safeguarding civil rights and ethical standards must remain the priority. If policymakers are to enable progress, they must do so with a balanced approach and clear accountability to the public.
