Communication platforms often struggle with the darker side of user-generated content. Telegram, a messaging service that prides itself on privacy and security, has recently drawn attention for a very different reason. An analysis by The New York Times found that the platform is “inundated” with illegal and extremist activity, raising critical questions about its content moderation practices and the responsibilities of digital communication providers.

The Extent of the Problem

The investigation examined more than 3.2 million messages across 16,000 channels and found harmful content to be widespread. Key findings include:

- About 1,500 channels are linked to white supremacist ideologies.

- Dozens of channels operate as clandestine markets selling weapons.

- Drug trafficking is rampant, with at least 22 channels promoting the delivery of MDMA, cocaine, and heroin.

These findings are troubling because content that spreads quickly online can have real-world consequences. Extremist messaging circulating at this scale could fuel harmful activity and affect communities around the world.

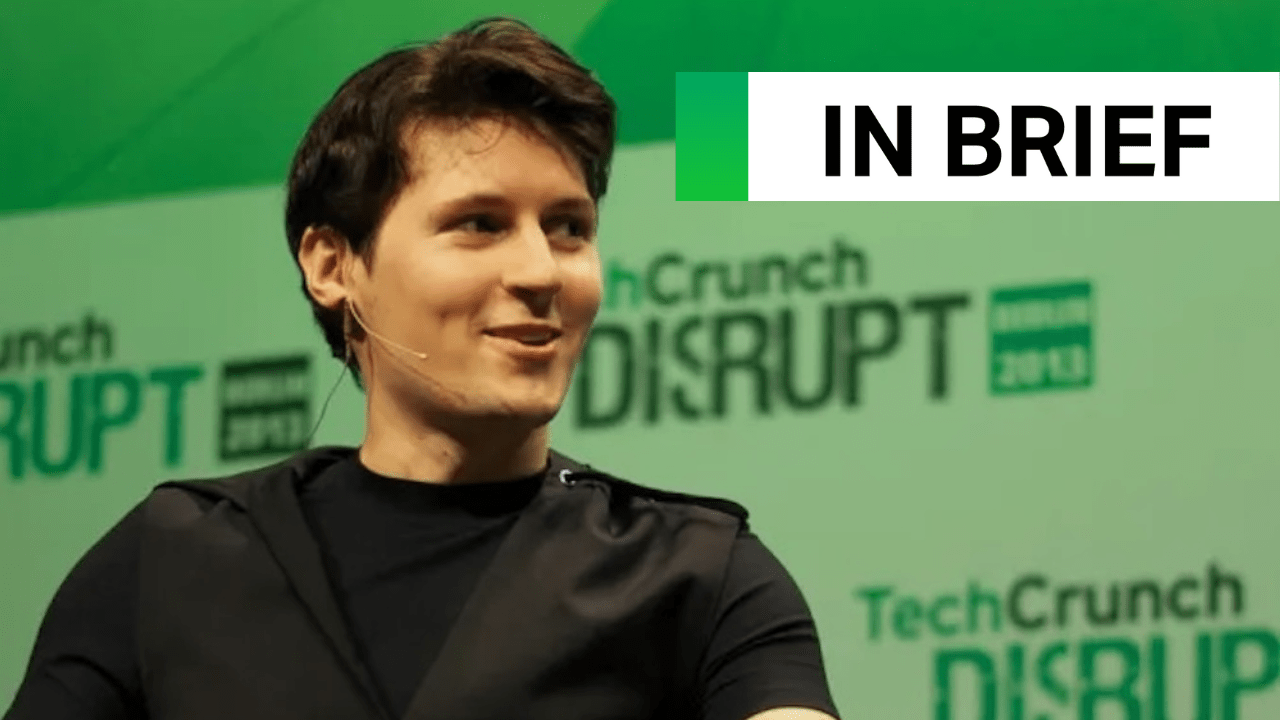

Pavel Durov: The Face of Controversy

Pavel Durov, founder and CEO of Telegram, is at the center of this controversy, especially following his recent arrest in France. Authorities suggest that Durov’s alleged negligence in content moderation makes him complicit in the illegal activity proliferating on the platform. In response, Durov argued on social media that the laws being used to hold him liable are outdated: “Using laws from the pre-smartphone era to charge a CEO with crimes committed by third parties on the platform he manages is a misguided approach.”

Content Moderation: An Ongoing Dilemma

The situation underscores a central dilemma for many tech companies: balancing user privacy against platform safety. Telegram recently updated its website to make it easier to report abuse, which suggests the company may be taking steps to improve its oversight. But will these measures be sufficient? Critics question how effectively platforms like Telegram can monitor content without infringing on users’ freedoms.

The Future of Communication Platforms

The implications of this controversy extend beyond Telegram. As more users migrate to platforms that emphasize privacy, there is a genuine risk that these spaces will inadvertently foster extremist communities and illegal activity. An increasingly interconnected society needs to rethink how these platforms operate and to develop more robust methods of preventing abuse while respecting user rights.

Conclusion: Towards a Safer Digital Environment

Telegram’s recent challenges highlight an urgent need for policy reform in online communication. The tension between free speech and safety requires careful consideration and action from both platform owners and regulators. As extremist content continues to affect our communities, messaging platforms need to develop more effective moderation strategies.
