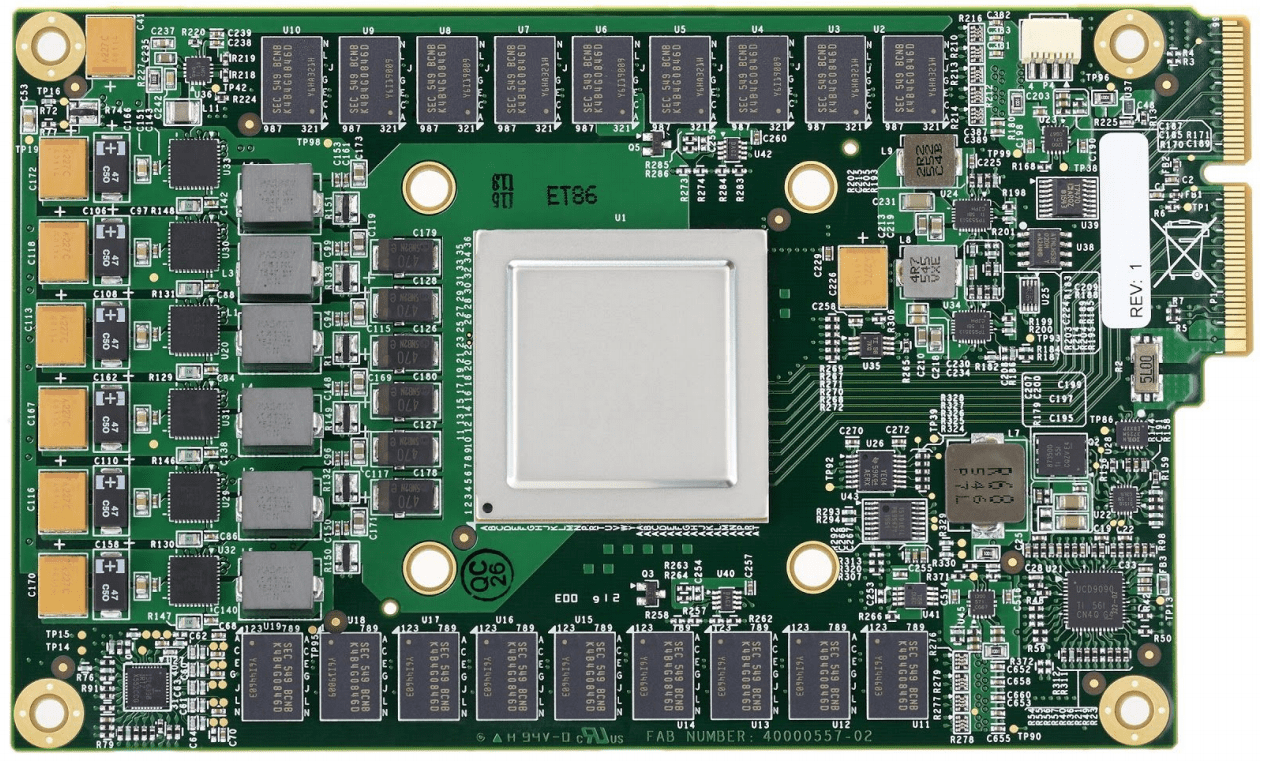

In an era defined by rapid advances in artificial intelligence, the tools and technologies behind those advances are constantly evolving. One of the most notable developments has come from Google, whose investment in machine learning led to the Tensor Processing Unit (TPU), a custom chip built to accelerate neural network workloads. TPUs have caught the attention of researchers and developers alike because, on the workloads Google has measured, they substantially outperform contemporary CPUs and GPUs. Let’s look at the key features of TPUs, their impact on machine learning, and what they suggest about the future of AI hardware.

Understanding TPUs: The Backbone of Google’s AI

Google announced TPUs at its I/O developer conference in May 2016. They are designed specifically to accelerate machine learning workloads and were built with Google’s open-source TensorFlow framework in mind. Their headline advantage is speed: in Google’s published analysis of its production neural network inference workloads, the first-generation TPU was on average about 15 to 30 times faster than the contemporary GPU and CPU it was compared against. A gain of that size matters most in production environments, where processing efficiency translates directly into cost savings and better performance.
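Speedup figures like these are ratios: an accelerator’s throughput on a workload divided by the baseline’s throughput on the same workload. The Python sketch below shows one common way to summarize such ratios across several benchmarks, the geometric mean. The workload names and throughput numbers are hypothetical placeholders, not Google’s measurements, and the sketch does not claim to reproduce the methodology of Google’s analysis.

```python
import math
from typing import Dict, Iterable, Tuple

def speedup(baseline_throughput: float, accel_throughput: float) -> float:
    """Ratio of accelerator throughput to baseline throughput on one workload."""
    if baseline_throughput <= 0 or accel_throughput <= 0:
        raise ValueError("throughputs must be positive")
    return accel_throughput / baseline_throughput

def geometric_mean(values: Iterable[float]) -> float:
    """Geometric mean: the n-th root of the product, computed via logarithms."""
    vals = list(values)
    if not vals:
        raise ValueError("need at least one value")
    return math.exp(sum(math.log(v) for v in vals) / len(vals))

# Hypothetical inferences per second for three made-up workloads,
# given as (baseline, accelerator). These are NOT published TPU numbers.
benchmarks: Dict[str, Tuple[float, float]] = {
    "mlp_a": (1_000.0, 20_000.0),
    "lstm_b": (500.0, 5_000.0),
    "cnn_c": (2_000.0, 80_000.0),
}

per_workload = {name: speedup(base, accel) for name, (base, accel) in benchmarks.items()}
overall = geometric_mean(per_workload.values())

if __name__ == "__main__":
    for name, s in per_workload.items():
        print(f"{name}: {s:.1f}x")
    print(f"geometric mean speedup: {overall:.1f}x")
```

The geometric mean is a common choice for averaging ratios because swapping which system counts as the baseline simply inverts the result; an arithmetic mean of ratios does not have that property.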

Efficiency and Performance: The TeraOps/Watt Advantage

Power efficiency is the TPU’s other major advantage. In the same analysis, the TPU delivered 30 to 80 times more TeraOps/Watt (trillions of operations per second per watt) than the CPU and GPU it was compared against. This efficiency lets data centers take on larger workloads without a proportional rise in energy costs, which makes TPUs especially valuable for organizations operating at scale.
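TeraOps/Watt is sustained throughput in trillions of operations per second divided by power draw in watts; because a watt is one joule per second, it also equals trillions of operations per joule. The Python sketch below computes the metric and the energy a fixed job would consume at two efficiency levels. The chip figures and job size are hypothetical, chosen only to keep the arithmetic easy to follow.

```python
def tops_per_watt(ops_per_second: float, watts: float) -> float:
    """Tera-operations per second per watt (equivalently, tera-ops per joule)."""
    if watts <= 0:
        raise ValueError("power must be positive")
    return ops_per_second / 1e12 / watts

def energy_joules(total_ops: float, efficiency_tops_per_watt: float) -> float:
    """Energy needed to perform total_ops at a given TOPS/W efficiency."""
    return total_ops / (efficiency_tops_per_watt * 1e12)

# Hypothetical chips, NOT measured TPU, GPU, or CPU figures.
accelerator = tops_per_watt(ops_per_second=60e12, watts=40.0)  # 1.5 TOPS/W
baseline = tops_per_watt(ops_per_second=3e12, watts=100.0)     # 0.03 TOPS/W

ratio = accelerator / baseline  # how many times more work per joule
workload_ops = 1e18             # a hypothetical job of 10^18 operations
JOULES_PER_KWH = 3.6e6

if __name__ == "__main__":
    print(f"efficiency ratio: {ratio:.0f}x")
    print(f"accelerator energy: {energy_joules(workload_ops, accelerator) / JOULES_PER_KWH:.2f} kWh")
    print(f"baseline energy:    {energy_joules(workload_ops, baseline) / JOULES_PER_KWH:.2f} kWh")
```

For a fixed amount of work, energy scales inversely with TOPS/W, so a chip with N times the efficiency needs roughly 1/N of the energy for the same job.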

Machine Learning Workloads: Optimizing for More than Just CNNs

- Multi-layer Perceptrons (MLPs): Not all machine learning applications rely heavily on convolutional neural networks (CNNs), the models most often associated with image recognition. Google’s analysis found that MLPs made up the majority of the neural network inference workload in its data centers, while CNNs accounted for only a small share. This challenges the notion that machine learning accelerators should be designed primarily around CNNs.

- Diverse Applications: Because MLPs, CNNs, and other neural networks reduce largely to dense matrix operations, TPUs can serve a wide range of models rather than being tied to a single application area.
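To see why MLPs suit a matrix-oriented accelerator, note that an MLP’s forward pass is a sequence of dense matrix-vector products, each followed by a bias and a nonlinearity. The pure-Python sketch below spells this out; the layer sizes and weights are hypothetical, and a real deployment would use a framework such as TensorFlow rather than hand-written loops. The multiply-accumulate operations inside `matvec` are the kind of work the TPU’s matrix multiply unit is built to perform in parallel in hardware.

```python
from typing import List, Sequence, Tuple

Vector = List[float]
Matrix = List[List[float]]
Layer = Tuple[Matrix, Vector]  # (weights with one row per output unit, biases)

def matvec(weights: Matrix, x: Sequence[float]) -> Vector:
    # Each output is a dot product: a chain of multiply-accumulate operations.
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def relu(v: Sequence[float]) -> Vector:
    # Elementwise nonlinearity: negative values are clamped to zero.
    return [max(0.0, a) for a in v]

def mlp_forward(layers: Sequence[Layer], x: Sequence[float]) -> Vector:
    """Run x through fully connected layers, applying ReLU on all but the last."""
    out: Vector = list(x)
    for i, (weights, biases) in enumerate(layers):
        z = [a + b for a, b in zip(matvec(weights, out), biases)]
        out = relu(z) if i < len(layers) - 1 else z
    return out

# A tiny hypothetical network: 2 inputs -> 2 hidden units -> 1 output.
layers: List[Layer] = [
    ([[1.0, -1.0], [0.5, 2.0]], [0.0, -1.0]),
    ([[1.0, 1.0]], [0.5]),
]

if __name__ == "__main__":
    print(mlp_forward(layers, [2.0, 1.0]))  # [3.5]
```

Processing many inputs at once turns these matrix-vector products into matrix-matrix products, which is what lets an accelerator keep its arithmetic units busy.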

The Journey to Custom ASICs: Google’s Vision and Execution

Google’s interest in specialized hardware dates back to 2006, when it first discussed deploying GPUs, FPGAs, or custom ASICs in its data centers. At the time, the few applications that could benefit from special hardware could run on the spare capacity of Google’s existing data centers, so the case for new chips was weak. That changed in 2013, when Google projected that growing use of deep neural networks (DNNs) could double the computational demands on its data centers. It launched a high-priority project to build a custom ASIC for inference, with the goal of improving cost-performance tenfold over GPUs. That early investment has helped establish Google as a leader in machine learning infrastructure.

Future Implications: What Lies Ahead?

Although Google does not sell its data center TPUs and offers them only through its own infrastructure and cloud service, their influence extends across the tech industry. Google’s design is likely to inspire other organizations to build their own accelerators that push performance and efficiency further. As demand for machine learning grows, so will the need for custom hardware that can keep pace with larger datasets and more complex models.

Conclusion: A Leap Ahead in AI Processing

In conclusion, Google’s Tensor Processing Units represent a significant advance in AI hardware. By focusing on speed and power efficiency, TPUs provide a strong foundation for the next generation of machine learning applications. As other companies work to catch up or innovate further, the era of custom hardware for AI processing appears to be just beginning.

At fxis.ai, we believe that such advancements are crucial for the future of AI, as they enable more comprehensive and effective solutions. Our team is continually exploring new methodologies to push the envelope in artificial intelligence, ensuring that our clients benefit from the latest technological innovations. For more insights, updates, or to collaborate on AI development projects, stay connected with fxis.ai.